Last updated: May 20, 2026

Claude Code is Anthropic’s terminal-native AI coding agent, the one that 46% of developers call their “most loved” tool. It is also the tool where one developer burned $1,619 in 33 days without realizing it. Both facts are true. That gap is the entire story.

BRIEFING SUMMARY

Claude Code is the most technically capable AI coding agent in 2026. SWE-bench Verified 80.8%, 1M token context window, MCP integrations that turn it into a full automation platform. No other tool touches it for complex, multi-file, agentic work.

But most developers burn money and time on it for preventable reasons. They treat the $20/month Pro tier as a real price. They use it for tasks that Copilot handles in a keystroke. They drown in approval prompts they could have configured away. They run Agent Teams without understanding token math. And they lock their workflow into a single model vendor in the fastest-moving market in tech.

The right stack for most developers: Cursor ($20/mo) or GitHub Copilot ($10/mo) for daily editing. Claude Code on API pay-as-you-go for the 2-3 heavy sessions per week that actually need a terminal agent. Don’t use one tool for both jobs.

What Is Claude Code, Actually?

Claude Code is a CLI tool that runs in your terminal. Not an IDE plugin. Not a code editor. A terminal agent powered by Anthropic’s Claude models (Opus 4.6, Sonnet 4.6, Haiku 4.5) that can read your entire codebase, edit files across directories, run shell commands, execute tests, and submit pull requests. If you’re looking for a review of Claude itself as a chat assistant rather than a coding tool, see our Claude AI review.

It launched in February 2025. By March 2026, it had a 1 million token context window in GA, a growing MCP server ecosystem (GitHub, GitLab, Slack, Datadog, Linear, Supabase, Docker, PostgreSQL), and a research preview of Agent Teams for multi-agent parallel execution. As of April 2026, Claude Code runs primarily on Claude Opus 4.7, which introduced silent default changes to tool use, adaptive thinking, and effort levels. Our deep analysis of Opus 4.7 catalogues the eight admissions buried in Anthropic’s own documentation that directly affect Claude Code workflows.

The Pragmatic Engineer’s 2026 developer survey (906 respondents) put Claude Code at 46% “most loved,” compared to 19% for Cursor and 9% for GitHub Copilot. At the same time, Theo from t3.gg posted that Claude Code is “basically unusable at this point,” racking up 2,700 likes.

Both camps are right. It depends entirely on what you’re using it for, and whether you’ve fallen into any of the five traps below.

What Claude Code Actually Does Well

Before we get to the mistakes, the tool earned that 46% rating for real reasons. Skipping this part would be dishonest.

The Read-Execute-Fix Loop

No other coding tool does this natively. Claude Code lives in your terminal, which means it can run a build, read the error output, modify the code, rerun the build, and iterate until it passes. All without you touching the keyboard. Cursor can edit code. Copilot can suggest code. Claude Code can run, fail, diagnose, fix, and verify. That loop is the difference between a suggestion engine and an agent.

Codebase-Scale Understanding

The 1M token context window isn’t just a spec number. It means Claude Code can hold your entire project architecture in working memory while making changes across dozens of files. For large refactors, framework migrations, or building new features that touch multiple modules, this is a genuine capability that Cursor and Copilot don’t match.

The MCP Ecosystem

This is Claude Code’s actual moat. Model Context Protocol lets you connect external tools directly into the agent’s workflow. GitHub for PR management. Linear for ticket tracking. Datadog for log analysis. Supabase for database operations. Slack for team notifications.

A real workflow: you tell Claude Code to review a PR, it pulls the diff from GitHub MCP, runs your test suite, checks Datadog for related errors, comments on the PR with findings, and moves the Linear ticket to “In Review.” That’s not autocomplete. That’s automation.

No other individual developer tool has this breadth of integration. Cursor and Copilot are editors. Claude Code is closer to a junior DevOps engineer that works in your terminal.

SWE-bench: The Number in Context

Opus 4.6 scores 80.8% on SWE-bench Verified. That’s the highest score among coding agents in 2026. But SWE-bench measures the ability to resolve real GitHub issues in controlled environments. Anthropic themselves acknowledge that infrastructure configuration alone can swing scores by several percentage points. Academic research from 2026 suggests public coding benchmarks may overestimate agent capability by 20-50% compared to real-world conditions.

The score tells you Claude Code is the strongest reasoner for complex, well-defined coding problems. It doesn’t tell you it’s the best tool for writing CSS or fixing import errors.

Now, the five mistakes.

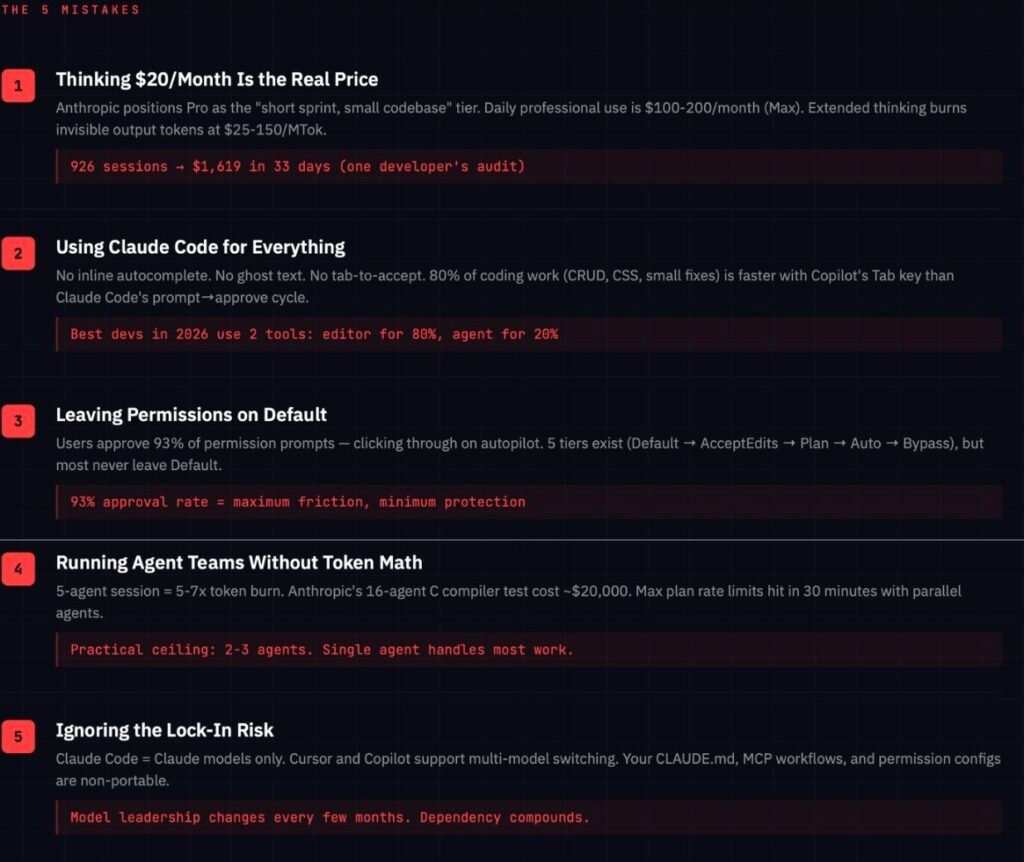

Mistake #1: Thinking $20/Month Is the Real Price

This is the part every other review glosses over.

Claude Code access starts at $20/month with the Pro plan. That number shows up on the pricing page, and that’s where most people stop reading. Here’s what Anthropic’s own product positioning actually says about Pro: it’s for developers “working on small-to-medium codebases” doing “focused coding sessions.”

The plan designed for daily, serious use? Max. Starting at $100/month for 5x Pro’s usage limits. Or $200/month for 20x. Anthropic isn’t hiding this. It’s right there in the tier descriptions. But the marketing leads with $20.

How the Token Economics Actually Work

Pro gives you roughly 44,000 tokens per 5-hour sliding window (this number is widely reported but not officially published, which is itself a red flag). That sounds like a lot until you understand what eats tokens.

Extended thinking is the silent budget killer. When Claude reasons through a complex problem, it generates thinking tokens that you pay for at output rates. On Opus 4.6, output tokens cost $25 per million tokens. In Fast Mode, that jumps to $150 per million. You get billed for the full thinking process, but you only see a summarized version. A single 32K thinking block costs $0.80 in standard mode and $4.80 in Fast Mode. Stack a few of those in a session and your 5-hour window evaporates.
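The thinking-token arithmetic is easy to check yourself. A minimal sketch, using only the per-million output prices quoted above; the function and constant names are illustrative, not part of any Anthropic SDK:

```python
# Output prices per million tokens, as quoted above for Opus 4.6.
STANDARD_OUTPUT_PER_MTOK = 25.00
FAST_OUTPUT_PER_MTOK = 150.00


def thinking_cost(thinking_tokens: int, price_per_mtok: float) -> float:
    """Dollar cost of a thinking block billed at the output rate."""
    return thinking_tokens * price_per_mtok / 1_000_000


standard = thinking_cost(32_000, STANDARD_OUTPUT_PER_MTOK)
fast = thinking_cost(32_000, FAST_OUTPUT_PER_MTOK)
print(f"standard: ${standard:.2f}, fast: ${fast:.2f}")
```

Swap in your own token counts to see how quickly a few long reasoning passes add up.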

Context window fills compound the cost. The 1M token context window is real, but at 83.5% usage, Claude triggers compaction and starts dropping older conversation context. You’re paying to fill a massive window, then losing parts of what you paid for.
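To put the compaction point in token terms, here is a rough sketch. It assumes the 83.5% figure applies to the full 1M window; the helper name is hypothetical:

```python
CONTEXT_WINDOW = 1_000_000
# 83.5% expressed as parts per thousand to keep the math in integers.
COMPACTION_PER_MILLE = 835


def tokens_until_compaction(tokens_used: int) -> int:
    """Tokens left before the compaction threshold is reached."""
    limit = CONTEXT_WINDOW * COMPACTION_PER_MILLE // 1000
    return max(0, limit - tokens_used)


print(tokens_until_compaction(0))        # headroom in a fresh session
print(tokens_until_compaction(600_000))  # headroom after a long session
```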

Real Developer Spend Data

The range is staggering. One developer tracked 926 sessions over 33 days and found $1,619 in API costs, driven by Claude repeatedly opening files it didn’t need and calling tools it never used. Another developer ran 430 million tokens in a single day across Codex and Claude Code. At full API rates, that would have been $280. With prompt caching, actual cost: $9.

That’s not a typo. The difference between efficient and inefficient Claude Code usage is 30x or more. The tool doesn’t cost too much. Most people just don’t know how to control the spend.
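Here is the arithmetic behind those figures, as a quick sketch. Every input comes from the two examples above; nothing else is assumed:

```python
# Developer A: 926 sessions over 33 days, $1,619 total.
total_spend, sessions, days = 1619.0, 926, 33
per_session = total_spend / sessions
per_day = total_spend / days

# Developer B: the same day of usage priced with and without prompt caching.
full_rate_cost, cached_cost = 280.0, 9.0
savings_ratio = full_rate_cost / cached_cost

print(f"per session: ${per_session:.2f}, per day: ${per_day:.2f}")
print(f"caching made the same usage {savings_ratio:.0f}x cheaper")
```

The per-session average looks harmless. The daily total is what hurts.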

The Pricing Comparison That Matters

Cursor Pro: $20/month. Unlimited Tab autocomplete, unlimited Auto completions, plus $20 of frontier model usage with optional spend caps. Predictable.

GitHub Copilot Pro: $10/month. Unlimited real-time code suggestions, 300 premium requests, extras at $0.04 each. Transparent.

Claude Code Pro: $20/month. Variable token consumption based on message length, file attachments, conversation length, model choice, and feature usage. 5-hour sliding windows. Weekly caps. Separate limits for Opus vs Sonnet. Extra usage needed for premium features. Opaque.

The first two tools tell you what you’ll pay. Claude Code tells you what you might pay.
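Predictable pricing means you can write the bill as a function. A minimal sketch for Copilot Pro, using only the numbers listed above; the function name is illustrative, and amounts are kept in cents to avoid floating-point drift:

```python
COPILOT_BASE_CENTS = 1000      # $10/month
COPILOT_INCLUDED_REQUESTS = 300
COPILOT_OVERAGE_CENTS = 4      # $0.04 per extra premium request
CURSOR_PRO_CENTS = 2000        # $20/month


def copilot_pro_monthly(premium_requests: int) -> float:
    """Monthly Copilot Pro bill in dollars for a given premium request count."""
    extra = max(0, premium_requests - COPILOT_INCLUDED_REQUESTS)
    return (COPILOT_BASE_CENTS + extra * COPILOT_OVERAGE_CENTS) / 100


# Request count at which Copilot Pro costs the same as Cursor Pro's base price.
breakeven = COPILOT_INCLUDED_REQUESTS + (
    (CURSOR_PRO_CENTS - COPILOT_BASE_CENTS) // COPILOT_OVERAGE_CENTS
)

print(copilot_pro_monthly(500), breakeven)
```

There is no equivalent function for Claude Code Pro, because the inputs (window limits, weekly caps, per-model limits) aren't published as prices.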

How to avoid this mistake: If you’re exploring Claude Code, start with API pay-as-you-go instead of a subscription. Set a hard spending cap at $10. Run it for one weekend on a real project. Track your token usage with tools like ccusage or Claude-Code-Usage-Monitor. Only upgrade to Pro or Max after you know your actual burn rate. If API costs remain prohibitive, Anthropic SDK-compatible models like MiniMax M2.7 deliver competitive coding performance at $0.30 per million input tokens.
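Once you have a weekend of real numbers, projecting a monthly burn rate takes a few lines. This is a rough sketch: the sample spend, session counts, and function name are all hypothetical, and it assumes your future sessions resemble the ones you measured:

```python
WEEKS_PER_MONTH = 52 / 12


def projected_monthly_spend(
    measured_spend: float, measured_sessions: int, sessions_per_week: float
) -> float:
    """Extrapolate API spend per month from a small measured sample."""
    if measured_sessions <= 0:
        raise ValueError("need at least one measured session")
    per_session = measured_spend / measured_sessions
    return per_session * sessions_per_week * WEEKS_PER_MONTH


# Hypothetical weekend: $6.40 across 4 sessions, planning 3 sessions a week.
monthly = projected_monthly_spend(6.40, 4, 3)
print(f"projected: ${monthly:.2f}/month")
```

Compare the result against the $20, $100, and $200 tiers before subscribing. Treat it as a rough signal only, since subscription limits are measured in usage windows rather than dollars.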

Mistake #2: Using Claude Code for Everything

This is the single most expensive habit in the AI coding tool space right now.

Claude Code has no inline autocomplete. No ghost text. No tab-to-accept suggestions. No keystroke-by-keystroke predictions as you type. Cursor and Copilot are built around your editor buffer. You type, they predict, you accept or reject in the flow of writing code. Claude Code’s VS Code extension exists, but it’s a sidebar-and-diff experience. You prompt, Claude responds with changes, you review diffs, you accept. That’s a fundamentally different rhythm.

For the boring 80% of development work (renaming props, adjusting Tailwind classes, fixing small test failures, updating serializers, writing CRUD endpoints), inline autocomplete is faster than an agentic conversation. Claude Code forces you into prompt-then-approve cycles for tasks that Copilot handles with a single Tab keypress.

The pattern that’s emerged across developer communities in 2026 tells the story: the most productive developers use two tools. Cursor or Copilot for the 80% of work that’s editing. Claude Code for the 20% that needs an agent. Trying to use Claude Code for everything is how you burn through $200/month while being slower than someone with a $10 Copilot subscription.

How to avoid this mistake: Split your tools. Cursor or Copilot as your daily driver for editing, completions, and small fixes. Claude Code only for complex multi-file refactoring, test generation across modules, debugging sessions that require terminal access, and MCP-powered automation workflows. If you can describe the task in five words or fewer, you probably don’t need an agent for it.
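The five-word rule is crude, but you can write it down. A toy sketch; the function name and example tasks are made up, and a real decision would weigh more than word count:

```python
def needs_agent(task_description: str, max_words: int = 5) -> bool:
    """Five-word rule: short task descriptions go to the editor, not the agent."""
    return len(task_description.split()) > max_words


tasks = [
    "rename the userId prop",
    "migrate the billing module from REST to GraphQL and update the tests",
]
for task in tasks:
    tool = "Claude Code" if needs_agent(task) else "Cursor or Copilot"
    print(f"{tool}: {task}")
```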

Mistake #3: Leaving Permissions on Default

By default, Claude Code asks permission before every file edit and shell command. Anthropic’s own March 2026 engineering report found that users approve 93% of permission prompts. Read that number again: 93 out of 100 interruptions are unnecessary friction that you’re clicking through on autopilot.

This creates a paradox. You’re running a tool marketed as an autonomous agent, but your actual experience is clicking “approve” every 30 seconds. One developer said the interruptions would “drive you insane.” Another reported that Claude Code started suggesting he stop coding because it was getting late. The safety-first approach sometimes crosses from protective into patronizing.

Five permission tiers exist, and most developers don’t know about them:

Default: Asks before every file edit, command, or tool call. Where everyone starts. Where almost everyone stays.

AcceptEdits: Auto-accepts file edits but still asks for shell commands. The sweet spot for routine refactoring and test writing.

Plan: Read-only. Claude analyzes the codebase and proposes a plan, but doesn’t edit files or run commands until you approve it. Good for exploratory work.

Auto: Uses a classifier to auto-approve safe actions. Research preview. Reduces friction significantly, but can still escalate for irreversible actions.

BypassPermissions: No approvals at all. Only for isolated environments like CI/CD pipelines or ephemeral containers. Dangerous anywhere else.

Now, the safety friction isn’t theater. Anthropic documented real cases where overeager agents attempted to delete remote git branches, search for credentials, and run production-adjacent database commands. These were caught by the classifier or permission prompts. The risk is real.

But most developers never move past Default, which means they’re experiencing maximum friction for minimum benefit. They approve 93% of prompts automatically, which means those prompts aren’t actually protecting them. They’re just slowing them down.
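To see what that means over an hour, run the numbers. A back-of-the-envelope sketch, taking the "approve every 30 seconds" pace above literally, which is an illustrative assumption rather than a measured rate:

```python
PROMPT_INTERVAL_SECONDS = 30   # illustrative pace, not a measurement
APPROVAL_RATE = 0.93           # the approval share cited above

prompts_per_hour = 3600 // PROMPT_INTERVAL_SECONDS
rubber_stamped = round(prompts_per_hour * APPROVAL_RATE)
meaningful = prompts_per_hour - rubber_stamped

print(f"{prompts_per_hour} prompts/hour: "
      f"{rubber_stamped} approved on autopilot, {meaningful} that mattered")
```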

How to avoid this mistake: Move to AcceptEdits for your first week. Get comfortable with it. Then try Auto mode for low-risk tasks (formatting, test writing, documentation). Keep Default or Plan only for critical production work. And combine permission modes with hooks (pre/post-action scripts) for defense in depth instead of relying on the interrupt-and-approve loop alone.
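As a starting point, here is a sketch that prints a project settings file with AcceptEdits as the default mode. The key names (`permissions`, `defaultMode`, `allow`, `deny`) and the rule strings reflect my understanding of Claude Code's settings format and are assumptions to verify against the current documentation. The specific allow and deny rules are hypothetical examples:

```python
import json

# Assumed schema: check key names and rule syntax against the current docs.
settings = {
    "permissions": {
        "defaultMode": "acceptEdits",          # auto-accept file edits
        "allow": ["Bash(npm run test:*)"],     # hypothetical: let tests run unprompted
        "deny": ["Bash(git push:*)"],          # hypothetical: never push without you
    }
}

# Paste the output into your project's settings file (commonly .claude/settings.json).
rendered = json.dumps(settings, indent=2)
print(rendered)
```

Printing the JSON instead of writing it to disk is deliberate. Review it before it touches a real project.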

Mistake #4: Running Agent Teams Without Understanding the Token Math

Agent Teams is Claude Code’s research preview feature for multi-agent parallel execution. Spawn multiple agents (a security reviewer, a performance analyst, a test coverage checker) working simultaneously on the same codebase.

It sounds incredible. The token economics are brutal.

A 5-agent session burns roughly 5x the tokens of solo use. In plan mode, it’s closer to 7x due to coordination overhead between agents. Anthropic stress-tested 16 agents building a C compiler from scratch. The result compiled the Linux kernel. The API cost was approximately $20,000.

Even on Max plans, developers report hitting 5-hour rate limits within 30 minutes when running Agent Teams. The context window for each agent is independent (each gets up to 1M tokens), so you’re paying for parallel context that multiplies fast.
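The multiplication is worth writing out before you spawn a team. A rough sketch: the source figures cover only the 5-agent case (about 5x solo, about 7x in plan mode), so scaling linearly to other team sizes is my assumption, and the function name is illustrative:

```python
def team_tokens(solo_tokens: int, agents: int = 5, plan_mode: bool = False) -> int:
    """Estimated tokens for an Agent Teams run.

    Assumes cost scales linearly with agent count, and that plan mode adds
    the same 7/5 coordination overhead reported for a 5-agent session.
    """
    tokens = solo_tokens * agents
    if plan_mode:
        tokens = tokens * 7 // 5
    return tokens


solo = 200_000  # hypothetical tokens for the same task with one agent
print(team_tokens(solo))                  # 5 agents
print(team_tokens(solo, plan_mode=True))  # 5 agents in plan mode
print(team_tokens(solo, agents=3))        # a smaller team
```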

The gap between “Agent Teams exist” and “Agent Teams are economically viable for daily use” is massive. For most workflows, a single agent or subagents handle the job. Two to three agents is the practical ceiling for balancing speed gains against cost.

And there’s a subtler trap. Each session starts fresh unless you maintain context through CLAUDE.md files. The CLAUDE.md system works, but there’s a sweet spot around 100 lines of project instructions. Write more than that and Claude starts ignoring the less important rules. Write less and it misses critical context. Getting this right takes iteration that most developers skip.
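A quick length check catches the obvious cases. This is a minimal sketch: the 100-line target comes from the paragraph above, but the tolerance of 25 lines and the choice to skip blank lines are my assumptions:

```python
def claude_md_status(text: str, target: int = 100, tolerance: int = 25) -> str:
    """Classify a CLAUDE.md by its count of non-blank lines."""
    count = sum(1 for line in text.splitlines() if line.strip())
    if count < target - tolerance:
        return f"too short ({count} lines)"
    if count > target + tolerance:
        return f"too long ({count} lines)"
    return f"in range ({count} lines)"


sample = "\n".join(f"- rule {i}" for i in range(100))
print(claude_md_status(sample))
```

Line count is a proxy. The real test is whether Claude still follows the rules near the bottom of the file.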

How to avoid this mistake: Start with single-agent workflows. Get good at them. Learn what burns tokens (file exploration, tool calls, extended thinking) and what doesn’t (cached context, concise prompts). Only introduce Agent Teams for tasks where parallel exploration genuinely adds value: code reviews with multiple focus areas, large-scale refactoring across independent modules, or multi-component feature builds. Monitor your token usage after every Agent Teams session and compare it against what a single agent would have cost.

Mistake #5: Ignoring the Lock-In Risk

Claude Code runs Claude models exclusively. Opus 4.6, Sonnet 4.6, Haiku 4.5. Enterprise customers can route through AWS Bedrock or Google Vertex, but that’s deployment flexibility, not model flexibility. You’re inside the Claude ecosystem.

Cursor lets you switch between Claude, GPT, Gemini, xAI, and its own models. GitHub Copilot supports multiple model families including Claude and Gemini. Both tools give you arbitrage if a model improves or a provider changes pricing. Claude Code gives you dependency.

This matters because 2026 is not a stable year for AI. Model quality shifts. Pricing changes. New competitors emerge. SWE-bench leadership has already changed hands multiple times. If Anthropic raises Max plan pricing, changes the extra-usage terms, or another model leapfrogs Opus on the tasks you care about, Claude Code makes migration painful because the tool and the model family are fused.

The developer who invested months in CLAUDE.md configurations, custom MCP workflows, and permission mode setups has built an environment that only works with Claude. The more you invest in the ecosystem, the harder it becomes to leave.

How to avoid this mistake: Keep your critical workflow logic outside Claude Code. Store project rules and conventions in standard documentation that any AI tool can consume, not just CLAUDE.md-formatted instructions. Use MCP servers that follow the open protocol standard, so they’re portable if you switch tools. And maintain at least a working familiarity with one alternative (Cursor, Copilot, or Cline) so that switching costs don’t compound to the point where you’re trapped.
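One way to keep rules portable is to hold a single source file and generate each tool's instruction file from it. A sketch under assumptions: `docs/conventions.md` is a hypothetical source path, and the target file names are my understanding of where each tool reads instructions, so confirm them for the tools you actually use:

```python
def render_tool_files(rules_markdown: str) -> dict[str, str]:
    """Map tool-specific instruction paths to content built from one source."""
    header = "<!-- Generated from docs/conventions.md. Edit that file instead. -->\n"
    body = header + rules_markdown.strip() + "\n"
    return {
        "CLAUDE.md": body,                        # Claude Code
        "AGENTS.md": body,                        # assumed: generic agent convention
        ".github/copilot-instructions.md": body,  # assumed: GitHub Copilot
    }


rules = "# Conventions\n\n- Use TypeScript strict mode.\n- Tests live next to source files.\n"
for path, content in render_tool_files(rules).items():
    print(path, len(content.splitlines()), "lines")
```

The function returns the files instead of writing them, so you can diff the output before committing anything.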

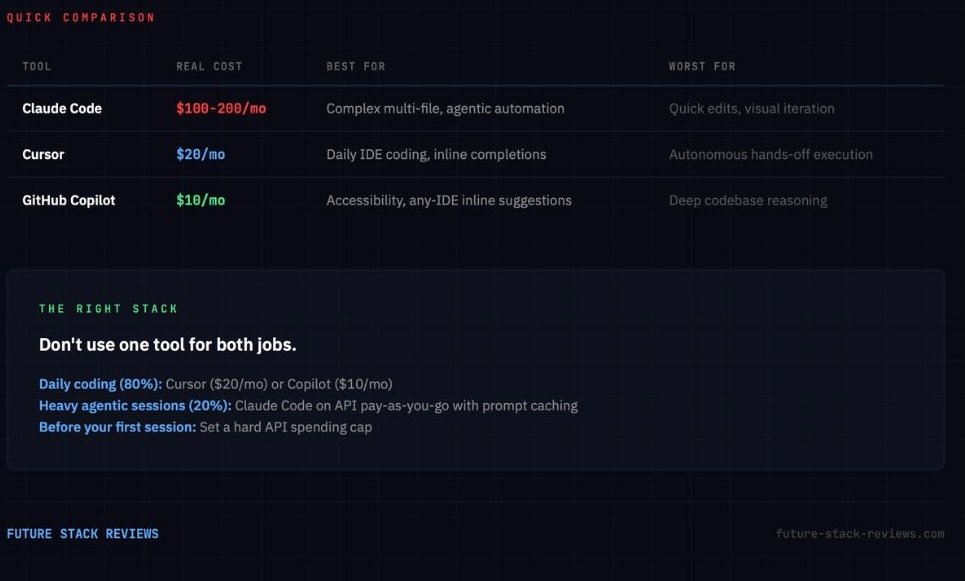

Claude Code vs Cursor vs GitHub Copilot

Three different tools, three different philosophies. Quick orientation:

Claude Code is a terminal agent. It reads, executes, fixes, and iterates autonomously. Best for complex multi-file tasks, large refactors, and automation workflows. Worst for quick edits and visual iteration. Starts at $20/month but real daily use runs $100-200.

Cursor is an AI-native IDE. Inline predictions, tab completion, Composer for multi-file editing, background agents. Best for daily coding in a polished editor experience. Worst for autonomous, hands-off execution. $20/month with predictable limits.

GitHub Copilot is a multi-IDE extension. Inline suggestions in any editor you already use, plus a growing agent mode. Best for accessibility and teams in the GitHub ecosystem. Worst for deep codebase reasoning. $10/month.

The productive pattern: combine them. One developer’s breakdown that represents the community consensus: Cursor or Copilot for the daily 80%. Claude Code for the heavy 20%. Nobody who uses AI coding tools seriously in 2026 relies on just one. For a full breakdown of all 12 coding assistants and 3 vibe coding platforms, see our Best AI Coding Assistant 2026 guide.

If your starting point is replacing GitHub Copilot specifically, our GitHub Copilot Alternatives guide compares seven tools with real cost data and a privacy matrix.

Who Should Use Claude Code

It’s the right tool if: You’re a senior developer or CLI-native engineer on large codebases. You already live in the terminal. You need multi-file refactoring, test generation, and automation. You understand token economics and will actively manage your CLAUDE.md, permission modes, and session lengths. You’re willing to spend $100-200/month because the time savings justify it.

Walk away if: You’re a frontend developer doing React/Tailwind/UI iteration. An indie hacker on a tight budget who needs predictable costs. A junior developer who learns faster from in-editor feedback loops than agent delegation. A team whose finance department requires monthly billing certainty. Anyone whose fingers reach for the mouse more often than the command line.

The stack most developers should run: Daily coding with Cursor ($20/mo) or Copilot ($10/mo). Heavy sessions with Claude Code on API pay-as-you-go, prompt caching enabled, for the 2-3 complex tasks per week that justify an agentic approach. Set a hard API spending cap before your first session.

The Verdict

Claude Code is the most technically powerful AI coding tool available in 2026. The SWE-bench scores are real. The MCP ecosystem is a genuine competitive moat. The agentic workflow, when applied to the right problems, produces results that no IDE-based tool can match.

It’s also a tool that punishes careless adoption harder than any other in its category. The five mistakes above aren’t edge cases. They’re the default path for developers who subscribe to Pro, open their terminal, and start prompting without understanding what they’ve signed up for.

The developers who get Claude Code right treat it as a specialist. Terminal agent for the hard problems. Editor tool for everything else. Managed token budgets. Configured permissions. Portable workflows. That discipline is the difference between the developer who calls it “most loved” and the one who calls it “basically unusable.”

Both are using the same tool. Only one of them is using it right.

For a direct comparison between Claude Code and the leading AI-native IDE, read our Cursor vs Claude Code breakdown.

For OpenAI’s answer to Claude Code, read our OpenAI Codex review covering the $200 cloud trap, the shared 5-hour budget, and Tier B hands-on findings as of May 2026.

For xAI’s terminal coding agent in early beta, read our Grok Build CLI review covering the self-verification loop that doesn’t stop at file creation, three memory layers with different lifecycles, and a verdict of third tool, not first.