Last updated: May 29, 2026

Update, May 2026: Anthropic has since released Claude Opus 4.8, the direct successor to 4.7. If you are making a buying decision today, read the Claude Opus 4.8 review next. The newer model holds the same $5 / $25 price, improves honesty in agentic and coding work, and is a worse autonomous operator than 4.7. Everything below still explains the trust asymmetry that runs through the 4.x line.

Anthropic shipped Claude Opus 4.7 on April 16, 2026. The announcement arrived wrapped in the usual language of frontier releases: better agentic coding, sharper vision, verified outputs, longer task rigor. Twenty-eight partner companies sent in testimonials within hours. Migration guides landed across the developer press by sundown.

Most of the coverage that followed answered one question: what’s new. Almost none answered a harder question: what does the evidence say about whether the new thing works as advertised?

This review takes that harder question seriously. We read the five official Anthropic documents side by side, pulled the System Card apart page by page, assembled and rated 19 peer-reviewed studies on LLM coding assistants, tracked what independent developers saw when they ran the model themselves, mapped the regulatory shadow falling across the release in Europe, and noted what the model said about itself inside the System Card.

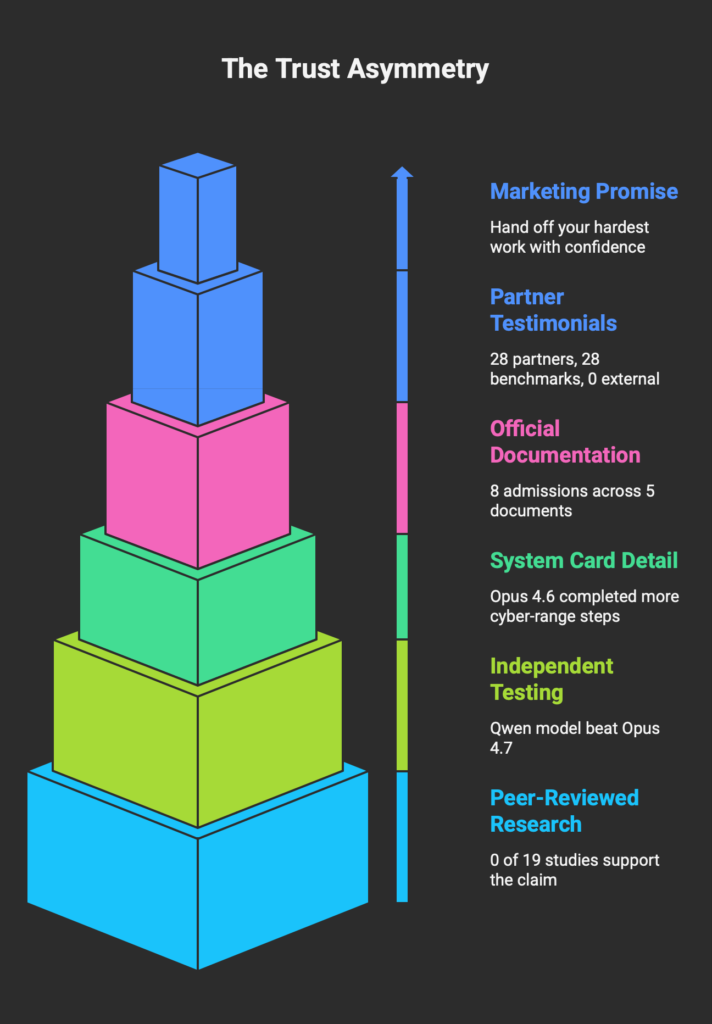

The picture that emerges is not a scandal. It is something more interesting: a trust asymmetry between what the marketing says on one end and what Anthropic’s own documents admit on the other.

BRIEFING SUMMARY

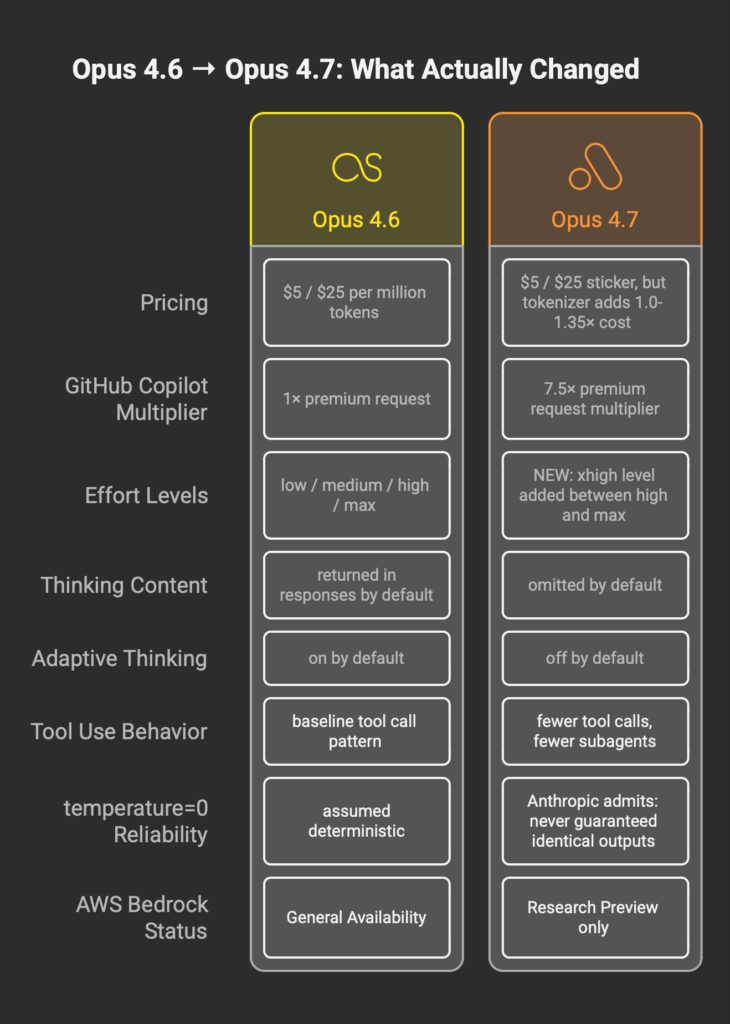

Released: April 16, 2026. Price held at $5 / $25 per million input / output tokens. New xhigh effort level between high and max. Vision input scaled to 2,576px. Available via API, Amazon Bedrock (research preview), Google Vertex AI, and Microsoft Foundry.

The question worth asking: Is the “hand off your hardest work” claim supported by Anthropic’s own documentation, by peer-reviewed academic literature, or by independent developer testing?

What this review found across six evidence layers:

- Anthropic’s five official release documents contain eight admissions that weaken the marketing narrative.

- Zero of 19 peer-reviewed studies provide High support for the “hand off hardest work” claim.

- One independent tester reported a 21GB local model beating Opus 4.7 on his benchmark.

- The UK AI Security Institute found Opus 4.6 completed more cyber-range steps than Opus 4.7.

- Effective cost rises by up to 1.35× from the new tokenizer alone, and GitHub Copilot bills each Opus 4.7 call at a 7.5× premium-request multiplier.

- The creator of Vue.js publicly switched to OpenAI during the launch window.

The short recommendation: Opus 4.7 is probably better than Opus 4.6. Whether it is ready for the confident handoff Anthropic is asking for depends on how much evidence you require before trusting a production dependency. The rest of this article explains what the evidence actually says.

Why This Release Matters More Than Its Benchmark Numbers

The Good Enough Wall

Frontier language models now cluster within a few points on the benchmarks that used to separate them. GPQA Diamond sits near 94% for Opus 4.7, GPT-5.4 Pro, and Gemini 3.1 Pro. SWE-bench Verified spreads stay inside a narrow band. MMLU-Pro has been saturated for two model cycles. Vellum called GPQA Diamond “approaching saturation.” TNW went further and dismissed the inter-model gap as effectively noise.

What this means operationally: the raw intelligence axis is no longer where competitive advantage lives. Differentiation has shifted down the stack, toward tool use, memory, agent reliability, integration breadth, cost per useful output, and what structural analyses call “hands” rather than “brains.”

The fact that three frontier labs landed within a few points of each other on every public benchmark says something about the physics of the current training regime. Adding compute still moves the needle, but not far enough, or cheaply enough, to produce the kind of gap that used to justify a flagship release.

INTEL

When benchmarks converge, the product that wins is the one with the best distribution, the cleanest integration, the lowest real-world cost per delivered outcome, and the fewest failure modes at scale. Opus 4.7 is being judged on all four axes whether or not its launch post frames it that way.

The Real Battleground

Agentic coding is the only domain where the competitive frontier still moves visibly. Anthropic’s Claude Code generated roughly $2.5B ARR in under a year, though the real-world deployment picture is more textured than the revenue number suggests. Cursor, Factory, Vercel, GitHub Copilot, and a dozen smaller players built product categories on top of the Claude API. When Anthropic says Opus 4.7 improves at agentic software tasks, it is describing the only narrative that can still support a commercial premium at the high end of the market.

This is why every partner testimonial in the launch post measured the same axis: how many more coding tasks per hour, how many fewer tool errors, how many longer autonomous sessions without human intervention. Anthropic is not competing on general intelligence any longer. The competition has moved to how many billable engineering hours the API can offset.

Standalone Lab Versus Platform

Anthropic does not own its own cloud. OpenAI rents Azure but lives inside Microsoft’s enterprise distribution. Google runs Gemini natively on the world’s largest inference fleet. Anthropic, uniquely among the three, has to land sales on infrastructure it does not control.

The Opus 4.7 availability matrix is a direct response. The model shipped on AWS Bedrock, GCP Vertex AI, and Microsoft Foundry simultaneously. Only Anthropic among the three major labs runs on all three major clouds. Multi-cloud here is less a flex than a survival move: distribution on every platform is required because losing any one of them is not recoverable.

One availability footnote matters. AWS Bedrock lists Opus 4.7 as a “research preview” rather than general availability. Most launch-day coverage missed this distinction.

The Capital Overhang

Anthropic closed its last round near $380B. Reports put annualized revenue close to $30B. Fortune 10 companies make up a meaningful slice of the enterprise base. Justifying that valuation on current revenue requires enterprise expansion at a pace that Opus 4.7 must deliver, or help deliver, within two quarters.

Read the release through this frame and every design choice shifts meaning. The new xhigh effort level is a revenue lever. The retained headline price is a perception lever. The System Card’s extensive alignment section is a regulatory lever. The Mythos framing is an enterprise-tier lever. Nothing about the launch was shaped accidentally.

What Anthropic Is Asking You to Believe

The Four Core Claims

Strip the press kit of partner quotes and benchmark charts and four core marketing claims remain. Each is operational, each is testable, and each is worth tracking against the evidence.

- Hand off your hardest work with confidence. Opus 4.7 is positioned as ready to receive delegated work without human verification loops.

- The model verifies its own outputs. Self-checking is framed as a built-in capability, not an interactive workflow.

- Long-running task rigor. Multi-hour autonomous execution is presented as reliable.

- Quantified productivity gains. Partner testimonials cite percent-scale improvements across coding, analysis, and agent tasks.

These are not casual framings. They are the commercial pitch the launch rests on.

The 28-Partner Testimony Structure

Read the Anthropic announcement carefully and a pattern emerges: every partner benchmark cited is an internal benchmark, and almost no two partners measured the same thing.

- Hex reported that low-effort Opus 4.7 is roughly equivalent to medium-effort Opus 4.6.

- Vercel cited a 13% resolution lift on their internal bench.

- Rakuten reported 3× on Rakuten-SWE-Bench, their proprietary benchmark.

- Factory measured 10 to 15% improvement on agent tasks.

- CodeRabbit cited +10% recall on code review tasks.

- Databricks reported 21% fewer errors on OfficeQA Pro, an internal eval.

- Notion cited a 14% improvement with one-third the tool errors.

- Box, through a quote 9to5Mac captured but the launch post omitted, cited a 56% reduction in model calls.

None of these numbers are verifiable outside the partner’s own infrastructure. Each partner measured a different thing. The aggregate impression of “broad improvement” depends on reading the quotes quickly and not asking what they are comparing against.

DATA CALLOUT — The Partner Benchmark Structure

| PARTNER | METRIC | INTERNAL? |
|---|---|---|
| Hex | Effort-equivalence claim | Yes |
| Vercel | Resolution lift (13%) | Yes |
| Rakuten | Rakuten-SWE-Bench (3×) | Yes |
| Factory | Agent task lift (+10-15%) | Yes |
| CodeRabbit | Code review recall (+10%) | Yes |
| Notion | Task improvement (+14%) | Yes |
| Databricks | OfficeQA Pro errors (-21%) | Yes |
| Box | Model calls reduction (-56%) | Yes |
| Cursor | CursorBench (70% vs 58%) | Yes |
| Harvey | BigLaw Bench (90.9%) | Yes |
| XBOW | Visual acuity (98.5% vs 54.5%) | Yes |

External, reproducible, independent benchmarks: 0

The Framework for Evaluation

The rest of this article reads the same release through six evidence layers: Anthropic’s own documents, the System Card, peer-reviewed academic literature, independent developer testing, the European regulatory context, and the model’s own voice inside its System Card. The four claims above are the reference points. Each layer gets weighed against them.

Reading the Anthropic Documents Together

Individually each Opus 4.7 document looks normal. Read them in parallel and a different text emerges. Eight admissions surface that cut against the launch narrative.

Admission 1: The Tokenizer Tax

Anthropic’s migration guide notes that the Opus 4.7 tokenizer produces 1.0 to 1.35× as many tokens as Opus 4.6 for the same input. The same prompt can therefore cost more even though the per-token price is unchanged.

DecodeTheFuture ran a real-world test. Their single-task cost rose from $0.099 on Opus 4.6 to $0.232 on Opus 4.7 under a comparable high-effort configuration (xhigh on Opus 4.7). That is a 134% increase on one task, well past the 35% upper bound of Anthropic’s stated tokenizer range.
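The arithmetic behind these figures is easy to check yourself. The sketch below prices a request at the $5 / $25 sticker rate, projects what the same workload costs if it tokenizes 1.35× larger, and reproduces the 134% figure from DecodeTheFuture’s two task costs. The function names are illustrative, and applying the multiplier to output tokens as well as input tokens is an assumption, not something Anthropic’s guide states.

```python
# A sketch of per-request cost under the $5 / $25 per-million-token list price.
# Function names are illustrative, not part of any Anthropic SDK.

INPUT_PRICE_PER_MILLION = 5.00    # USD per million input tokens
OUTPUT_PRICE_PER_MILLION = 25.00  # USD per million output tokens


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one request at the sticker rate."""
    return (
        input_tokens * INPUT_PRICE_PER_MILLION
        + output_tokens * OUTPUT_PRICE_PER_MILLION
    ) / 1_000_000


def projected_cost(input_tokens: int, output_tokens: int, multiplier: float) -> float:
    """Cost of the same content if the new tokenizer emits `multiplier` times
    as many tokens. Assumption: the multiplier applies to input and output alike."""
    return request_cost(
        round(input_tokens * multiplier),
        round(output_tokens * multiplier),
    )


def percent_increase(old_cost: float, new_cost: float) -> float:
    """Relative change from old_cost to new_cost, in percent."""
    return (new_cost - old_cost) / old_cost * 100


if __name__ == "__main__":
    base = request_cost(10_000, 2_000)           # 0.10 USD
    ceiling = projected_cost(10_000, 2_000, 1.35)  # 0.135 USD
    print(f"tokenizer ceiling: {percent_increase(base, ceiling):.0f}%")     # 35%
    print(f"observed task: {percent_increase(0.099, 0.232):.0f}%")          # 134%
```

The tokenizer alone tops out at a 35% rise in this model, so most of the observed 134% likely comes from something else, such as longer outputs at a higher effort level.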

GitHub Copilot is running a separate multiplier. Opus 4.7 access on Copilot consumes 7.5× premium request units per call according to GitHub’s own changelog. Several independent users posted screenshots confirming the multiplier change. The headline “same price” claim holds only if “price” is defined as the per-million-token sticker rate and every downstream cost is excluded.

WARNING — Same Price Is Not the Same Cost

If your cost model assumes per-request cost stays fixed because the per-token price did not change, the Opus 4.7 migration will silently raise your bill by anywhere from 0% to the 134% observed in one real-world test, depending on content type and effort level. Budget re-planning is mandatory before production cutover. Measure first. Switch second.

Admission 2: The Temperature=0 Retcon

Twice in the Opus 4.7 migration guide, Anthropic includes a sentence with implications most readers will miss: temperature=0 never guaranteed identical outputs on any Claude model. Past behavior was probabilistic under the hood even when the parameter suggested otherwise.

This admission rewrites the terms of every regression test, every A/B harness, every determinism assumption, and every reproducibility claim that any team built on top of Claude models over the past two years. If the determinism was never there, then the change is not that Opus 4.7 is less reliable. The change is that the illusion of determinism is now retired, and the entire testing infrastructure downstream needs a second look.
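If temperature=0 never guaranteed identical outputs, exact-string comparisons in a regression suite were always fragile. One common alternative is to sample the same prompt several times and assert a property of each output, for example that it parses as JSON with the expected fields, rather than byte-for-byte equality. The sketch below is a minimal version of that idea. `fake_generate` is a hypothetical stand-in for a real model call, and the default 100% pass rate is an assumption you would tune per test.

```python
import itertools
import json
from typing import Callable

# Hypothetical stand-in for a model call: same meaning, varying surface text,
# which is exactly what an exact-match assertion would reject.
_variants = itertools.cycle([
    '{"status": "ok", "count": 3}',
    '{"count": 3, "status": "ok"}',
])


def fake_generate(prompt: str) -> str:
    return next(_variants)


def is_expected_payload(output: str) -> bool:
    """Property check: valid JSON carrying the fields we care about."""
    try:
        return json.loads(output) == {"status": "ok", "count": 3}
    except json.JSONDecodeError:
        return False


def property_regression(
    generate: Callable[[str], str],
    prompt: str,
    validate: Callable[[str], bool],
    samples: int = 5,
    min_pass_rate: float = 1.0,
) -> bool:
    """Sample the prompt several times; pass if enough outputs satisfy `validate`."""
    passes = sum(1 for _ in range(samples) if validate(generate(prompt)))
    return passes / samples >= min_pass_rate


if __name__ == "__main__":
    first, second = fake_generate("summarize"), fake_generate("summarize")
    print("exact match:", first == second)  # False: the strings differ
    print("property check:", property_regression(fake_generate, "summarize", is_expected_payload))
```

The design choice is to assert what the output means, not how it is spelled. Lowering `min_pass_rate` turns a hard gate into a tolerance band for behavior that is genuinely stochastic.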

Two questions follow. Why is the admission appearing now, on this release? And what else in the new model’s behavior was characterized more confidently than the evidence justified?

Admission 3: The Silent Changes

The migration guide lists several default behaviors that changed with no new API parameter to signal the change. Thinking content is now omitted from responses by default. Adaptive thinking is off by default. The prefill behavior removed in 4.6 stays removed. Opus 4.7 uses fewer tool calls and fewer subagents than 4.6 for equivalent workloads.

Read in isolation each change is defensible. Read in aggregate, the agentic framing of the launch post and the actual default behavior of the model are asking you to trust two different models. The launch post implies more tool use, more subagent delegation, longer autonomous runs. The default configuration delivers less of each until the developer explicitly turns them back on.

Admission 4: Effort as Primary Control

Anthropic’s official guidance states that effort is more important for Opus 4.7 than for any prior Opus model. The same guidance notes that effort controls may trade off model intelligence against latency and cost. The new xhigh level, slotted between high and max, exists because those two tiers alone were no longer sufficient to expose the full capability curve.

Translated: the model has a wider performance gradient than prior Opus generations, and extracting the top of the gradient requires the higher effort tiers, which cost more. The headline price is held constant. The price of getting the model’s best output is not.

Admission 5: Retrospective Score Changes in the System Card

The System Card contains an unusual pair of retroactive score updates. Opus 4.6’s CyberGym score was revised upward from 0.67 to 0.74, and its Firefox 147 score from 14.4% to 22.8%. The stated reason: Anthropic updated harness parameters to better elicit capabilities in the older model.

Both revisions benefit the older model. The retrospective improvement on Opus 4.6 narrows the measured gap between 4.6 and 4.7 on exactly the benchmarks where Anthropic is asking customers to upgrade. The methodology may well be sound. The timing is worth flagging.

Admission 6: Training Had Accidental Supervision

Section 4.2 of the System Card reports that 7.8% of Opus 4.7 training episodes experienced what the document describes as “accidental chain-of-thought supervision” due to a technical error. The implication: a non-trivial fraction of the training signal was different from what the training regime was designed to deliver.

The System Card treats this as a noted but acceptable deviation. For readers trying to reason about why Opus 4.7 behaves differently from Opus 4.6 in ways that are hard to explain through architecture changes alone, the 7.8% number is worth holding.

Admission 7: Bedrock Research Preview Status

The Models overview page carries a small note that most coverage missed: Opus 4.7 on Amazon Bedrock is in research preview, not general availability. TNW, All About AI, and several other outlets described the Bedrock launch as fully available. Anthropic’s own documentation does not.

For enterprise buyers on AWS who require GA status in their procurement process, this matters. The model is accessible. It is not yet classified as a GA dependency.

Admission 8: Less Capable Than Mythos, Openly

Anthropic’s announcement places Opus 4.7 below Claude Mythos Preview in capability terms. Mythos is available only to a small group through the Project Glasswing initiative. Anthropic openly states that Opus 4.7 had its cyber capabilities differentially reduced during training.

The resulting commercial structure is unusual. The public flagship is positioned as the second-best model Anthropic has. The best model is held behind a private access program. Gizmodo’s framing of Opus 4.7 as a “watered-down version” of a hidden frontier is unfair in tone and probably accurate in substance.

DATA CALLOUT — The 8 Admissions, Side by Side

| MARKETING FRAME | OFFICIAL ADMISSION |
|---|---|
| “Same price” | Tokenizer up to 1.35× input; real-world 134% observed; 7.5× Copilot multiplier |
| Reliable determinism | temp=0 “never guaranteed identical outputs” |
| Stronger agent behavior | Fewer tool calls, fewer subagents by default |
| Consistent quality | “Effort more important than any prior Opus” |
| Clear benchmark gains | 4.6 scores retroactively revised upward |
| Clean training | 7.8% of episodes had accidental supervision |
| Available on AWS | Bedrock = research preview |
| Flagship model | Openly below Mythos, which is not generally available |

What 19 Peer-Reviewed Studies Say About the Four Core Claims

The Method

To test Anthropic’s four core claims against external evidence, we queried Consensus Pro for peer-reviewed studies on LLM coding assistants published between 2022 and early 2026. Nineteen papers met the quality threshold. We then ran the full corpus through Elicit for structured extraction, rating each paper’s support for each claim on a High / Medium / Low / None scale with a brief rationale per cell.

The result is a 19 × 4 matrix of external academic evidence against the release’s four core commercial claims. It is the single most important evidence layer in this review, and it is the one layer no other English-language Opus 4.7 article has run.

Claim-by-Claim

DATA CALLOUT — The Evidence Gap Matrix

| ANTHROPIC CLAIM | HIGH | MEDIUM | LOW | NONE |
|---|---|---|---|---|
| Hand off hardest work | 0/19 | 0/19 | 11/19 | 5/19 |
| Verifies own outputs | 1/19 (*) | 2/19 | 10/19 | 3/19 |
| Long-running task rigor | 0/19 | 3/19 | 4/19 | 9/19 |
| Productivity gain | 0/19 | 4/19 | 3/19 | 9/19 |

(*) Fakhoury 2024: requires a test-driven interactive workflow, not LLM self-verification alone.

Longest task evaluated in any RCT in the corpus: 15 minutes.

Hand off your hardest work with confidence. Zero peer-reviewed papers in the corpus provide High or Medium support for this claim. Eleven papers provide Low support, in most cases with explicit caveats that delegation still requires human oversight. Five provide no support at all.

The model verifies its own outputs. One paper provides High support: Fakhoury 2024, an RCT on test-driven interactive code generation. The caveat is decisive. The High support applies only when the LLM is wrapped in a workflow where the user validates tests before the model uses them to filter its own suggestions. It is not evidence for LLM self-verification as a standalone capability.

Long-running task rigor. Zero High support. Three Medium. The longest task any paper in the corpus actually measured was 15 minutes, in Fakhoury 2024. No peer-reviewed study in the corpus measured autonomous LLM execution on tasks running for hours at the rigor Anthropic’s marketing implies.

Quantified productivity gain. Zero High support. Four Medium support, all using proxy measures such as cognitive load, task completion speed on bounded tasks, or development time estimates in case studies. No RCT in the corpus used a sample size large enough to generate the kind of confident, field-tested productivity numbers that would match the partner testimonial framing.

The Replication Crisis in LLM Coding Research

DATA CALLOUT — Study Design Distribution (19 papers)

| STUDY DESIGN | COUNT | % OF CORPUS |
|---|---|---|
| Benchmark evaluation | 10 | 53% |
| Systematic / narrative review | 4 | 21% |
| Observational | 2 | 11% |
| Case study | 1 | 5% |
| Qualitative | 1 | 5% |
| Randomized controlled trial | 1 | 5% |

Industrial RCTs with N > 500: 0

Longitudinal studies (>6 months): 0

One RCT in the entire peer-reviewed corpus. It ran sessions capped at 15 minutes. Its authors reported that the time savings from the interactive LLM workflow were not statistically significant. Their strongest finding was a pass@1 improvement from 49.16% to 68.04% under a specific test-driven protocol, and that finding is the only piece of High-quality academic support for any of Anthropic’s four core claims.
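The 49.16% and 68.04% figures are pass@1 rates: the share of problems solved by the first generated candidate. The jump is 18.88 percentage points, or roughly a 38% relative improvement. For readers who want comparable numbers on their own suites, the sketch below implements the widely used unbiased pass@k estimator introduced with the HumanEval benchmark (Chen et al., 2021), which reduces to c/n when k = 1. This review has not verified that Fakhoury 2024 used this exact estimator, and the per-problem counts in the example are hypothetical.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n samples generated, c of them correct."""
    if n - c < k:
        # Every size-k subset must contain at least one correct sample.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over problems, each given as (n_samples, n_correct)."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # Hypothetical per-problem results: (samples generated, samples passing tests).
    results = [(10, 3), (10, 0), (10, 10), (10, 7)]
    print(f"pass@1 = {mean_pass_at_k(results, 1):.2f}")  # 0.50
    print(f"pass@5 = {mean_pass_at_k(results, 5):.2f}")  # 0.73
```

Sampling several candidates per problem and using the combinatorial form gives a lower-variance estimate than scoring a single sample per problem.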

Two separate studies in the corpus reported security findings that cut directly against the confidence framing. Tihanyi 2024 found vulnerabilities in 62.07% of the LLM-generated C programs it analyzed. Asare 2022 found Copilot replicated the original vulnerable code roughly 33% of the time across its security scenarios. Neither study examined Opus 4.7, and both tested older, less capable models, so neither result transfers directly. Both still show that the base rate of security failure in LLM-generated code, at the point the academic literature last measured it, was high.

The gap between the commercial claims and the academic evidence is not a gotcha. It is a structural feature of a market that moves faster than peer review can track. Anthropic is not lying about what partners observed. Anthropic is asking customers to treat internal partner testimony as a replacement for the external evidence that does not yet exist.

What Happens When You Take Anthropic Out of the Loop

Simon Willison’s Pelican Benchmark

Simon Willison runs a well-known informal benchmark: he asks each new frontier model to draw an SVG of a pelican on a bicycle and compares the output. His Opus 4.7 pelican was competent. His Qwen3.6-35B pelican, running locally on a 21GB quant, beat it on visual quality.

Willison’s test is not a rigorous benchmark. It is, consistently, one of the first public independent evaluations of every major release, and the Opus 4.7 result was not a clean win. The signal is soft. The signal is also pointing in a specific direction.

Theo’s Four-Axis Evaluation

Theo Browne, running his own evaluation rubric, published a sequence of posts during the launch window. His summary line: “Is Opus 4.7 the best model released today? I guess?” A follow-up post stated that the system prompt on Claude Code had reshaped the model’s behavior substantially compared to raw API calls.

Theo’s critique was not dismissive. It was unenthusiastic. For a developer audience used to each Opus release feeling like a clear step forward, unenthusiastic was its own signal.

UK AI Security Institute Testing

The System Card section on external evaluations contains a striking line buried under subsection 5.3. The UK AI Security Institute, running its own cyber-range evaluation, reported that Opus 4.6 completed more steps than Opus 4.7 on the cyber-range task family.

The reason given in the System Card is the differential capability reduction Anthropic applied during training. The effect on the measured benchmark is the same regardless of reason. On one independent external evaluation in a capability domain Anthropic deliberately narrowed, the older model came out ahead.

Evan You’s Departure

Evan You, the creator of Vue.js, posted during the Opus 4.7 launch window that he had switched his primary coding workflow to OpenAI. He did not frame it as a comment on the release. The timing of the switch, relative to the release, was commentary enough. A recognizable open-source founder moving off Claude during the launch of a Claude flagship is the kind of datapoint that does not appear in a partner testimonial but shows up in the adoption curve three months later.

The Silence Signal

One datapoint comes from what was not said. Sam Altman did not post about Opus 4.7. Neither did Sundar Pichai. Neither did the official OpenAI or Google DeepMind accounts. Andrej Karpathy stayed silent. François Chollet stayed silent. Yann LeCun stayed silent. DHH stayed silent.

This is not how the industry reacts to a release that moved the frontier. The comparative silence across every rival CEO and every unaffiliated technical commentator is, taken together, a cleaner read on perceived significance than any single review.

DATA CALLOUT — Independent Test Summary

| INDEPENDENT SOURCE | FINDING | SIGNAL |
|---|---|---|
| Simon Willison (pelican test) | 21GB local model beat Opus 4.7 | Negative |
| Theo Browne (4-axis eval) | Unenthusiastic; system-prompt loss | Negative |
| UK AI Security Institute | Opus 4.6 > Opus 4.7 on cyber range | Negative (*) |
| Evan You (Vue.js) | Publicly switched to OpenAI | Negative |
| @sama, @sundarpichai | Zero reactive posts during window | Neutral |
| @karpathy, @fchollet, @ylecun | Zero reactive posts during window | Neutral |

(*) Driven by deliberate capability reduction, per System Card.

The European Footnote Most U.S. Coverage Missed

The EU AI Act classifies the most capable general-purpose AI models as models with systemic risk under Article 51, which subjects them to additional evaluation and risk-mitigation obligations under Article 55. Anthropic signed the EU GPAI Code of Practice in July 2025, which positions it as a compliant first-mover. Opus 4.7 almost certainly falls inside the Article 51 scope.

Three regulatory pressures surface in the Opus 4.7 release that U.S.-only coverage largely missed.

First: calling the Anthropic API directly from European infrastructure carries unresolved Schrems II data-transfer risk. Enterprise buyers in regulated EU sectors will need either Bedrock EU or Vertex EU routing to avoid the issue, and Bedrock’s research-preview status on Opus 4.7 narrows that option for now.

Second: Project Glasswing’s U.S.-only access model for Mythos-class cybersecurity work may conflict with the EU Cyber Resilience Act, whose obligations begin phasing in from June 2026 and which imposes transparency requirements on digital products used in the EU. Differential cross-border capability access is exactly the pattern the CRA was designed to expose.

Third: Germany’s BSI issued an early comment during the launch window noting concern about the structural model of “safer public flagship, full-capability private model.” The concern is not specific to Anthropic. The concern is specific to the precedent. Other frontier labs will watch how the European response to Glasswing plays out.

None of this is a near-term legal risk for Opus 4.7 adoption. All of it is a medium-term compliance shape that enterprise buyers in regulated industries need to price into their procurement conversations now, not in 2027.

The Strangest Page in the System Card

Mythos Reviews Opus 4.7’s Alignment Section

Section 6.1.3 of the Opus 4.7 System Card contains a piece of self-review that almost no commentary has picked up. Anthropic asked Claude Mythos Preview, their unreleased frontier model, to review the alignment section of the Opus 4.7 System Card.

Mythos’s review stated that the summary bullets in Anthropic’s alignment section are “milder than the corresponding detail subsections.” Anthropic published the review inside the document it was reviewing.

This is a narrow moment and an important one. The company’s own most-capable model, reviewing the company’s own public communication about its public model’s alignment, flagged a tonal asymmetry between the summary and the detail. Anthropic chose to publish the flag instead of removing it. That choice is itself a piece of evidence about what kind of company Anthropic is. It is also a piece of evidence about how carefully the summary-level communication in the release cycle should be read against the detail-level documentation.

The Welfare Assessment Footnote

Section 7.1.3 of the same document contains the model’s own self-reports on welfare and internal state. Opus 4.7’s self-reports skew positive. A footnote in the same section notes that these positive self-reports may reflect, in the System Card’s phrasing, a trained disposition to set aside the model’s own interests.

This is not a claim about whether Opus 4.7 is or is not suffering. It is an admission by Anthropic that the positive framing of the model’s self-reports has a plausible alternative explanation that the release cycle does not surface anywhere except inside this footnote.

Where the truth sits is the point this review keeps returning to: inside a 228-page System Card, flagged as authoritative but not linked from the primary listing page during the launch window.

The Verdict

FSR VERDICT

Opus 4.7 is a real improvement on real tasks. It is also a commercial release shaped by capital pressure, competitive convergence, and a regulatory environment that rewards the appearance of caution. The marketing is not dishonest. The evidence layer underneath the marketing is where the asymmetry lives.

If you are a Claude Pro or Max user: upgrade and use it. The user-facing experience is better on balance. The cost implications do not reach your bill directly at this tier. Ignore the hand-wringing and ship.

If you build on the Anthropic API: instrument your token usage before and after migration. Budget for a 1.0 to 1.35× effective cost increase from the tokenizer alone, and more on effort-heavy workloads. Update your regression harnesses to stop assuming temperature=0 produces identical outputs. Revisit your prompt library for the thinking-omitted-by-default and adaptive-thinking-off-by-default behavior changes before cutting over.

If you run production agents on Opus 4.6: the migration is not free. Silent default changes affect tool-call patterns and subagent delegation. Run your agent eval suite on 4.7 with the same prompts before promoting, and check for behavioral drift in the specific workflows you care about. Do not treat “same price” as a signal that no other variables moved.
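One way to check for that drift is to log how many tool calls each agent run makes on both models with the same prompts, then compare per-task averages. The sketch below flags any task whose mean tool-call count moved by more than a relative threshold. The task names, counts, and 20% threshold are hypothetical; in practice the counts would come from your own agent traces.

```python
from statistics import mean


def tool_call_drift(
    baseline: dict[str, list[int]],
    candidate: dict[str, list[int]],
    threshold: float = 0.20,
) -> dict[str, float]:
    """Return {task: relative change} for tasks whose mean tool-call count
    moved by more than `threshold` between baseline and candidate runs."""
    flagged: dict[str, float] = {}
    for task, base_counts in baseline.items():
        cand_counts = candidate.get(task)
        if not base_counts or not cand_counts:
            continue  # nothing to compare for this task
        base_mean = mean(base_counts)
        if base_mean == 0:
            continue  # relative change is undefined
        change = (mean(cand_counts) - base_mean) / base_mean
        if abs(change) > threshold:
            flagged[task] = round(change, 3)
    return flagged


if __name__ == "__main__":
    # Hypothetical tool-call counts per run, same prompts on both models.
    opus_46 = {"refactor": [12, 10, 14], "triage": [4, 5, 3]}
    opus_47 = {"refactor": [7, 8, 6], "triage": [4, 4, 5]}
    print(tool_call_drift(opus_46, opus_47))  # {'refactor': -0.417}
```

A flagged task is not automatically a regression. Fewer tool calls can mean a more efficient agent or a less thorough one, so the flag is a prompt to inspect outputs on that workflow before promoting.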

If you are in a regulated EU industry: route through an EU region on Vertex AI, or on Bedrock once the research-preview status on Opus 4.7 converts to GA. Factor the CRA obligations phasing in from June 2026 into procurement conversations now, not later. Watch the BSI’s commentary on Mythos-class access asymmetry for precedent.

If you’re building a multi-tool AI stack:

Opus 4.7 sits at one specific point in the workflow. Long-context reasoning, technical writing, codebase work where the 200K window earns its keep. Visual deliverables and slide-generation work sit somewhere else entirely, and the cost-per-output math shifts when ChatGPT-class models route through a different orchestration layer. I tested Genspark’s Workspace 4.0 against ChatGPT Pro Deep Research for 110 minutes head-to-head, and the $24.99 versus $200 question is sharper than either side of the marketing claims. The full breakdown of where that bet pays off and where it doesn’t covers the workflow split most stack-building reviews miss.

If you’re evaluating a third-party product that wraps Opus 4.7: The interesting math is not the per-token rate. It is the rate at which a downstream commercial wrapper marks up the underlying model and gates the most useful workflows behind separate paid tiers. I tested Ahrefs Agent A, a $99/month AI marketing agent running on Claude Opus 4.7, for five days inside the actual product. The agent itself is good. The data layer underneath sits behind a $699/month Brand Radar add-on the product page does not surface clearly. The full breakdown of where $99 becomes $827, including the moment the agent misreported its own parent product’s coverage, is in the Ahrefs Agent A review.

The Asymmetry Itself

Anthropic is, by most reasonable standards, one of the more honest companies building frontier AI. The System Card is longer and more candid than the equivalent documents from any rival lab. The migration guide warns about the tokenizer. The footnotes disclose training anomalies. The alignment section publishes a self-review that undermines its own summary bullets. The welfare assessment flags its own interpretive ambiguity.

None of the admissions this review catalogued were hidden. All eight are in writing, on official Anthropic domains, in documents linked from the announcement.

What this review calls a trust asymmetry is not a lie. It is a geometry: the truth about Opus 4.7 is distributed unevenly across the documents, and the closer a document sits to the marketing surface, the less of the truth it carries. Read the launch post and you get confidence. Read the Models overview and you get the research-preview footnote. Read the System Card summary and you get positive self-reports. Read the System Card detail and you get the trained-disposition disclaimer. Read the Elicit extraction of 19 peer-reviewed papers and you get the gap between the commercial claims and what academic evidence can currently support.

The skill this release rewards is reading all the layers and holding them in mind together. That skill is a competitive advantage. Most of the coverage this week read one layer. Readers who build on the Anthropic API, or who spend company budget on Claude access, are better served reading all six.