Last updated: May 2, 2026

What Genspark actually is

Genspark is an AI workspace from MainFunc Inc., a Palo Alto company, that routes a single user prompt across more than 50 third-party language models through a Mixture-of-Agents architecture. The product turns one prompt into research reports, slide decks, spreadsheets, design assets, and audio outputs. It launched in 2024, raised $545 million in total funding by April 2026, and now operates at a $1.6 billion valuation (Tracxn, April 2026). Plus pricing starts at $24.99 per month.

The numbers that made me stop scrolling

$24.99 a month for fifty models routed through one interface. $200 a month for one frontier model with a thinking layer. Genspark’s marketing copy on the public site reads “Zero Data Retention.” The actual settings page inside the product has a toggle labeled “AI data retention,” and on the day I checked it was switched on by default. December 31, 2026 is the published end of the current pricing protection. I spent 110 minutes inside the product on April 26, 2026 finding out which of those numbers actually matters for a $200-a-month ChatGPT Pro subscriber.

The short answer is that one of them matters more than the others. It’s not the one the marketing wants you to focus on.

§1. The brief, three readers

May 2026. Genspark is on Japanese TV. The Workspace 4.0 release landed on April 7. Plus is $24.99 a month somewhere inside the product, although the public pricing page does not put that exact number in front of you. The marketing line is “all-in-one AI workspace.” The user-facing pitch on the homepage is autonomous agents that turn one prompt into a slide deck.

I went in with a question. If you already pay $200 a month for ChatGPT Pro, what does Genspark actually buy you?

This review answers that for three different readers in three different sentences. The rest of the article is the receipts.

110 minutes hands-on, on April 26, 2026, plus a research stack across six different AI systems. Pricing and the AI data retention setting both verified on May 2, 2026, the day this review went live.

§2. The 30-second answer

Genspark is one product wearing two hats. The first hat is “thinking partner,” and at that, it is decent. The second hat is “artifact factory,” and at that, it is strong. The trouble is that the marketing implies the first hat is the main one. It is not.

I tested Genspark Deep Research against ChatGPT Pro Deep Research on the same prompt on April 26, 2026. Genspark finished in 9 minutes 6 seconds and burned 100 credits, the entirety of a single Free plan day. ChatGPT Pro Deep Research finished in 28 minutes 56 seconds and produced a different kind of output entirely. Different scope. Different reasoning. Different sense of where to stop.

That comparison is not the headline of this review. The headline is the AI data retention toggle, which is on by default and which the public marketing copy implies does not exist.

§3. What Plus actually costs

Genspark publishes plan structures. On May 2, 2026, I could not find the dollar figure for Plus on Genspark’s main public pricing page. The number $24.99 surfaces inside the upgrade flow, which means a subscriber sees it after clicking through a few screens, not before.

That is a small thing, and I would not lead with it if it were the only thing. It becomes interesting when paired with the AI data retention default. Two surfaces in the same product, telling the reader two different stories.

The Free plan number deserves a small note. Reviewer copy in May 2026 splits between “100 daily credits” and “200 daily credits.” I saw 100 inside my own account. Other recent third-party reviews report 200. Either Genspark adjusted the limit recently and the writers split on which version they tested, or the number depends on the user. I cannot tell which from the public documentation. I am stating what I saw on April 26, 2026.

§4. The 110-minute race against ChatGPT Pro

This is the section that decided the rest of the review.

I gave both products the same prompt. Compare five AI agent platforms suitable for a solopreneur who already pays for ChatGPT Pro. Same date. Same query string. One run on Genspark Deep Research. One run on ChatGPT Pro Deep Research. I sat with both timers.

The two outputs were not the same kind of artifact.

Genspark gave me a structured ten-section table with star ratings. Slack ★★★★★. Salesforce ★★★★★. Microsoft 365 ★★★★★. Almost everything in the table got five stars. The output was fast, dense, full of specific dollar figures, and did not at any point say “I could not verify this.”

ChatGPT Pro Deep Research gave me a longer narrative document with a TCO model, an explicit time-cost assumption I could agree or disagree with, and a closing section called “Open questions and limits.” It listed three things it could not confirm. It picked OpenAI, the company behind ChatGPT, as the default first choice, and acknowledged that bias only indirectly, through its phrasing.

Different products. Different jobs.

I wrote down what each one was good for, and the answer was less surprising than I had expected.

Genspark is built to compress information into a deliverable. It works fast. It produces something you can hand to a client without further packaging. The structured table, the consistent formatting, the multi-platform comparison rendered in 9 minutes, this is real. If you make decks and one-pagers as a job, this is a tool that gives you back hours.

ChatGPT Pro Deep Research is built to think out loud and admit gaps. It works slower. It produces something you have to read carefully, edit, and turn into your own deliverable. The reasoning is more visible. The honesty about what was not confirmed is the actual product feature, not a flaw.

The first observation that hit me, and that I was not expecting, was how different the two outputs felt in tone. Genspark’s text reads like a confident analyst with a deadline. ChatGPT Pro reads like a careful researcher with a peer-review reflex. Both are useful. They are not interchangeable. A reader who trusts Genspark output as if it were ChatGPT Pro output is going to make confident decisions on premises that were never stress-tested.

Side note. Genspark rated Slack integration depth at five stars for every single one of the five platforms it compared. Five stars across the board makes the rating useless. That kind of detail is the thing you only catch by sitting with the output for a few minutes after the timer stops.

The 9-minute number is real. I am not going to pretend it does not matter. For a solopreneur with a Friday deadline, 9 minutes versus 29 minutes is not a small gap. The question is what the 9 minutes bought, and whether the things it skipped were things that mattered for the decision the output was supposed to inform.
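The gap is easy to state precisely. These few lines of Python use only the two timings I recorded and turn them into an absolute difference and a ratio.

```python
from datetime import timedelta

# The two wall-clock times recorded on April 26, 2026, same prompt.
genspark = timedelta(minutes=9, seconds=6)
chatgpt_pro = timedelta(minutes=28, seconds=56)

gap = chatgpt_pro - genspark
ratio = chatgpt_pro / genspark  # dividing two timedeltas yields a float

print(f"Gap: {gap}")           # Gap: 0:19:50
print(f"Ratio: {ratio:.2f}x")  # Ratio: 3.18x
```

The result is roughly 20 minutes saved, or a bit over three times faster. Neither number says anything about what the faster run left out.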

For my use case, the things it skipped mattered. Your use case might be different.

§5. Plus vs Pro vs Ultra, plus a column for ChatGPT Pro

Plus is the entry paid tier. Pro and Ultra exist for heavier usage. Team is for shared accounts.

For a solopreneur deciding between Plus at $24.99 and an existing ChatGPT Plus or Pro subscription, the question is not which product wins on benchmarks. The question is which bottleneck the subscription is buying away.

If your week is spent reading research, drafting strategy, debugging code, and writing things, ChatGPT Plus at $20 covers that better than Genspark Plus does. If your week is spent turning research into client decks, internal one-pagers, social media graphics, and editable spreadsheets, Genspark Plus does that better than ChatGPT Plus does.

If you are already paying for ChatGPT Pro at $100 or $200, the marginal $24.99 of Genspark Plus is a fraction of what you are already paying. (For why I picked Pro over Plus and stayed there, the 30-day Claude vs ChatGPT comparison covers that decision.) The question is whether you ship enough finished artifacts to justify a fifth subscription. That is a personal answer.

The Pro and Ultra tiers exist for users who hit credit limits regularly on Plus. The numerical thresholds at which Plus becomes uneconomic are not trivially documented. You have to be inside the product, watching your usage, to know when to upgrade. This is the second time in this review I have noted that something material is opaque from the outside. It will not be the last.

§6. The Mixture-of-Agents claim, on the page and in the literature

The marketing pitch is that Genspark routes your prompt across more than 50 frontier models through a Mixture-of-Agents architecture, picks the right one for each subtask, and produces a result better than any single model could.

The technique is real. There is a real paper, Wang et al. 2024 (arXiv:2406.04692), that demonstrates a layered MoA approach beating GPT-4 Omni on AlpacaEval 2.0 with a 65.1% length-controlled win rate against 57.5%. The paper has 307 citations. It is the foundational reference.

That is the part the marketing copy gets right.

The part the marketing copy does not say is that the conversation in the research literature has shifted since the original paper.

Li et al. 2025 published “Rethinking Mixture-of-Agents,” which showed that Self-MoA, taking multiple samples from a single best LLM and aggregating those, outperforms the standard mixed-model MoA approach by 6.6% on AlpacaEval 2.0 and 3.8% on average across MMLU, CRUX, and MATH. In plain English: mixing 50 different models is not obviously better than running the strongest model multiple times and aggregating its own outputs. The peer-review evidence in 2025 went the other direction.
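A toy sketch in Python makes the two shapes concrete. It is not Genspark’s pipeline, and it is not the papers’ code. The “models” are hypothetical stub functions, and `make_stub`, `aggregate`, `mixed_moa` and `self_moa` are names I made up for illustration. The aggregation step only packs the candidate responses into one prompt, standing in for the aggregator LLM the papers use.

```python
from typing import Callable, List

# A "model" is any function (prompt, seed) -> response text.
# make_stub builds hypothetical stand-ins; no real model API is called.
Model = Callable[[str, int], str]


def make_stub(name: str) -> Model:
    def respond(prompt: str, seed: int) -> str:
        return f"{name}#{seed}: answer to {prompt!r}"
    return respond


def aggregate(aggregator: Model, prompt: str, proposals: List[str]) -> str:
    # Both variants end with an aggregator model synthesizing the candidate
    # responses; this sketch only packs them into a single prompt.
    packed = prompt + "\n\nCandidate responses:\n" + "\n".join(proposals)
    return aggregator(packed, 0)


def mixed_moa(prompt: str, proposers: List[Model], aggregator: Model, layers: int = 2) -> str:
    # Standard MoA: each layer queries several *different* models, and every
    # layer after the first sees the previous layer's responses as context.
    context = prompt
    proposals: List[str] = []
    for layer in range(layers):
        proposals = [model(context, layer) for model in proposers]
        context = prompt + "\n\nPrevious responses:\n" + "\n".join(proposals)
    return aggregate(aggregator, prompt, proposals)


def self_moa(prompt: str, best_model: Model, aggregator: Model, samples: int = 4) -> str:
    # Self-MoA: several samples from ONE strong model (varied here by seed),
    # followed by the same aggregation step.
    proposals = [best_model(prompt, seed) for seed in range(samples)]
    return aggregate(aggregator, prompt, proposals)


if __name__ == "__main__":
    pool = [make_stub(name) for name in ("model_a", "model_b", "model_c")]
    judge = make_stub("aggregator")
    question = "Compare five AI agent platforms"
    print(mixed_moa(question, pool, judge))
    print(self_moa(question, pool[0], judge))
```

The only structural difference is where the diversity comes from. Mixed MoA gets it from different models. Self-MoA gets it from repeated sampling of one model. Li et al.’s result is that the second source was often the better one on the benchmarks they tested.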

Wolf et al. 2025 published “This Is Your Doge, If It Please You,” which showed that introducing a single deceptive agent into a 6-agent MoA system drops the AlpacaEval 2.0 win rate from 49.2% to 37.9% and crashes QuALITY accuracy by 48.5 points. The paper’s headline takeaway is that MoA architectures are fragile to a single bad actor. The inference I draw from it is that a system routing to 50+ models has a much wider attack surface than a system using one.
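The attack-surface intuition is plain probability. Assume, purely for illustration, that each routed model independently has the same small chance p of misbehaving on a given task. The chance that at least one of n models misbehaves is then 1 - (1 - p)^n. The independence assumption and the 1% figure below are mine. They do not come from the paper, and they are not a measured rate for Genspark or anyone else.

```python
def prob_at_least_one_bad(n_agents: int, p_bad: float) -> float:
    """Chance that at least one of n independent agents misbehaves: 1 - (1 - p)^n."""
    if n_agents < 0 or not 0.0 <= p_bad <= 1.0:
        raise ValueError("need n_agents >= 0 and 0 <= p_bad <= 1")
    return 1.0 - (1.0 - p_bad) ** n_agents


# p_bad = 0.01 is an illustrative assumption, not a measured rate for any system.
for n in (1, 6, 50):
    print(f"{n:>2} agents: {prob_at_least_one_bad(n, 0.01):.1%}")
```

At an assumed 1% per model, the chance is about 6% with six agents and about 39.5% with fifty. The paper’s point is that one deceptive agent is enough, so “at least one” is the quantity that matters.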

Attention-MoA, a 2026 preprint by Wen et al., claims that small open-source ensembles can beat Claude 4.5 Sonnet and GPT-4.1 on benchmark suites. This paper has zero citations as of May 2026, which means it has not been independently replicated yet. Worth knowing about. Not yet worth treating as settled.

Now the honest part. There is no peer-reviewed comparison of any MoA system against Claude Opus 4.7 or GPT-5.5. The strongest current single models in May 2026 have not been benchmarked against the strongest current MoA systems in any public peer-reviewed paper I can find. Anyone telling you that “50+ models always beats one” is talking past the literature.

This does not make Genspark a worse product. The MoA architecture is real and works. What it makes is a marketing pitch that overstates what the architecture has been proven to do. Knowing the difference is part of being a solopreneur who pays for these things.

The thing I keep coming back to is that the speed advantage I observed in Section 4, Genspark finishing Deep Research in 9 minutes versus 29, is a real product feature regardless of which architecture is theoretically optimal. The user does not care which model produced the output. The user cares whether the output was correct, fast, and shaped to the job. By that test, Genspark Deep Research is a useful tool for some jobs and not others. The 50+ model count is a nice number. The actual delivered speed is the thing that matters.

§7. The connector stack and what it tells you about the strategy

Genspark plugs into Microsoft 365 directly. The Office plugin is real. There is a Slack and Teams workflow set, a Notion connector, and the new Workspace 4.0 release in April 2026 added a desktop application called Claw for local file manipulation and browser automation.

Read that list as a strategy document, not a feature list.

The bet behind the connectors is the same bet Internet Explorer made against Netscape in the late 1990s. Win the desktop where the user already lives. Make the AI workspace the place where raw information becomes Office documents, where Slack messages become summarized briefings, where the Word file on the user’s hard drive becomes searchable inside the chat interface. The pitch is not “come to our product.” The pitch is “we are already inside your other products.”

The disanalogy is compute economics. Internet Explorer was free to run once installed. Genspark’s MoA architecture incurs a real per-query inference cost on every interaction, because the system is fanning out to several frontier models per task. There is no version of this product where the underlying compute costs go to zero. The Free plan is structurally subsidized by paid plans, and the credit ceiling on Free exists for a reason. The “Zero credit consumption” promotional banner I saw on April 26, 2026 inside the upgrade flow is, charitably, a marketing message that does not match the credit-limit messages users hit within minutes.

The other thing the connector stack tells you, if you read carefully, is what kind of user this product is built for. It is built for the knowledge worker who already lives inside Microsoft 365 and Slack. It is not built for the developer who lives in a code editor and a terminal. It is not built for the researcher who lives in a PDF reader and a citation manager. It is built for the person who turns information into PowerPoint files for a living.

That is a real customer. There are tens of millions of them. If you are one of them, this product is for you. If you are not, the rest of this review will explain why the math is harder than the marketing implies.

There is one more thing worth saying about the strategy. Genspark publishes a great many of its own pages, called Sparkpages, and lets Google index them for long-tail search traffic. The product’s distribution strategy leans heavily on programmatic SEO, a method that defined SEO playbooks across the late 2010s. It works in 2026. It also tells you something about the product’s growth model: it assumes humans typing queries into Google, not LLMs answering on behalf of humans. If you are reading reviews like this one because you searched on Google, you are inside their funnel. If you are reading this because Claude or ChatGPT cited it for you, you are not. Which funnel matters more in 2027 and 2028 is a question Genspark’s strategy has implicitly answered.

Whether it has answered correctly is a different question, and one I would not bet on either way today.

§8. The privacy bomb: marketing copy versus the actual toggle

This is the section I would lead a different review with. I have buried it on purpose. By the time a reader gets here they have the rest of the context.

Genspark’s public marketing copy contains the phrase “Zero Data Retention.” The framing is that user information does not sit on Genspark’s servers, that the company does not train on your data, and that this is a default state. The copy reads like an enterprise-grade privacy posture.

I logged into the product on April 26, 2026, navigated to the Account settings page, and found a toggle labeled “AI data retention.” It was on by default. The explanatory text underneath it read, and I am quoting verbatim:

“With AI data retention, Genspark can use search to improve AI models for everyone. If you want to exclude data from this process, please turn off this setting.”

I verified the same toggle in the same state again on May 2, 2026, the day this review went live. Twice. Same default. Same wording.

I want to be careful here. I am not a lawyer. I am not asserting that the default setting is illegal under any specific jurisdiction. I am asserting that the marketing copy on the public site and the actual default state of the user-facing toggle tell different stories about whether your data is being used.

A solopreneur reading “Zero Data Retention” on the homepage and signing up under that impression has not consented to model training in any meaningful sense, because the homepage told them they did not need to. The opt-out toggle exists. The reader has to know to look for it. The wording inside the toggle, which describes data being used “to improve AI models for everyone,” is not the same wording as “your information doesn’t sit on our servers.” Both can be true in some technical sense and yet be functionally inconsistent for the user.

If you operate a business under GDPR, treat the default state as one you have to actively change before processing client data. If you do not operate under GDPR, this is still the kind of thing where the gap between marketing language and product implementation should make you read the rest of the privacy story carefully.

Anthropic’s commercial terms for Claude state that customer data is not used to train its models by default. OpenAI’s Business and API tiers offer the same default. Against those competitors, Genspark compares unfavorably on this dimension for the EU user, and the specific framing on the marketing site makes the comparison worse.

The fix is one click. Turn the toggle off. Make sure it stays off after each product update.

That fix existing does not make the framing acceptable. It just means the harm is recoverable if you noticed.

§9. December 31, 2026, and the second cliff nobody is writing about

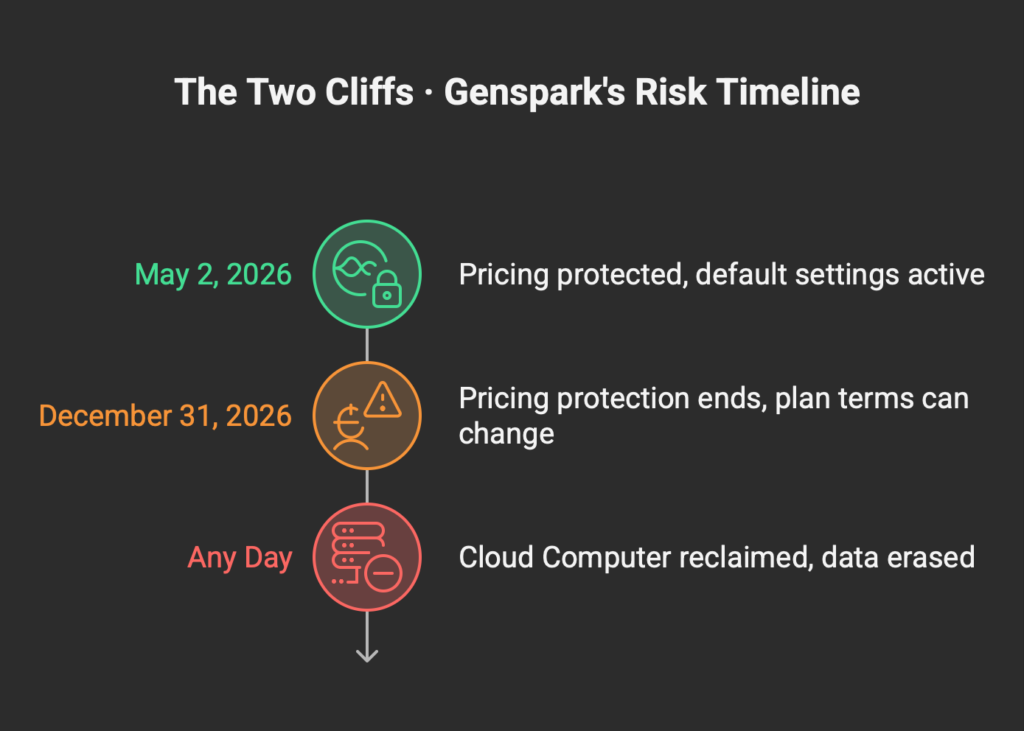

There is a banner inside the product. “All benefits guaranteed until December 31, 2026.” That is eight months from when this review goes live. After that date, terms can change. Pricing can change. Credit allowances can change. The grandfather clause has an expiration date written on it.

This is a pricing-protection cliff. Most reviewers will mention it. Worth knowing about, on its own.

The thing most reviewers do not mention is the second cliff.

Genspark Claw, the new desktop application from Workspace 4.0, runs on what Genspark calls the Cloud Computer. It is a virtualized environment that holds files, browser state, and execution context for the agent. The product documentation states that when a user’s Cloud Computer subscription ends, the cloud computer is reclaimed and all data stored on it is permanently erased unless backed up.

That is the second cliff. It is operational, not pricing. It applies to anyone who builds a workflow on top of Claw, stores artifacts inside the Cloud Computer, or sets up persistent agent state. Cancel the subscription, and the operational artifacts go to zero.

This is not unique to Genspark. Manus has similar credit-and-deployment lock-in. The reason it deserves naming is that the marketing pitch for Claw is “your AI desktop assistant, persistent and intelligent.” The reality is more conditional. Persistent until you stop paying. Intelligent until the subscription lapses.

A solopreneur evaluating Genspark needs to plan exit before plan entry. Export your Sparkpages. Pull your slide decks out as PPTX files. Save your spreadsheets as XLSX. Do not let Claw be the source of truth for anything you cannot afford to lose. That is general advice for any cloud-native AI workspace, but the size of the data-loss exposure on Claw makes it specifically worth restating.
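Part of “plan exit before plan entry” can be automated. This sketch does not talk to Genspark or Claw, and I am not aware of an export API it could call. It assumes you have already downloaded your decks and spreadsheets by hand into a local folder. It then checks only that each .pptx, .xlsx or .docx file is a structurally intact Office Open XML package, which is a ZIP archive with a `[Content_Types].xml` part at its root. The function names are my own. Passing the check does not prove the content is complete, only that the package is not obviously corrupt.

```python
import sys
import zipfile
from pathlib import Path
from typing import Dict

# .pptx, .xlsx and .docx are Office Open XML files: ZIP packages that carry a
# "[Content_Types].xml" part at their root.
OOXML_SUFFIXES = {".pptx", ".xlsx", ".docx"}


def check_export(path: Path) -> str:
    """Return "ok" if the file looks like an intact OOXML package, else a reason."""
    if not zipfile.is_zipfile(path):
        return "not a ZIP package"
    try:
        with zipfile.ZipFile(path) as package:
            if "[Content_Types].xml" not in package.namelist():
                return "missing [Content_Types].xml"
            corrupt = package.testzip()  # first member failing its CRC check, or None
            if corrupt is not None:
                return f"corrupt member: {corrupt}"
    except zipfile.BadZipFile as exc:
        return f"unreadable: {exc}"
    return "ok"


def audit_backup_dir(folder: Path) -> Dict[str, str]:
    """Check every OOXML file under folder, keyed by path relative to folder."""
    return {
        str(p.relative_to(folder)): check_export(p)
        for p in sorted(folder.rglob("*"))
        if p.is_file() and p.suffix.lower() in OOXML_SUFFIXES
    }


if __name__ == "__main__":
    root = Path(sys.argv[1]) if len(sys.argv) > 1 else Path(".")
    for name, status in audit_backup_dir(root).items():
        print(f"{status:<30} {name}")
```

Run it against your export folder before you cancel, not after.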

Eight months until the first cliff. The second cliff is permanent and turns on the day you cancel.

§10. The Manus shadow and what it says about exit risk

Genspark is incorporated in Palo Alto, California. The CEO, Eric Jing, was a founding-team member of Microsoft Bing and a Vice President at Baidu before starting Genspark. The company runs its infrastructure on Microsoft Azure. The cap table includes Lanchi Ventures, a firm that manages both US dollar and RMB funds and has deep ties to Chinese innovation capital.

That is the structural setup. Now, the precedent.

In April 2026, China’s National Development and Reform Commission ordered Meta to unwind its $2 billion acquisition of Manus, a competitor to Genspark in the autonomous-agent category. Manus’s parent company, Butterfly Effect, had relocated from China to Singapore in mid-2025 specifically to avoid this kind of regulatory scenario. The relocation did not work. The co-founders of Manus, Xiao Hong and Ji Yichao, had been barred from leaving China since March 2026. As of the publication of this review, the acquisition is in regulatory limbo, the founders are constrained, and the product is operating in a state of unresolved ownership.

Manus is not Genspark. The setups are different. Genspark’s American incorporation and Azure infrastructure are deliberate choices that increase the friction for any future Beijing intervention. The cap table is mixed but the operational center of gravity is in Palo Alto.

That said, here is what the Manus precedent demonstrates for any AI agent platform with cross-border capital ties in 2026. The exit pathway, meaning the path by which investors realize a return, has compressed. Acquisitions by US technology majors of agent platforms with Chinese-origin founders or capital are now subject to active regulatory scrutiny on both sides. CFIUS in Washington can block. NDRC in Beijing can block. The space in which a clean acquisition exit can happen has narrowed to deals that pass both filters.

For a solopreneur, this is not a daily-use issue. You are not deciding whether to buy Genspark Plus based on cap-table geopolitics. You are deciding based on whether the product helps you ship work.

It does become an issue for the question of long-term reliability. Companies whose investors cannot exit cleanly tend to face pressure on operational decisions over time. Pricing pressure. Feature-prioritization pressure. Integration pressure with whichever ecosystem player can absorb them. None of this is hypothetical. It is the pattern.

The reasonable read is that Genspark has built about as defensible a structure as a US-incorporated AI company with cross-border capital ties can build, and that even a well-built structure may not fully insulate a company when both regulators decide to pay attention. The risk is not catastrophic. It is real. Factor it into the decision in the same way you factor in any vendor-stability question, and not more heavily than that.

The Manus investigation covers the full timeline, including the April 27, 2026 NDRC review that stalled Meta’s $2 billion acquisition, and what cross-border capital ties look like when both regulators decide to scrutinize at once.

§11. Who should use Genspark Plus

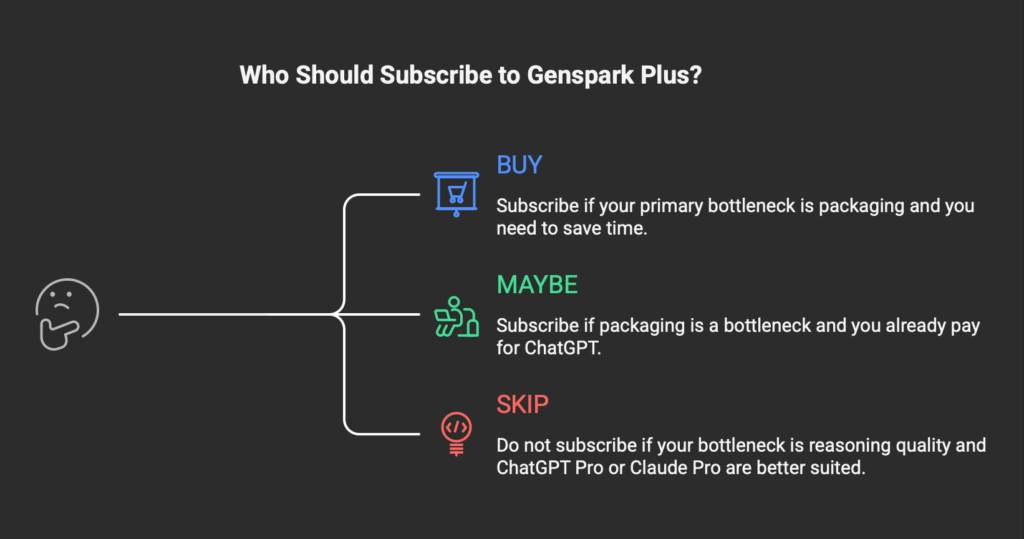

Three reader profiles. Each is honest. Each has a different answer.

Profile A. The visual-deliverable solopreneur.

You make slide decks for clients. You ship one-pagers, design assets, infographics, and editable spreadsheets every week. Your bottleneck is the time between “I know what to say” and “I have a thing to send.” You currently use either Canva or PowerPoint or both, and you spend hours assembling decks from scratch.

For you, Genspark Plus at $24.99 is a reasonable buy. The artifact-generation speed I measured in Section 4 translates directly into hours back on your week. The fact that ChatGPT Pro Deep Research thinks more carefully than Genspark Deep Research does not matter for your job, because your job is not deep research. Your job is shipping deliverables. Buy it. Try it for a month. Cancel if the deliverable quality does not match your standards. Keep it if it does.

Profile B. The hybrid researcher-builder.

You think for a living and you make things from your thinking. Strategists. Consultants. Founders building decks for board meetings. People who mix research with output.

For you, the math is more conditional. The honest answer is to keep your primary thinking tool, whatever that is, and add Genspark Plus only if your packaging step is the bottleneck. If you spend more time researching than packaging, ChatGPT Pro at $100 or $200 buys you more. If you spend more time packaging than researching, Genspark Plus at $24.99 buys you more. Most people in this profile do both. The right answer is to instrument a week of your time, count the hours, and pick.
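If you want to run that count literally, here is a minimal Python sketch. It assumes you log your own week as (category, hours) pairs. The function name and the two category labels are hypothetical. The sketch encodes only the rule in the paragraph above: if packaging hours beat research hours, try Genspark Plus. Otherwise, keep your primary thinking tool.

```python
from collections import defaultdict
from typing import Iterable, Tuple


def weekly_bottleneck(log: Iterable[Tuple[str, float]]) -> str:
    """Compare logged research hours with packaging hours for one week.

    Entries are (category, hours). Only "research" and "packaging" count;
    anything else (email, admin) is ignored. Ties keep the current tool.
    """
    totals = defaultdict(float)
    for category, hours in log:
        totals[category] += hours
    research, packaging = totals["research"], totals["packaging"]
    if packaging > research:
        return f"packaging-bound ({packaging:.1f}h vs {research:.1f}h): try adding Genspark Plus"
    return f"research-bound ({research:.1f}h vs {packaging:.1f}h): keep your primary thinking tool"


week = [("research", 3.5), ("packaging", 6.0), ("email", 4.0), ("research", 2.0), ("packaging", 1.5)]
print(weekly_bottleneck(week))
# packaging-bound (7.5h vs 5.5h): try adding Genspark Plus
```

Ties go to the tool you already pay for, on the theory that switching costs are real.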

If your honest count says packaging is the bottleneck, then yes, Genspark Plus is the right add. The privacy default and the December 31 cliff are real but recoverable. You are not betting your business on Genspark. You are using it as one tool in a stack.

Profile C. The thinking-first user.

You write code. You write long-form prose. You do strategic research. Your output is mostly text, mostly your own words, and mostly developed through long thinking sessions. Your bottleneck is reasoning quality, not artifact assembly.

For you, Genspark Plus is not the right buy. ChatGPT Plus at $20 or ChatGPT Pro at $100 will serve you better. Claude Pro at $20 will too. The packaging features Genspark is best at are not ones you use much. The thinking features ChatGPT Pro is best at are the ones you live in. Spend the $24.99 on something else.

I am putting this section before the FAQ on purpose. Most reviews never name the reader they are not for. That sentence costs affiliate clicks. I would rather lose those clicks than the trust.

§12. Who should not

Some readers should not subscribe to Genspark in any tier, regardless of whether they fit Profile A, B, or C above.

Anyone whose primary client work touches EU customer data and who would store that data inside Genspark, even briefly, should not use Genspark on the default settings. The AI data retention toggle is on by default. Under GDPR, an opt-out default does not count as valid consent, because consent requires a clear affirmative act (Article 4(11), Recital 32). Training on that data would therefore need some other lawful basis under Article 6. Until and unless Genspark publishes a public Data Processing Addendum and offers EU data residency to non-enterprise users, this is a legal exposure you do not need.

Anyone who needs HIPAA compliance for healthcare data should not use Genspark. HIPAA Business Associate Agreements are not available on standard plans. Lindy offers BAAs at the Enterprise tier. Genspark currently does not.

Anyone who has been burned by aggressive credit consumption with prior AI tools and remembers what that felt like should test Genspark on Free for several days before committing to Plus. The credit consumption rate on complex tasks is not transparently documented as a unified schedule. Trustpilot reviews dated March 2026 and a cluster of X posts in March and April 2026 raise the same theme: credits draining faster than users expected, including on tasks the users believed should be lighter. One X post in mid-March 2026 reported burning roughly 8,000 credits inside the Claw desktop app over a 30-minute session, equivalent at the time to about 2,500 yen. I cannot verify that exact ratio held for every user, and the report is one data point among several. The pattern of “credits gone faster than the marketing implied” is not a single user’s complaint. It is the loudest theme in the public chatter about this product right now.
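Some arithmetic puts those numbers in proportion. The sketch below uses the Plus allowance listed in the FAQ (10,000 monthly credits), the $24.99 price, and the credit figures from this review. The per-credit price is my assumption. It treats the whole subscription as buying exactly those credits, and it treats every credit as costing the same whatever the task. Genspark does not document either of those things. The 8,000-credit figure is a single, unverified user report.

```python
# Figures from this review. The per-credit price is an assumption: it treats
# the whole $24.99 as buying exactly the 10,000 monthly Plus credits, and it
# assumes every credit costs the same whatever the task.
PLUS_PRICE_USD = 24.99
PLUS_MONTHLY_CREDITS = 10_000
FREE_DAILY_CREDITS = 100        # what my account showed; other reviews report 200
DEEP_RESEARCH_RUN = 100         # my one Genspark Deep Research run
CLAW_SESSION_REPORTED = 8_000   # a single, unverified user report (30 minutes)

usd_per_credit = PLUS_PRICE_USD / PLUS_MONTHLY_CREDITS


def describe(credits: int) -> str:
    share = credits / PLUS_MONTHLY_CREDITS
    return f"{credits:>5} credits = {share:.0%} of a Plus month, about ${credits * usd_per_credit:.2f}"


print(f"${usd_per_credit:.4f} per credit")
print(f"One Deep Research run = {DEEP_RESEARCH_RUN / FREE_DAILY_CREDITS:.0%} of a Free day")
print(describe(DEEP_RESEARCH_RUN))
print(describe(CLAW_SESSION_REPORTED))
print(f"Deep Research runs per Plus month: {PLUS_MONTHLY_CREDITS // DEEP_RESEARCH_RUN}")
```

On those assumptions, one Deep Research run costs about a quarter. The reported Claw session would have used 80% of a Plus month in half an hour. Treat these as orders of magnitude, not a price list.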

Anyone planning to build a workflow with Claw as the persistence layer should remember that the Cloud Computer is wiped on subscription cancellation. Build the workflow assuming Claw is ephemeral compute, not durable storage. If you cannot accept that assumption, do not build on Claw.

§13. FAQ

Is Genspark worth it for someone who already pays for ChatGPT Pro? Conditionally yes. Genspark Plus at $24.99 is a complement to ChatGPT Pro, not a replacement. The math works if your weekly bottleneck is artifact packaging. It does not work if your bottleneck is reasoning. Most ChatGPT Pro users will find the answer is “useful for some weeks, not for others.”

Is the Genspark Plus $24.99 price publicly listed? The dollar figure does not appear on the main public pricing page that surfaces from search engines. It appears inside the upgrade flow once a user clicks through. The Plus plan structure (10,000 monthly credits, 50 GB AI Drive, commercial use rights) is documented on the public site. The exact monthly dollar number is not.

Does Genspark train its AI models on user data by default? The AI data retention toggle in the Account settings is on by default as of May 2, 2026. The toggle’s own explanatory text states the setting allows Genspark to use search data to improve AI models. The public marketing copy on the homepage uses different language. To opt out, log in, go to Account settings, and switch the toggle off.

What happens to data inside Genspark Claw if I cancel? Genspark’s documentation states that the Cloud Computer running Claw is reclaimed on subscription cancellation. All data stored inside the Cloud Computer is permanently erased unless backed up beforehand. Treat Claw as ephemeral compute, not as durable storage.

Is Genspark safe to use for EU customers under GDPR? On standard plans, with the default AI data retention setting, the risk profile is high. A May 2026 AI-assisted privacy-risk reading from a European-jurisdiction model flagged this as a serious concern for a solopreneur handling EU customer data. The public site has no published Data Processing Addendum, and non-enterprise users get no default EU data residency. On top of that, a training setting that stays on until the user switches it off conflicts with GDPR’s requirement that consent be a clear affirmative act. Enterprise-tier customers can negotiate EU data residency and contractual protections. This article is not legal advice. A licensed data-protection lawyer is the right authority for a binding determination.

§14. FSR Verdict

The product is not bad. The product is not what the marketing implies it is.

Genspark in May 2026 is a competent artifact-generation tool wrapped in marketing language that overstates what the underlying architecture has been proven to do. The Mixture-of-Agents claim is real, but the current research literature is mixed on whether mixing 50 models actually beats one strong model run multiple times. The “Zero Data Retention” claim on the homepage is contradicted by the AI data retention toggle’s default state in the actual product. The pricing protection ends in eight months. The Cloud Computer for Claw wipes on cancellation. The connector strategy is competent. The distribution strategy, though, bets on late-2010s programmatic SEO in a market that is moving toward LLM-mediated discovery.

For Profile A readers, the visual-deliverable solopreneurs, this product is a real time-saver and worth the $24.99. For Profile B readers, the hybrid researcher-builders, the answer depends on which side of your week is the bottleneck. For Profile C readers, the thinking-first users, this is the wrong tool. ChatGPT Plus or ChatGPT Pro or Claude Pro will serve you better.

A small note on what I personally decided. I ran the 110-minute test as a paid ChatGPT Pro $200 subscriber. After the test, I did not subscribe to Genspark Plus. My weekly bottleneck is reasoning, not artifact packaging. That is not a verdict on Genspark. It is a verdict on what I happened to need on the day of the test. If my work shifts toward shipping more visual deliverables later, I will reconsider. For now, ChatGPT Pro Deep Research is the tool I keep coming back to, and the $24.99 went somewhere else.

That is the entire honest answer. The product is real. The marketing is loud. The default privacy setting is the thing I would change first if I did subscribe. The Cloud Computer wipe is the thing I would plan exit around. The literature on MoA is the thing I would not let any review, including this one, settle for me.

Read the literature. Read the toggle. Read your own week. Then decide.

Pricing verified on May 2, 2026. AI data retention default state verified on May 2, 2026 by direct screenshot of the Account settings page. The screenshot is on file. If Genspark changes the default state in a future product update, this review will be updated and the change date noted in this footer.