Last updated: April 17, 2026

On April 4, 2026, Anthropic shut the door on one of the most popular ways developers used Claude. If you build with AI tools, the implications go far beyond one product.

Anthropic cut OpenClaw and all third-party tools from Claude Pro/Max subscription limits, effective April 4, 2026. This is not a pricing tweak. It is the moment the AI industry admitted that flat-rate subscriptions and autonomous agents cannot coexist. Every builder paying $20/month for “unlimited” AI access should understand what just shifted, and why it will not shift back.


What Happened

At 4:14 PM Pacific on April 3, Boris Cherny, Anthropic’s Head of Claude Code, posted a single announcement on X: starting noon the next day, Claude subscriptions would no longer cover usage through third-party tools like OpenClaw.

The post collected 2.7 million views, over 6,400 likes, and more than 1,000 quote posts in under 24 hours.

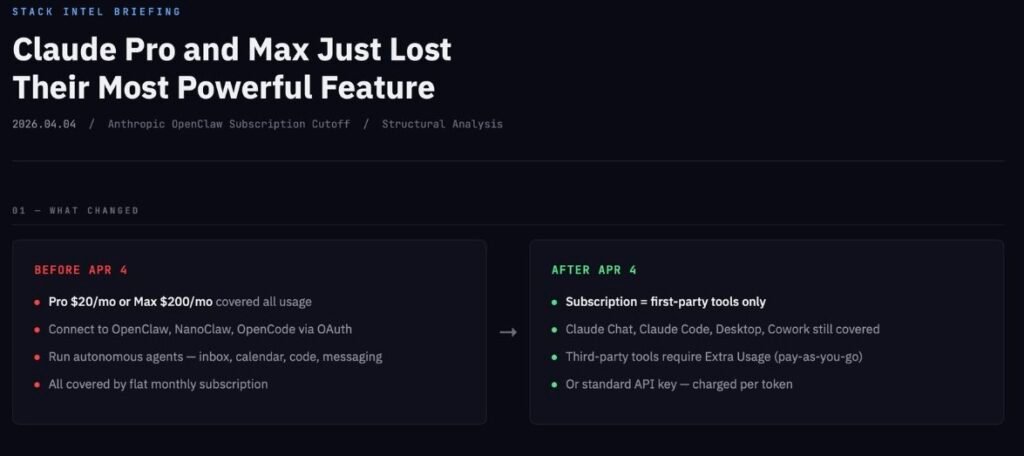

Here is what changed in practical terms. Before April 4, anyone with a Claude Pro ($20/month) or Max ($100–$200/month) subscription could connect their account to OpenClaw, an open-source AI agent framework with over 300,000 GitHub stars and 3.2 million monthly active users. OpenClaw would then use the subscriber’s Claude access to run autonomous workflows: clearing inboxes, managing calendars, writing and debugging code, operating across messaging platforms. All covered by the flat monthly fee.

After April 4, that same usage requires either Anthropic’s new “Extra Usage” pay-as-you-go billing (with pre-purchased bundles available at up to 30% off) or a standard API key charged per token. Anthropic offered a transition credit equal to each user’s monthly plan price, claimable until April 17.

The subscription itself still works for Claude’s own products: the chat interface, Claude Code, Claude Desktop, and Claude Cowork. The line Anthropic drew is between first-party and third-party access.

The Timeline That Got Us Here

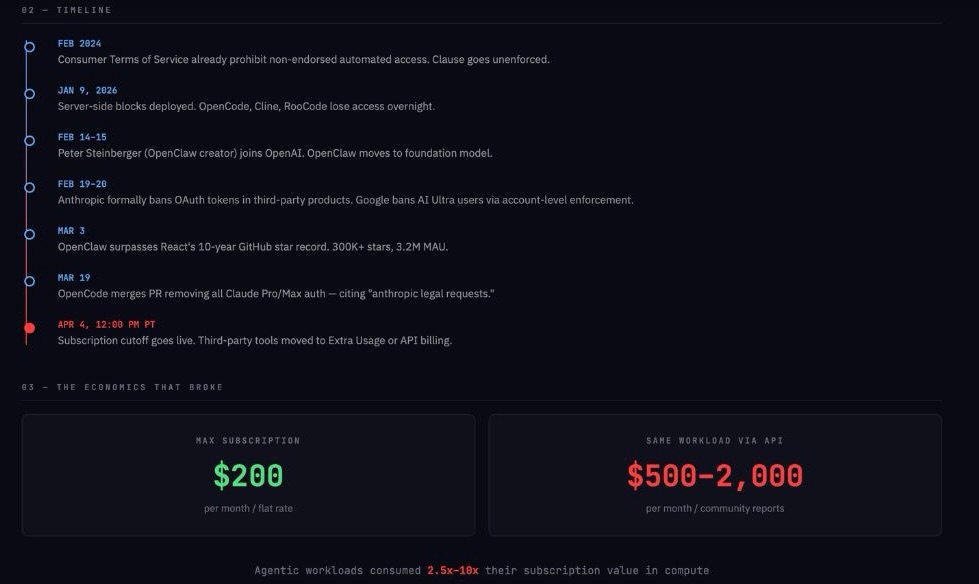

This did not happen overnight. The tension between Anthropic and third-party tool makers built across five months.

Anthropic’s Consumer Terms of Service have prohibited automated non-endorsed access since at least February 2024. For nearly two years, that clause went largely unenforced. OpenClaw (originally called Clawdbot, later renamed after Anthropic’s trademark complaint) grew explosively during that window, surpassing React’s 10-year GitHub star record on March 3, 2026.

Then enforcement began. On January 9, 2026, Anthropic deployed server-side blocks against third-party OAuth usage. Tools like OpenCode, Cline, and RooCode lost access overnight, receiving errors that their credentials were “only authorized for use with Claude Code.” Anthropic engineer Thariq Shihipar confirmed the company had “tightened safeguards against spoofing the Claude Code harness.”

In mid-February, Peter Steinberger, OpenClaw’s creator, joined OpenAI. Sam Altman announced that OpenClaw would become a foundation. Days later, Anthropic formally updated its legal compliance page to explicitly ban OAuth tokens from Free, Pro, or Max accounts in any third-party product.

On March 19, OpenCode merged a pull request removing all Claude Pro/Max authentication, citing “anthropic legal requests.”

On March 26, Anthropic tightened peak-hour session limits, affecting roughly 7% of Pro subscribers during business hours. (This was a separate capacity management move, not directly related to the OpenClaw cutoff, though the two are frequently conflated in coverage.)

The April 3 post from Cherny made the final step explicit.

Why It Happened: The Economics That Broke

The surface explanation is capacity. Cherny wrote that subscriptions “weren’t built for the usage patterns of these third-party tools” and that Anthropic was “prioritizing our customers using our products and API.”

The structural explanation runs deeper. It comes down to a fundamental mismatch between two types of AI usage that look similar on the surface but behave very differently underneath.

When a human uses Claude through the chat interface, the interaction follows a predictable rhythm. You type a question, wait for a response, read it, think, and type again. This generates a few thousand tokens per minute at most. Prompt caching works efficiently because sessions are structured and sequential. A $20 monthly subscription absorbs this comfortably.

When an agent like OpenClaw uses Claude, the pattern is entirely different. The agent reads an entire codebase, generates a plan, writes files, evaluates errors, and loops again, potentially consuming millions of tokens in minutes. Sessions are long-lived and bursty. Prompt cache hit rates drop because the tool is not optimized for Anthropic’s internal caching architecture.

The pricing math exposes the gap. Claude’s API rates for Sonnet 4.6 run $3 per million input tokens and $15 per million output tokens. Opus 4.6 costs $5 per million input tokens and $25 per million output tokens. Community reports from developers who switched from Max subscriptions to API billing after the cutoff describe paying $500 to $2,000 per month for the same workloads that previously fit inside a $200 plan. One analysis estimated that aggressive agentic deployments could consume $1,000 or more in API-equivalent compute per month.
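To make the gap concrete, here is a minimal sketch of the arithmetic in Python. The per-million-token rates are the ones quoted above. The monthly token volumes are hypothetical, chosen only for illustration, and the calculation ignores prompt-caching discounts and Extra Usage bundle pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    """USD per million tokens, as quoted in this article."""
    input_per_m: float
    output_per_m: float


RATES = {
    "sonnet-4.6": Rate(input_per_m=3.0, output_per_m=15.0),
    "opus-4.6": Rate(input_per_m=5.0, output_per_m=25.0),
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the API bill in USD for one month of usage, with no caching discount."""
    rate = RATES[model]
    total = input_tokens * rate.input_per_m + output_tokens * rate.output_per_m
    return total / 1_000_000


# Hypothetical agent workload (an assumption, not measured data):
# 200M input tokens and 20M output tokens per month.
INPUT_TOKENS = 200_000_000
OUTPUT_TOKENS = 20_000_000

sonnet_cost = monthly_cost("sonnet-4.6", INPUT_TOKENS, OUTPUT_TOKENS)
opus_cost = monthly_cost("opus-4.6", INPUT_TOKENS, OUTPUT_TOKENS)

print(f"Sonnet 4.6: ${sonnet_cost:,.2f}")  # 200 * 3 + 20 * 15 = 900
print(f"Opus 4.6:   ${opus_cost:,.2f}")    # 200 * 5 + 20 * 25 = 1500
```

Under those assumed volumes, the same workload lands at $900 on Sonnet and $1,500 on Opus, both inside the $500 to $2,000 range that developers reported and several times the price of a $200 Max plan.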

Anthropic even attempted to bridge the gap. Cherny noted he had personally submitted pull requests to improve OpenClaw’s prompt cache hit rate. But a generalized third-party harness, by design, cannot match the efficiency of a tool built from the ground up around a specific model’s caching architecture.

The blunt version: Anthropic sold a subscription seat. A significant number of users were consuming it like a wholesale compute service. That delta was never going to survive contact with scale.

The Platform Pattern

This is not the first time a technology platform has opened its doors to third-party developers, watched them find product-market fit, and then redrawn the boundaries.

Twitter’s 2023 API pricing changes wiped out the entire third-party client ecosystem. Tweetbot, Twitterrific, and dozens of other apps that had defined the early Twitter experience vanished within weeks. The official justification was preventing data scraping and fighting bot spam. The strategic effect was consolidating users onto Twitter’s own apps, where it controlled the ad experience.

Reddit’s 2023 API changes followed a similar arc. The stated reason was covering server costs and preventing free AI training data extraction. The practical result was the death of Apollo, the most popular third-party Reddit client, and a wave of subreddit blackouts that fractured community trust even as Reddit moved toward its IPO.

The pattern is consistent. Platforms use third-party developers to discover use cases and train user behavior. Once those use cases are validated, the platform either replicates the functionality internally or prices out the external tools. The industry term for the first move is “Sherlocking,” after Apple’s Sherlock search tool, which in 2002 absorbed the features of the popular third-party app Watson.

Anthropic’s situation follows this template, with one added element. Steinberger, the developer who proved the demand for agentic interfaces on top of Claude, joined Anthropic’s primary competitor weeks before the final cutoff. Whether the timing was retaliatory or coincidental, the optics reinforced the narrative that Anthropic was closing a door it had allowed to stay open only while it was useful.

The Competitive Landscape Right Now

Anthropic is not acting in isolation. Its two largest competitors have taken notably different positions.

OpenAI moved in the opposite direction. Its Codex product is distributed as open source, and the company allows subscription plans to be used through third-party harnesses. After Anthropic’s January crackdown, OpenAI’s Thibault Sottiaux publicly endorsed that usage. Around the time of the April 4 cutoff, OpenAI doubled Codex usage limits for subscribers. The competitive positioning is unmistakable.

Google went further than Anthropic, and in a more punitive direction. In late February, Google restricted AI Ultra ($249.99/month) subscribers who had accessed Gemini through OpenClaw’s OAuth flow. Unlike Anthropic’s approach, Google’s enforcement operated at the account level, meaning a flagged AI subscription could cascade and block Gmail, Workspace, and other Google services. Google later introduced a self-service reinstatement process, but the episode demonstrated the risks of account-level dependency on a single provider.

The result is a three-way split. OpenAI is courting displaced developers. Anthropic is drawing a clear line between consumer subscriptions and production agent workloads. Google is signaling that unauthorized third-party access carries the most severe consequences of all.

What This Means for You

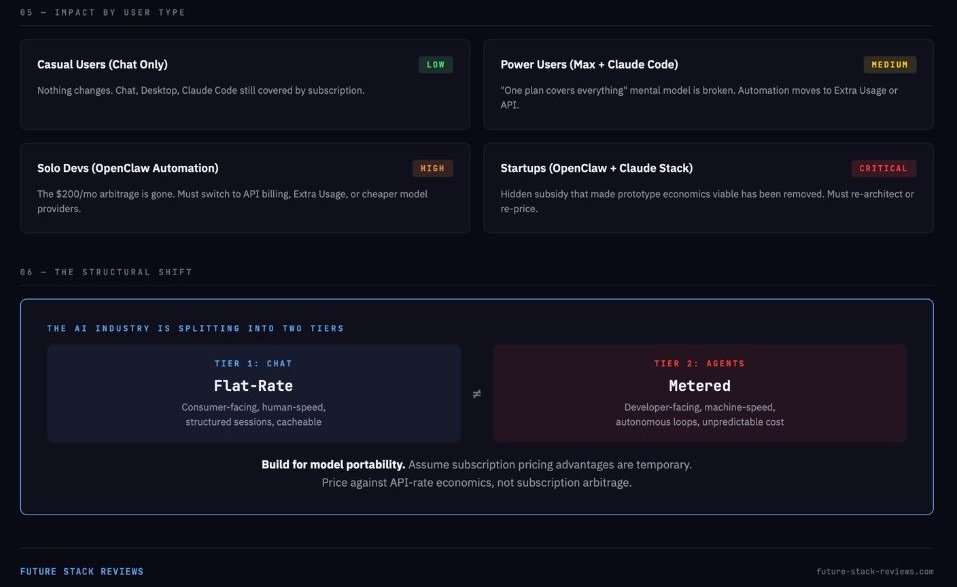

The impact varies sharply depending on how you use AI tools.

If you use Claude through the chat interface, desktop app, or Claude Code for interactive work, nothing changes today. Your subscription covers what it covered yesterday. But one thing did change this month: Claude Code now runs primarily on Claude Opus 4.7, which introduced silent default changes to thinking content, adaptive thinking, and tool use behavior. Our full Opus 4.7 evidence review documents what quietly shifted under the “same price” marketing, and it is worth reading alongside the subscription changes above.

If you are a solo developer who ran OpenClaw on a Max subscription for personal automation (email, calendar, messaging workflows), the economics just changed significantly. The arbitrage that let you run a quasi-autonomous assistant for $200/month is gone. Your options are Extra Usage bundles, API key billing, or switching to a different model provider.

Small teams and startups that built workflows around OpenClaw plus Claude face the most severe impact. The hidden subsidy that made prototype economics look viable has been removed. The choice now is to formalize on Anthropic’s API or Enterprise tier at real unit costs, re-architect around cheaper providers, or adopt a hybrid approach using Claude for the hardest tasks and open-weight models for background automation.

Enterprise buyers are the least affected. Serious production deployments were already moving toward usage-billed plans, spend controls, and admin oversight. Anthropic’s Enterprise plan bills every token at standard API rates with admin-set spend limits. This change mostly reinforces what enterprise procurement teams already understood: consumer subscriptions are not production infrastructure.

The Alternatives Are Real, but Not Free

The developer response on X was immediate. Migration signals point toward OpenAI Codex (which supports one-command switching from OpenClaw’s CLI), Qwen 3 Coder, Kimi K2.5, DeepSeek V3/R1, and local deployments using Llama 4 or Mistral through Ollama.

The price differences are substantial. Kimi K2.5 lists input pricing at $0.60 per million tokens for cache misses and $0.10 for cache hits, with output at $3.00 per million. DeepSeek’s reasoning model launched at $0.55 per million input tokens and $2.19 per million output tokens. Both are dramatically cheaper than Claude’s Sonnet at $3/$15 or Opus at $5/$25 per million input/output tokens.
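Because Kimi K2.5 prices cache hits and cache misses differently, the effective input price depends on how well an agent's prompts cache. The sketch below blends the two listed prices by cache hit rate. The workload (200M input and 20M output tokens per month) and the hit rates are hypothetical assumptions, not measurements.

```python
# Kimi K2.5 list prices quoted above, in USD per million tokens.
KIMI_INPUT_MISS = 0.60
KIMI_INPUT_HIT = 0.10
KIMI_OUTPUT = 3.00


def blended_input_price(cache_hit_rate: float) -> float:
    """Average input price per million tokens for a given cache hit rate (0.0 to 1.0)."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0.0 and 1.0")
    return cache_hit_rate * KIMI_INPUT_HIT + (1.0 - cache_hit_rate) * KIMI_INPUT_MISS


def monthly_bill(input_millions: float, output_millions: float, cache_hit_rate: float) -> float:
    """Monthly cost in USD; token volumes are given in millions of tokens."""
    return (
        input_millions * blended_input_price(cache_hit_rate)
        + output_millions * KIMI_OUTPUT
    )


# Hypothetical workload: 200M input tokens, 20M output tokens per month.
for hit_rate in (0.0, 0.5, 0.9):
    print(f"cache hit rate {hit_rate:.0%}: ${monthly_bill(200, 20, hit_rate):,.2f}")
```

For this assumed workload the bill falls from roughly $180 with no cache hits to roughly $90 at a 90% hit rate, so cache behavior shapes the bill even on a low-cost provider.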

But cost is not the only variable. For coding-heavy agentic workflows, Qwen 3 Coder (480B parameters, 35B active) represents the most credible open-weight challenger, with its developers claiming performance comparable to Claude Sonnet. Kimi K2.5 offers the lowest-friction migration path because Moonshot explicitly documents OpenClaw integration. DeepSeek excels on price-performance for reasoning tasks but is less predictable in long-running tool loops.

Self-hosting remains an option in theory, but the social media narrative often understates the real cost. Models like DeepSeek V3 (671B total, 37B active parameters) and Llama 4 Maverick (400B total, 17B active) are massive. Running Claude-class agent behavior locally requires serious hardware, meaningful engineering time for prompt tuning and error handling, and a tolerance for quality degradation. The viral “$15/month rebuild” stories tend to leave out at least one of three hidden costs: reduced output quality, operator labor, or infrastructure spending.

The most realistic path for most builders is a split stack: Claude or another premium model for high-stakes tasks, cheaper or open-weight models for routine automation, and careful monitoring of where each model’s reliability boundaries actually fall.
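One way to picture a split stack is a small router that sends high-stakes tasks to the premium model, defaults routine work to a budget model, and escalates a task type once the budget model's observed success rate falls below a threshold. This is a sketch only: the class name, the thresholds, and the task labels are all hypothetical, and how "success" is measured is left to the builder.

```python
from dataclasses import dataclass, field


@dataclass
class SplitStackRouter:
    """Route routine work to a budget model until its measured success rate drops."""

    min_success_rate: float = 0.9  # hypothetical reliability threshold
    min_samples: int = 20          # avoid judging a task kind on too little data
    # task kind -> [successes, attempts] observed on the budget tier
    budget_stats: dict[str, list[int]] = field(default_factory=dict)

    def record_budget_result(self, kind: str, success: bool) -> None:
        """Log one budget-tier outcome for a task kind."""
        stats = self.budget_stats.setdefault(kind, [0, 0])
        stats[0] += int(success)
        stats[1] += 1

    def choose_tier(self, kind: str, high_stakes: bool) -> str:
        """Return 'premium' or 'budget' for the next task of this kind."""
        if high_stakes:
            return "premium"
        successes, attempts = self.budget_stats.get(kind, (0, 0))
        enough_data = attempts >= self.min_samples
        if enough_data and successes / attempts < self.min_success_rate:
            return "premium"  # budget model has proven unreliable for this kind
        return "budget"


router = SplitStackRouter()

# Simulated history: the budget model succeeds on only half of 20 refactors.
for i in range(20):
    router.record_budget_result("multi-file-refactor", success=(i % 2 == 0))

print(router.choose_tier("multi-file-refactor", high_stakes=False))
print(router.choose_tier("inbox-triage", high_stakes=False))
print(router.choose_tier("inbox-triage", high_stakes=True))
```

With this simulated history the router escalates refactors to the premium tier, keeps inbox triage on the budget tier, and sends anything flagged high-stakes to premium regardless of history. Keeping the statistics per task type is what lets the reliability boundary be measured rather than guessed.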

The Bigger Picture

Zoom out from the specifics of OpenClaw and Anthropic, and the structural signal is clear.

The AI industry is splitting into two distinct pricing tiers. Tier one is the chat interface: flat-rate, consumer-facing, tightly controlled, optimized for human-speed interaction. Tier two is agent infrastructure: usage-based, developer-facing, built for machine-speed autonomous loops where token consumption is unpredictable and potentially enormous.

This split is not unique to Anthropic. It follows from the cost structure of large language models: compute is a variable cost, and autonomous agents consume that compute at rates that no flat subscription can sustainably absorb. Every AI provider offering “unlimited” access to models that can also power agents will eventually face the same math Anthropic faced with OpenClaw.

For builders, the takeaway is architectural. If you are designing systems that depend on AI agent capabilities, build for model portability from the start. Assume that any subscription-based pricing advantage you find today is temporary. Price your products against API-rate economics, not subscription arbitrage. The companies that treat this as a permanent shift, rather than a temporary inconvenience, will be the ones still standing when the next provider draws the same line.
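In code, model portability usually means keeping application logic behind a thin interface so that a provider can be swapped through configuration. The sketch below uses Python's `typing.Protocol` with two stand-in classes. The stubs are hypothetical placeholders, not real SDK clients, and a real adapter would wrap whichever provider API a team actually uses.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface the application is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class PremiumStub:
    """Stand-in for an adapter around a premium hosted model (hypothetical)."""

    def complete(self, prompt: str) -> str:
        return f"[premium] {prompt}"


class OpenWeightStub:
    """Stand-in for an adapter around a self-hosted open-weight model (hypothetical)."""

    def complete(self, prompt: str) -> str:
        return f"[open-weight] {prompt}"


# Providers are selected by name, so switching is a configuration change.
PROVIDERS: dict[str, TextModel] = {
    "premium": PremiumStub(),
    "open-weight": OpenWeightStub(),
}


def draft_reply(model: TextModel, email_body: str) -> str:
    """Application logic: knows nothing about which provider is behind `model`."""
    return model.complete(f"Draft a short reply to: {email_body}")


def run(provider_name: str, email_body: str) -> str:
    return draft_reply(PROVIDERS[provider_name], email_body)


print(run("premium", "Can we move the call to Friday?"))
print(run("open-weight", "Can we move the call to Friday?"))
```

The design choice is that `draft_reply` never imports a vendor SDK. When a provider changes its terms or pricing, only the adapter and the `PROVIDERS` entry change.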

Six months from now, the most likely outcome is not that Anthropic reverses course. It is that the policy sticks, the backlash fades, multi-model architectures become standard practice, and the developers who built on subscription arbitrage either professionalize their billing or move on. The providers who come out ahead will be the ones who made that transition easiest, not the ones who pretended the math would never catch up.