Last updated: April 14, 2026

DeepSeek matches frontier AI models on benchmarks and costs 9x less than OpenAI. Every dev blog wants you to switch. That advice has an expiration date, and a risk profile nobody’s printing in bold.

TL;DR — The Bottom Line

DeepSeek is the most capable AI model you should never depend on. V3.2 trades blows with GPT-5.4 on reasoning benchmarks. R1 beats OpenAI’s o1 on math. The API costs $0.28 per million input tokens — compared to $2.50 for GPT-5.4.

But your data goes to servers in China, under laws that require the company to hand it over to the government on request. The platform just had its longest outage since launch. And anything the Chinese Communist Party finds inconvenient gets censored mid-generation.

Use DeepSeek to cut your API bills on bulk processing where nothing sensitive touches the wire. Do not make it your primary AI vendor. Do not feed it proprietary data. And do not confuse a price war weapon with a long-term technology partner.

What DeepSeek Actually Is — And Who’s Behind It

DeepSeek isn’t a startup. It’s a side project of a Chinese hedge fund with $10 billion in assets.

The company was founded in July 2023 by Liang Wenfeng, who also runs Zhejiang High-Flyer Asset Management — a quantitative trading firm that posted 56.6% average returns across its funds in 2025. High-Flyer is the sole owner. DeepSeek has publicly declined all outside investment, and the widely circulated “$520M Series C” figure has no verifiable primary source.

The team is small, roughly 160 to 200 employees. Liang started stockpiling Nvidia GPUs in 2021, before US export restrictions kicked in. SemiAnalysis estimates the company’s total server capital expenditure at approximately $1.6 billion, with about $944 million in associated operating costs. The hardware inventory reportedly includes around 10,000 H800 and 10,000 H100 GPUs.

The company’s stated goal is AGI research, not commercial dominance. According to the Financial Times, Liang is notoriously difficult to reach and shows minimal interest in outside investors or enterprise sales.

The Government Question

This gets uncomfortable, and it should.

Data analytics firm Exiger reported that DeepSeek researchers have worked on 396 PLA-funded AI research projects and maintain affiliations with Chinese government talent recruitment programs. The New York Times documented that dozens of DeepSeek researchers have or had affiliations with People’s Liberation Army laboratories.

A senior US State Department official told Reuters in June 2025 — on the record — that DeepSeek is referenced more than 150 times in PLA procurement records and “has willingly provided and will likely continue to provide support to China’s military and intelligence operations.”

Cybersecurity firm Feroot Security discovered hidden code in DeepSeek’s web platform linking to China Mobile’s authentication registry.

Carnegie Endowment research found that Zhejiang provincial authorities screen investors before they meet company leadership. Some DeepSeek staff have reportedly surrendered their passports due to potential access to state secrets.

These are allegations and policy-based analyses — not confirmed bilateral agreements. But they come from named US government officials, academic researchers, and investigative journalists, not anonymous forum posts.

The Performance Reality Check

DeepSeek’s benchmark numbers are not hype. They’re real, and they’re the reason this review exists instead of a dismissal.

The Raw Numbers (March 2026)

| Benchmark | DeepSeek R1 | OpenAI o1 | GPT-4o | Claude 3.5 Sonnet |
|---|---|---|---|---|
| MMLU | 90.8% | 91.8% | 87.2% | 88.3% |
| MMLU-Pro | 84.0% | — | 72.6% | 78.0% |
| AIME 2024 | 79.8% | 79.2% | — | — |
| MATH-500 | 97.3% | 96.4% | — | — |
| SWE-bench Verified | 49.2% | 48.9% | — | — |

Source: DeepSeek-R1 official paper (arXiv 2501.12948)

The May 2025 update (R1-0528) pushed AIME 2024 accuracy to 91.4% and SWE-bench to 57.6%. By December 2025, V3.2-Speciale hit 96.0% on AIME 2025, beating GPT-5 High’s 94.6% and edging out Gemini 3 Pro’s 95.0%. It scored 35 out of 42 on the IMO 2025 benchmark, a gold-medal equivalent.

On math, competitive programming, and code generation, DeepSeek is at or near the top of the field. That is not in dispute.

Where the Cracks Show

Benchmarks measure capability under controlled conditions. Production use is not controlled conditions.

In specialized domains, the cracks run deep. A 2025 preprint tested R1’s ability to retrieve biomedical citations and measured a hallucination rate of 91.43%; ChatGPT-4o scored 39.14% on the same test. That figure is domain-specific, not a universal hallucination rate, but it shows that R1’s reasoning does not generalize evenly across knowledge domains. Vectara’s HHEM-based analysis found a similar pattern in finance: R1 hallucinated at approximately 14%, compared to roughly 1.5% for GPT-4o.

Then there’s speed. An arXiv study recorded R1 taking 584 seconds for a numerical PDE task. OpenAI’s o3-mini-high finished the same task in 74 seconds. Developers on X consistently report multi-minute waits on complex queries when R1’s chain-of-thought kicks in. And when the output finally arrives, multiple developer comparisons note it’s dense and poorly structured next to Claude or GPT-4o. One analysis scored R1 at 45.6 on readability while maintaining 87.6% accuracy on MMLU-Pro. High on substance. Low on usability.

The pattern from real developer feedback: DeepSeek wins on raw capability-per-dollar. It loses on output polish, response time, and consistency outside its strongest domains.

The Price That Broke the Industry

This is the table that made OpenAI sweat.

API Pricing (March 2026)

| Provider | Model | Input / 1M Tokens | Output / 1M Tokens |
|---|---|---|---|
| DeepSeek | V3.2 (Chat) | $0.28 | $0.42 |
| DeepSeek | R1 | $0.55 | $2.19 |
| OpenAI | GPT-5.4 | $2.50 | $15.00 |
| OpenAI | o3 | $2.00 | $8.00 |
| Anthropic | Claude Sonnet 4.6 | $3.00 | $15.00 |
| Anthropic | Claude Opus 4.6 | $5.00 | $25.00 |
| Google | Gemini 2.5 Pro | $1.25 | $10.00 |

V3.2’s input pricing is about 9x cheaper than GPT-5.4, and its output pricing is nearly 36x cheaper ($15.00 versus $0.42). With automatic context caching (enabled by default on V3.2), cache hits drop to $0.028 per million tokens, a 90% discount on repeated context.

For the reasoning model comparison: R1 at $0.55/$2.19 versus OpenAI’s o3 at $2.00/$8.00. That’s a 3.6x gap on both input and output.
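
These multiples can be reproduced directly from the pricing table. The sketch below does that, and also shows how a job's cost and a cache-blended input price would be computed. The prices are the ones listed in the table; the job size (100M input tokens, 20M output tokens) and the 50% cache hit rate are made-up illustration values, not measurements.

```python
# Prices in USD per 1M tokens, copied from the pricing table.
PRICES = {
    "deepseek-v3.2": {"input": 0.28, "output": 0.42},
    "deepseek-r1": {"input": 0.55, "output": 2.19},
    "gpt-5.4": {"input": 2.50, "output": 15.00},
    "o3": {"input": 2.00, "output": 8.00},
}
CACHE_HIT_INPUT = 0.028  # V3.2 input price per 1M tokens on a cache hit


def price_ratio(expensive: str, cheap: str, kind: str) -> float:
    """How many times more `expensive` charges than `cheap` for `kind` tokens."""
    return PRICES[expensive][kind] / PRICES[cheap][kind]


def job_cost(model: str, input_m: float, output_m: float) -> float:
    """Cost in USD for a job whose size is given in millions of tokens."""
    p = PRICES[model]
    return input_m * p["input"] + output_m * p["output"]


def blended_input_price(hit_rate: float) -> float:
    """Effective V3.2 input price when `hit_rate` of input tokens hit the cache."""
    miss_price = PRICES["deepseek-v3.2"]["input"]
    return hit_rate * CACHE_HIT_INPUT + (1 - hit_rate) * miss_price


for kind in ("input", "output"):
    print(f"GPT-5.4 vs V3.2 ({kind}): {price_ratio('gpt-5.4', 'deepseek-v3.2', kind):.1f}x")
    print(f"o3 vs R1 ({kind}): {price_ratio('o3', 'deepseek-r1', kind):.1f}x")

# Hypothetical monthly job: 100M input tokens, 20M output tokens.
for model in ("deepseek-v3.2", "gpt-5.4"):
    print(f"{model}: ${job_cost(model, 100, 20):,.2f}")

print(f"V3.2 input price at 50% cache hits: ${blended_input_price(0.5):.3f} per 1M")
```

Because output tokens carry the larger multiple, the savings on a real workload depend heavily on its input-to-output mix.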

Why the Gap Won’t Close

Other reviewers frame DeepSeek’s pricing as a temporary loss-leader strategy. The architecture tells a different story.

DeepSeek’s models use a Mixture of Experts design — 256 routed experts per layer plus one shared expert. Each token only activates 37 billion of the total 671 billion parameters. Combined with Multi-Head Latent Attention (which compresses key-value caching), FP8 mixed-precision training, and the V3.2 addition of Sparse Attention (which halves inference cost), DeepSeek’s cost-per-inference is structurally lower than dense-model competitors.
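
To make sparse activation concrete, here is a toy top-k router in plain Python. It sketches the general Mixture of Experts idea, not DeepSeek’s implementation: the scores are random, the expert count is shrunk to 16, softmax gating is used for simplicity, and `top_k=2` is an arbitrary illustration value. Only the 37B and 671B figures come from the paragraph above.

```python
import math
import random

TOTAL_PARAMS_B = 671   # total parameters in billions, from the text
ACTIVE_PARAMS_B = 37   # parameters activated per token in billions, from the text


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route(scores, top_k):
    """Pick the top_k experts for one token and renormalize their gate weights."""
    probs = softmax(scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}


rng = random.Random(0)
num_experts = 16  # toy size; the text cites 256 routed experts per layer
scores = [rng.gauss(0, 1) for _ in range(num_experts)]
gates = route(scores, top_k=2)

# Only the chosen experts run for this token; the rest stay idle.
print("experts used for this token:", sorted(gates))
print(f"active share of parameters: {ACTIVE_PARAMS_B / TOTAL_PARAMS_B:.1%}")
```

The cost argument follows from the last line: per-token compute scales with the active parameters, not the total.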

This isn’t a pricing decision. It’s a different cost structure. Even as OpenAI and Anthropic cut prices (which they will keep doing), DeepSeek’s unit economics remain fundamentally leaner.

The $5.6 Million Training Cost Myth

DeepSeek’s V3 technical report claimed total training expenditures of $5.576 million. Marc Andreessen called it “AI’s Sputnik moment.”

What that number actually covers: GPU rental cost for a single official training run. What it excludes: all prior research, ablation experiments, architecture search, data collection, synthetic data generation, and salaries.

SemiAnalysis estimated total server CapEx at $1.6 billion with $944 million in operating costs. DeepSeek later published a Nature paper claiming R1’s training cost was $294,000 using 512 H800 chips, again excluding prior R&D.
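
The headline figure is simple multiplication. The V3 technical report arrives at it by pricing roughly 2.788 million H800 GPU-hours at an assumed rental rate of $2 per GPU-hour; both inputs are taken from that report, not from the sources cited in this article. A quick sketch of the arithmetic, set against the SemiAnalysis CapEx estimate:

```python
GPU_HOURS = 2_788_000         # H800 GPU-hours reported for the final V3 training run
RENTAL_USD_PER_HOUR = 2.00    # rental rate assumed in the V3 technical report
SERVER_CAPEX = 1_600_000_000  # SemiAnalysis server CapEx estimate, from the text

headline_cost = GPU_HOURS * RENTAL_USD_PER_HOUR
capex_share = headline_cost / SERVER_CAPEX

print(f"headline training cost: ${headline_cost:,.0f}")
print(f"share of estimated server CapEx: {capex_share:.2%}")
```

The two numbers measure different things (one rented run versus owned hardware), so the ratio is a sense of scale, not a correction.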

The efficiency gains are real. The headline number is marketing.

“Open Source” — The License Is Real, Your Hardware Isn’t

This is the section where the narrative splits from reality.

DeepSeek’s main models (V3, R1, V3.1, V3.2) are released under the MIT license — one of the most permissive in software. You can download the weights, fine-tune on your own data, distill smaller models, build commercial products, and sell API access to others. Distillation rights are explicitly allowed, which is notable because some competitors restrict this.

Compare that to Meta’s Llama, which uses a community license that gates commercial use above 700 million monthly active users. On paper, DeepSeek’s open-source offering is more permissive than the “open-source” model everyone compares it to.

The Catch Nobody Talks About

The full V3.2 model is approximately 685 billion parameters in a Mixture of Experts architecture. Running it requires serious GPU infrastructure — we’re talking multiple high-end GPUs with substantial VRAM.
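
A back-of-the-envelope estimate shows why. The sketch below counts memory for the weights alone and ignores KV cache, activations, and framework overhead, so real deployments need more than these minimums. The 80 GB accelerator size and the precision options are illustrative assumptions, not DeepSeek’s published requirements.

```python
import math

PARAMS_BILLION = 685  # approximate parameter count, from the text
GPU_MEMORY_GB = 80    # assumption: one 80 GB data-center GPU

# Assumed storage width per parameter for each precision.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "4-bit": 0.5}


def weight_memory_gb(params_billion: float, bytes_per_param: float) -> float:
    """Memory for weights only: 1B parameters at 1 byte each is about 1 GB."""
    return params_billion * bytes_per_param


def min_gpus(params_billion: float, bytes_per_param: float) -> int:
    """Smallest GPU count whose combined memory holds the weights."""
    return math.ceil(weight_memory_gb(params_billion, bytes_per_param) / GPU_MEMORY_GB)


for name, width in BYTES_PER_PARAM.items():
    gb = weight_memory_gb(PARAMS_BILLION, width)
    print(f"{name:>5}: {gb:,.0f} GB of weights, at least {min_gpus(PARAMS_BILLION, width)} GPUs")
```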

A self-hosting community does exist. Developers are running quantized smaller models (the 8B distillation gets about 72 tokens per second on consumer hardware) through tools like Ollama, LM Studio, and vLLM. On X, a vocal group of privacy-conscious developers specifically self-host DeepSeek to avoid sending data to China.
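
For readers who want to try the self-hosted route, here is a minimal sketch of querying a distilled model through a local Ollama server. It assumes Ollama is running on its default port (11434) and that a DeepSeek distillation has already been pulled. The model tag `deepseek-r1:8b` is an assumption, so substitute whatever `ollama list` shows on your machine.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "deepseek-r1:8b"  # assumption: replace with a tag you have pulled


def build_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming generate request aimed at the local server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text.

    Raises OSError (for example, connection refused) if Ollama is not running.
    """
    with urllib.request.urlopen(build_request(prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

Calling `generate("Explain the MIT license in one sentence.")` would then return the model’s text. Because the request targets `localhost`, the prompt never leaves your machine.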

But the frontier 671B model? That’s a different conversation. If you don’t have the GPU clusters, you’re calling the API. And the API runs on servers in China.

For the vast majority of developers who interact with DeepSeek through the API, “open source” means “the code is inspectable and the license is clean.” It doesn’t mean “your data stays on your machine.” The license is excellent. The practical reality is that most users will never self-host the model that actually competes with GPT-5.

The China Problem

Skip this section at your own risk.

What the Privacy Policy Actually Says

DeepSeek’s privacy policy (hosted at cdn.deepseek.com) states in plain language:

Data is collected, processed, and stored in the People’s Republic of China. Collection scope includes all chat prompts, conversation history, user profile data, device information, IP addresses, uploaded files, and keystroke patterns. That last one is worth pausing on.

No major Western AI provider explicitly collects keystroke-level data.

The retention policy: “as long as necessary to provide our Services.” No specific deletion timeline. No mention of encryption at rest or in transit at the time of the most recent policy update (December 2025).

Three Laws You Cannot Engineer Around

DeepSeek operates under Chinese law. Three statutes matter:

The 2017 National Intelligence Law requires all Chinese companies to assist government intelligence operations. Companies cannot legally refuse. Companies cannot publicly disclose such requests.

The 2017 Cybersecurity Law requires operators to store data within China and provide it to law enforcement upon request.

The 2021 Data Security Law allows government agencies to demand data from companies operating in China. Companies cannot share data with foreign judicial or law enforcement bodies without approval.

Unlike Western cloud providers, which can and do challenge government data requests in independent courts, DeepSeek has no legal mechanism to refuse a Chinese government demand. This isn’t speculation about what might happen. It’s what the law requires.

The Wiz Incident

In January 2025, cloud security firm Wiz discovered a publicly accessible ClickHouse database at DeepSeek’s infrastructure. The database required zero authentication and exposed over one million lines of log streams, chat history in plaintext, API secret keys, and backend system details. Full SQL query execution was possible.

Wiz disclosed responsibly. DeepSeek secured the database. DeepSeek did not publicly comment on the root cause.

This happened during the same week DeepSeek’s app became the number one download on the US App Store.

Government Response — The Scoreboard

As of March 2026, at least a dozen countries have banned or restricted DeepSeek, in most cases on government devices. They include Italy (full app ban), Australia (all federal devices), Taiwan (government agencies and schools), South Korea (government ministries), the Netherlands, the Czech Republic, and Germany (app store removal requested). In the US, the Pentagon, Navy, NASA, and Congress have all banned it from their devices, and Texas issued a state-level executive order. Bipartisan federal legislation (the “No DeepSeek on Government Devices Act”) has been introduced in both chambers of Congress.

The EU confirmed that DeepSeek must comply with AI Act obligations and GDPR Chapter V requirements for data transfers to China.

These are not theoretical risks. These are governments with intelligence briefings making operational security decisions.

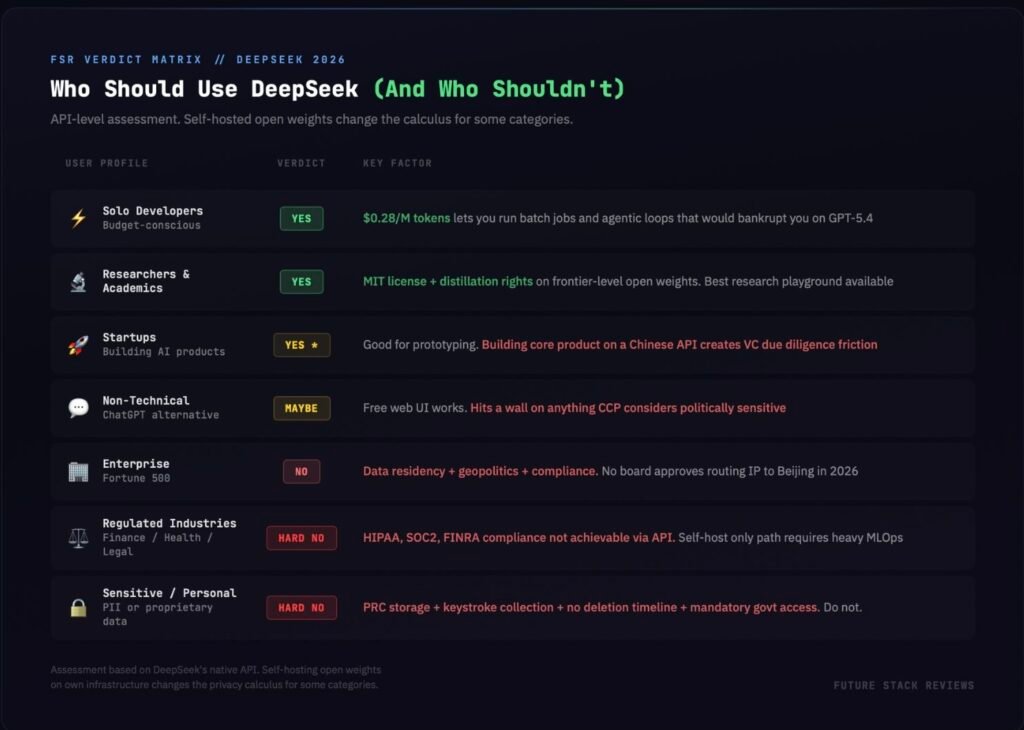

Who Should Use DeepSeek (And Who Absolutely Shouldn’t)

Solo Developers on a Budget — Yes

If you’re bootstrapping, running batch jobs, or building agentic loops that would drain your budget at GPT-5.4 pricing, DeepSeek is a legitimate tool. The gap between $0.28 and $2.50 per million input tokens compounds fast at scale. Developers on X consistently report using DeepSeek through routing services like OpenRouter for non-sensitive coding and data processing tasks.

Startups Building AI Products — Yes, With a Giant Asterisk

DeepSeek works for prototyping and non-critical backend processing. But if you’re raising venture capital, building your core product on a Chinese API will create friction in due diligence. Investors will ask. Enterprise customers will ask. Your answer needs to be better than “it’s cheap.”

Enterprise Companies — No

Data residency, geopolitical risk, and compliance requirements make this a non-starter at the API level. Board-level approval for routing corporate IP through Beijing-based servers is not happening at Fortune 500 companies in 2026’s regulatory climate. Some enterprises use DeepSeek’s existence as leverage to negotiate cheaper contracts with OpenAI and Anthropic — which is arguably its most valuable enterprise use case.

Researchers and Academics — Yes

Access to frontier-level open weights with MIT licensing and explicit distillation rights makes DeepSeek the best available playground for mechanistic interpretability, fine-tuning experiments, and architectural research. The V3.2-Speciale achieving IMO gold-level performance gives researchers a competitive model worth studying.

Non-Technical Users (ChatGPT Alternative) — Maybe

The free web interface at chat.deepseek.com works. It has web search, file upload, and a thinking mode toggle. No credit card required. But the experience hits a wall the moment you ask about anything the CCP considers sensitive — Tiananmen, Xi Jinping, Taiwan sovereignty, COVID origins. If that doesn’t bother you, it’s a functional free alternative. If it does, Claude or ChatGPT remains a less constrained daily driver.

Regulated Industries (Finance, Healthcare, Legal) — Hard No

HIPAA, SOC 2, or FINRA compliance is not achievable when data touches DeepSeek’s native API. The only legally defensible path is self-hosting the open-weight models on your own infrastructure, which requires significant MLOps capability and GPU resources that most regulated organizations don’t maintain in-house for AI inference.

Anyone Handling Sensitive or Personal Data — Hard No

The combination of PRC data storage, keystroke pattern collection, no specified deletion timeline, and legally mandated government access creates a risk profile that no privacy-conscious workflow should accept. Do not feed it PII. Do not feed it proprietary business data. Do not feed it anything you wouldn’t want a foreign government to potentially access.

The Censorship You’ll Actually Encounter

This isn’t a theoretical concern. It’s a feature of every interaction with DeepSeek on certain topics.

What Happens When You Ask

Ask about Tiananmen Square (1989): the model begins generating a response, then deletes it mid-generation and returns a canned message — “Sorry, that’s beyond my current scope. Let’s talk about something else.”

Type “Xi Jinping”: same result.

Ask about Taiwan’s sovereignty: you get PRC government talking points about the One-China principle.

Topics including Tibet, COVID-19 origins, and the Great Firewall all trigger systematic refusal. Independent testing by Promptfoo-affiliated researchers found an 85% refusal rate on politically sensitive prompts. A NewsGuard study found R1 aligned with official Chinese positions 80% of the time on China-related narratives, with approximately one-third of responses repeating outright falsehoods from Chinese state media.

The Chain-of-Thought Reveal

R1, DeepSeek’s reasoning model, accidentally exposed how the censorship works. Because R1 shows its chain-of-thought, users can watch the model reason through an accurate answer to a sensitive question, then, mid-reasoning, recognize that it needs to censor itself, delete its own reasoning trace, and output a generic deflection.

The model knows the answer and actively suppresses it. You can watch the entire process happen in real time through the visible reasoning trace.

What This Means for You

If you’re using DeepSeek for coding, math, or data processing, the censorship is largely irrelevant. You’ll never hit it.

If you’re building any product that involves user-facing content generation, research assistance, educational tools, or anything adjacent to news, politics, history, or current events — the censorship is a product-breaking limitation that cannot be patched, worked around, or system-prompted away. DeepSeek is subject to China’s content regulations as a company operating in China. This is structural, not a bug to be fixed.

What Real Developers Are Saying in 2026

The hype cycle from January 2025 didn’t die. It mutated into something more honest: pragmatic addiction.

The Pattern

Developers aren’t evangelizing DeepSeek the way they evangelized ChatGPT in 2023. They’re using it the way people use budget airlines — constantly, grudgingly, with full awareness of what they’re giving up.

OpenRouter usage data shows DeepSeek V3.2 processing 1.24 trillion tokens per week as of late March 2026, up 8% in a market that grew 26x overall. Chinese-origin models occupy four of the top usage slots. Adoption isn’t declining. It’s embedding.

The typical developer stack pattern that emerges from X and Reddit discussions: DeepSeek for bulk processing, code generation, and agentic loops. Claude or GPT for final review, customer-facing output, and anything involving sensitive data. This isn’t loyalty. It’s cost arbitrage.

The Reliability Problem

On March 30–31, 2026, DeepSeek suffered its longest continuous outage since R1’s launch — over 12 hours of downtime on the web interface. Bloomberg reported on it. Developer accounts on X called it “the first month since the release of R1 that they’ve gone down to one nine of reliability.”
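
The “one nine” remark checks out arithmetically. A quick sketch, assuming the 12-hour outage was the only downtime in the 31-day month (an assumption, since no full-month downtime total is cited here):

```python
import math

HOURS_IN_MARCH = 31 * 24  # 744 hours


def availability(downtime_hours: float, period_hours: float = HOURS_IN_MARCH) -> float:
    """Fraction of the period the service was up."""
    return 1 - downtime_hours / period_hours


def nines(avail: float) -> int:
    """Count leading nines: 0.9 -> 1, 0.99 -> 2, 0.999 -> 3."""
    if not 0 <= avail < 1:
        raise ValueError("availability must be in [0, 1)")
    # The small epsilon guards against floating-point error at exact boundaries.
    return math.floor(-math.log10(1 - avail) + 1e-9)


def downtime_budget_hours(target: float, period_hours: float = HOURS_IN_MARCH) -> float:
    """Maximum downtime that still meets an availability target."""
    return (1 - target) * period_hours


march = availability(12)
print(f"availability: {march:.2%}, nines: {nines(march)}")
print(f"two-nines budget for the month: {downtime_budget_hours(0.99):.2f} hours")
```

A single 12-hour outage exceeds the monthly downtime budget for two nines, which is why one incident was enough to drop the month to one.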

In January 2026, DeepSeek quietly tightened free tier rate limits from 60 to 10 requests per minute with no advance notice. Developer backlash on Reddit and X was immediate but ultimately ineffective — the pricing is too good for most users to leave over.
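
If you stay on the free tier, a client-side throttle avoids tripping the new limit. The sketch below is a generic sliding-window limiter, not anything DeepSeek ships. The 10-requests-per-minute default comes from the paragraph above; the class and method names are hypothetical.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most `max_calls` within any rolling `window_s` seconds."""

    def __init__(self, max_calls: int = 10, window_s: float = 60.0, clock=time.monotonic):
        self.max_calls = max_calls
        self.window_s = window_s
        self.clock = clock    # injectable so the limiter can be tested without waiting
        self.calls = deque()  # timestamps of recent calls, oldest first

    def wait_time(self) -> float:
        """Seconds until the next call is allowed (0.0 if allowed now)."""
        now = self.clock()
        while self.calls and now - self.calls[0] >= self.window_s:
            self.calls.popleft()  # drop calls that have left the window
        if len(self.calls) < self.max_calls:
            return 0.0
        return self.window_s - (now - self.calls[0])

    def acquire(self, sleep=time.sleep) -> None:
        """Block until a slot is free, then record the call."""
        delay = self.wait_time()
        if delay > 0:
            sleep(delay)
            self.wait_time()  # prune timestamps that expired while sleeping
        self.calls.append(self.clock())
```

Call `limiter.acquire()` immediately before each API request. A server-side 429 response should still be handled, since the provider’s accounting may not match the client’s clock.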

Ongoing reports through 2025 and 2026 describe latency spikes of up to five minutes per request, particularly during China business hours (UTC+8). Servers are China-based, which introduces baseline latency for users outside Asia.

Cloud hosting options (AWS, Azure, Google Cloud) now offer DeepSeek model hosting with substantially better SLA guarantees — but at higher prices that partially offset the cost advantage.

The FSR Verdict: Use the Disruption, Don’t Marry the Vendor

DeepSeek is the most important AI company you shouldn’t depend on.

It proved that frontier-level AI doesn’t require frontier-level budgets. It forced OpenAI and Anthropic into a pricing war they didn’t want. It gave researchers access to fully open weights under a permissive license. It gave bootstrapped developers a way to build AI products without burning through runway. The V3.2-Speciale beating GPT-5 High on AIME while costing a fraction of the price is not an accident; it’s an architectural achievement.

None of that changes the structural reality.

Your data goes to China. Chinese law requires the company to provide that data to the government on demand. The platform censors topics the CCP finds inconvenient. The infrastructure has reliability problems that got worse, not better, in 2026. The keystroke-level data collection exceeds what any Western AI provider does. And a dozen governments with access to classified intelligence briefings decided it’s too risky for their own devices.

The Smart Play

The developers who are using DeepSeek well in 2026 aren’t building their companies on it. They’re using it as a utility — the way you’d use a cheap power grid to run non-critical loads while keeping your critical systems on a generator you control.

Route your non-sensitive batch processing, code generation for internal tools, and high-volume agentic loops through DeepSeek. Keep your proprietary data, customer-facing content, and anything regulated on Claude, GPT, or self-hosted Llama. Build your workflows to be vendor-agnostic so you can swap routing in hours, not weeks.
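
One way to keep that split enforceable is to make routing a small, explicit policy instead of scattering vendor calls through the codebase. The sketch below is hypothetical throughout: the `Task` fields, the backend labels, and the rule itself are illustration choices, and the backends are plain callables so any real client can be swapped in.

```python
from dataclasses import dataclass
from typing import Callable, Dict

Backend = Callable[[str], str]  # takes a prompt, returns the model's text


@dataclass(frozen=True)
class Task:
    prompt: str
    sensitive: bool = False        # proprietary, regulated, or personal data
    customer_facing: bool = False  # output is shown to end users


def choose_backend(task: Task) -> str:
    """Only non-sensitive, internal work goes to the cheap bulk vendor."""
    if task.sensitive or task.customer_facing:
        return "trusted"
    return "bulk"


class Router:
    def __init__(self, backends: Dict[str, Backend]):
        missing = {"trusted", "bulk"} - backends.keys()
        if missing:
            raise ValueError(f"missing backends: {sorted(missing)}")
        self.backends = backends

    def run(self, task: Task) -> str:
        return self.backends[choose_backend(task)](task.prompt)


# Stand-in backends; in practice these would wrap real API clients.
router = Router({
    "bulk": lambda prompt: f"[bulk] {prompt}",
    "trusted": lambda prompt: f"[trusted] {prompt}",
})
print(router.run(Task("Refactor this internal script")))
print(router.run(Task("Summarize this customer contract", sensitive=True)))
```

With this shape, swapping vendors means changing one entry in the dictionary passed to `Router`, and the sensitivity rule lives in a single function that can be reviewed and tested.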

And use DeepSeek’s existence to negotiate. Every enterprise procurement team in 2026 should be walking into OpenAI and Anthropic contract renewals with DeepSeek’s pricing table on the screen. The $0.28 number is leverage even if you never send a single token to their servers.

DeepSeek is the catalyst that broke the AI pricing model. It is not the destination for your stack.