Last updated: April 23, 2026

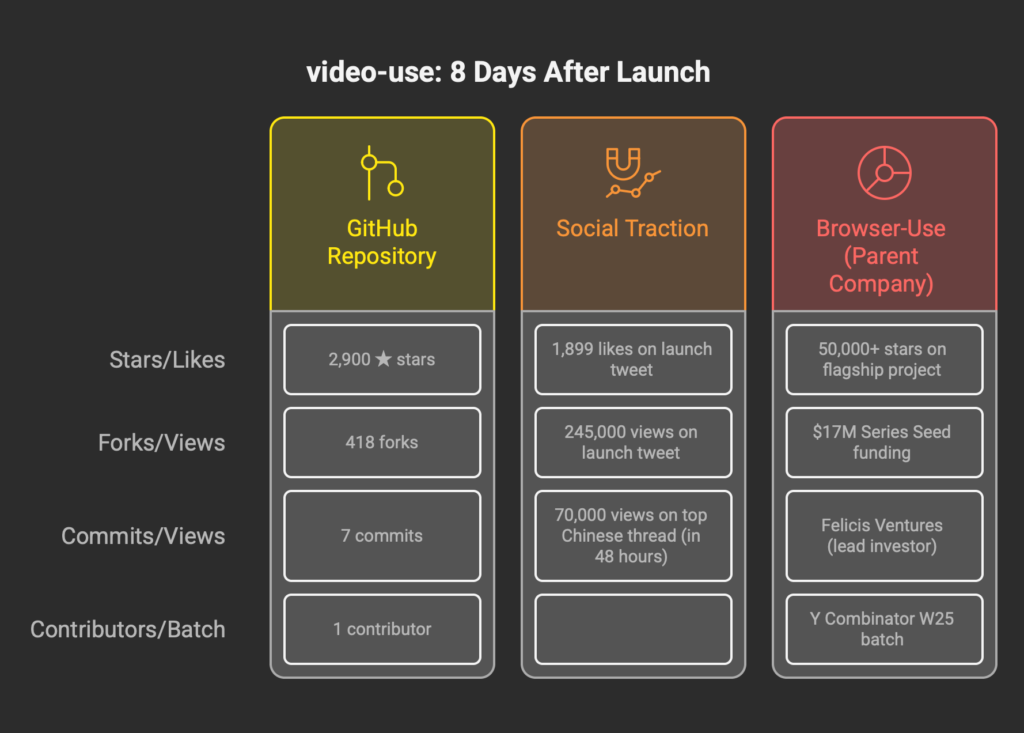

This video-use review opens with a receipt. On April 15, 2026, browser-use shipped video-use to GitHub. Eight days later the repository has 2,900 stars, 418 forks, 7 commits, and exactly one contributor. The launch post by co-founder Gregor Žunič got 1,899 likes and 245,000 views on X. The top Japanese-language review came from a developer who tested it on his own voice and reported the default subtitle chunker split words at wrong boundaries, breaking mid-morpheme instead of respecting Japanese word structure. The top Chinese thread racked up 70,000 views in 48 hours. In English, you cannot Google a serious review of this tool. Perplexity Pro returns nothing useful. ChatGPT doesn’t recognize the product name. That is either a red flag or a window, depending on whether you believe browser-use (the $17M-funded startup behind one of the most-starred open-source AI projects of 2025) tends to ship things that matter.

Here is what the launch framing undersells. video-use is not a video editor. It is an editing playbook written as code, executed at runtime by a language model that reads transcripts instead of pixels. That distinction is the entire story. Get it right and the tool collapses days of post-production into minutes. Get it wrong and you will pay ElevenLabs Scribe $0.22 per hour of footage to learn you bought the wrong mental model.

BRIEFING SUMMARY · APRIL 2026

Eight days post-release, one contributor, seven commits, and a design document (SKILL.md) that runs past 300 lines. Those numbers are either alarming or clarifying depending on what you think software is. Every fact in this review was verified against the GitHub repository and ElevenLabs official pricing on April 23, 2026.

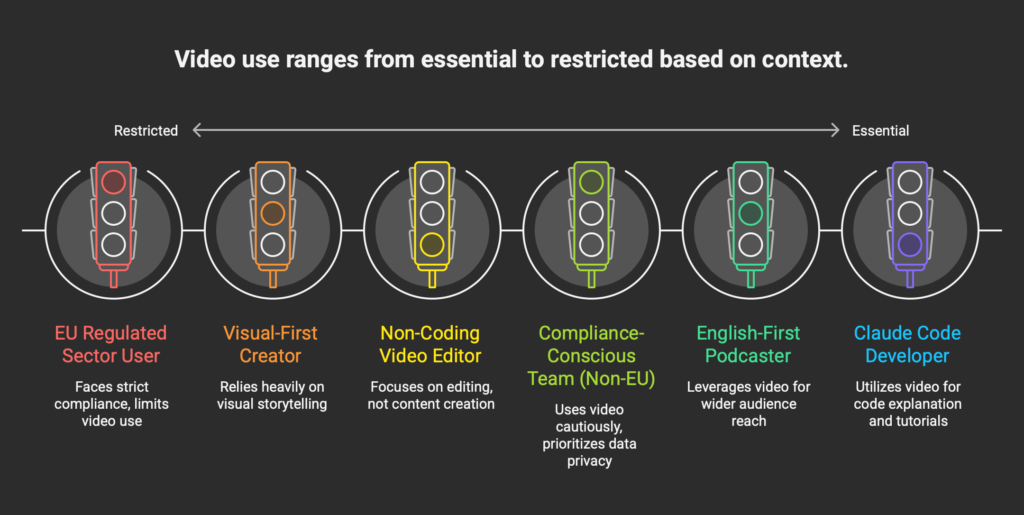

If you already live inside Claude Code and write code daily: video-use installs in roughly four commands and turns your existing Anthropic subscription into a working video editing pipeline. Scribe API overhead runs about $0.22 per hour of footage. Nothing else on the market matches this cost profile for anyone already paying for Claude Code.

If you edit video for a living and don’t write code: walk away. This is not the tool. The interface is a terminal, the iteration loop assumes you can read a Python traceback, and the “ask, confirm, execute, iterate” cadence requires comfort with agent-style collaboration that most video editors find slower than dragging clips on a timeline. Our Descript review and OpusClip review cover where you should be looking instead.

If your content is talking-head (podcasts, interviews, tutorials): this is video-use’s strongest lane. The transcript-first architecture assumes speech is the spine and visuals follow. That assumption holds for most of what you probably produce.

If you work in Japanese, Mandarin, or any language with word-boundary rules unlike English: verify before you commit. At least one early Japanese test found the default two-word uppercase subtitle chunker splits mid-word. The tool is customizable (it exposes almost every setting), but the out-of-the-box defaults are English-first.

If you are European and work in regulated sectors: the default Scribe path sends audio to US servers. Read the compliance section carefully. There is a self-hostable escape via Mistral Voxtral (Apache 2.0), but getting there is engineering work, not a one-command swap.

WHAT EVERY video-use REVIEW IS MISSING IN APRIL 2026

The first pass of commentary on this tool (mostly on X, with a handful of blog posts in Japanese and Chinese) has been uniformly enthusiastic and uniformly shallow. Pattern: developer installs video-use, feeds it a clip, shows before/after, marvels that the LLM can cut. Zero of the first fifty posts I read address what this tool actually is in a product sense. That is the gap this review tries to fill.

video-use in April 2026 is not a competitor to Descript or CapCut or OpusClip. It is a category shift. Most video editing tools are applications. You download a binary, it has a UI, you click things. video-use is an editing system written as code, distributed as a Claude Code skill, and executed by whatever language model you have authenticated against. The closest analog is how browser-use (the same team’s first product) shifted browser automation from “write Selenium scripts” to “tell an LLM what to do on a webpage.” video-use is the same move for post-production.

That reframing matters because the buying decision is not “is video-use better than Descript.” The right question is: is Video-as-Code a workflow shape that makes sense for you? If yes, video-use is currently the only serious implementation. If no, Descript or CapCut still wins your Tuesday regardless of how interesting this tool is on paper.

TL;DR

video-use is the most interesting thing to happen to post-production workflows in 2026, and also the wrong product for 90% of people who will try to install it. It is a Claude Code skill that turns a folder of raw footage into a finished MP4 through conversation with an LLM. It leans hard on a single architectural bet: the model reasons from transcripts, not frames. That bet is vindicated for talking-head content and most tutorial work. It breaks for anything visually driven.

Pricing is two layers, and the second one is where most buyers get confused. The skill itself is MIT-licensed and costs nothing. You need Claude Code (Pro at $20 per month for light use, Max at $100+ for daily work) and ElevenLabs Scribe API at $0.22 per hour of footage transcribed. There is no per-seat fee, no export watermark, no credit system to game. If you already pay for Claude Code for coding work, video-use is effectively a free add-on capability.

Three things make this different from every commercial competitor. First, the LLM never processes video frames, only the transcript plus selective visual lookups on demand. Second, every edit decision is persisted in an EDL JSON file plus a project.md memory, which makes the edits auditable and replayable in a way that timeline editors simply cannot match. Third, it generalizes. The same tool that cuts your podcast can grade the color on a travel reel if you describe what you want.

Three things break. Japanese and other non-English defaults are not optimized. The “90% of video is spoken content” assumption is wrong for significant parts of the creator economy. And if you are on EU GDPR compliance turf, the default Scribe dependency is a problem that requires technical work to fix.


Good fit:

- Developers already on Claude Code
- English talking-head content
- Teams needing edit audit trails
- Projects where cost scales with footage hours
- CLI + Python comfortable workflows

Poor fit:

- Video editors who don’t code
- Gaming, music video, cinematic work
- Non-English out-of-box users
- EU regulated sectors (without Voxtral swap)
- Mobile-first or tablet-first creators

QUICK START: THIRTY SECONDS OF JUDGMENT

Clone the repo, symlink it into your Claude Code skills directory, install ffmpeg, drop your ElevenLabs API key into .env, point Claude Code at a folder of video. The entire setup is four commands on a Mac. If those four commands sound like four too many, this is not your tool. Not snark, just the fastest possible filter. video-use’s core audience is developers who can tolerate a terminal, and the install flow telegraphs that on purpose.

Once it is installed, try one thing: feed it a 10-minute podcast episode and type “make this into three short social clips.” Watch what happens. If the back-and-forth feels like working with a fast, cheap video editor who reads faster than you can talk, you are the target user. If it feels like babysitting a stochastic intern, move on. That decision takes an afternoon, not a week.

THE SAME-NAMESPACE TRAP

Two different projects named “video-use” live on GitHub. They are not the same thing. If you search “video-use review” or clone the wrong repo, you will install a completely different tool.

The one this review covers: github.com/browser-use/video-use. Published by the browser-use organization on April 15, 2026. A Claude Code skill for transcript-first video editing.

The other one: github.com/sauravpanda/video-use. An older, smaller project. It records browser interactions and converts them to automation scripts. Nothing to do with video editing in the cinematic sense.

This matters because early aggregator blogs and AI summarization tools have already started conflating the two. Read the GitHub URL before you clone. Verify that the organization in the URL is “browser-use” and not “sauravpanda.” This kind of namespace collision is becoming a common failure mode in the OSS ecosystem as LLM-friendly tool names (short, verb-first, hyphenated) converge. Worth flagging.
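That check is easy to script before a clone. A minimal sketch, assuming all you want is to confirm the owner and repository name in the URL; the function name is mine, not something either project ships.

```python
from urllib.parse import urlparse

EXPECTED_OWNER = "browser-use"
EXPECTED_REPO = "video-use"


def is_expected_repo(url: str) -> bool:
    """Return True only if the URL points at github.com/browser-use/video-use."""
    # Accept URLs written without a scheme, the way they appear in this review.
    if "://" not in url:
        url = "https://" + url
    parsed = urlparse(url)
    parts = [p for p in parsed.path.split("/") if p]
    if parsed.netloc.lower() != "github.com" or len(parts) < 2:
        return False
    owner, repo = parts[0], parts[1].removesuffix(".git")
    return owner == EXPECTED_OWNER and repo == EXPECTED_REPO


if __name__ == "__main__":
    for candidate in ("github.com/browser-use/video-use",
                      "github.com/sauravpanda/video-use"):
        print(candidate, is_expected_repo(candidate))
```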

WHAT video-use ACTUALLY IS

Let me define the product more precisely, because the single-sentence pitches floating around Twitter miss most of it.

video-use is a SKILL.md file (about 300 lines of markdown) plus a handful of Python helpers: transcribe.py, pack_transcripts.py, render.py, grade.py, timeline_view.py. All of it designed to be loaded into Claude Code’s skills directory. When you start a Claude Code session inside a folder of raw footage, the model reads SKILL.md, understands the production rules and editing craft encoded there, and begins a conversation with you about what you want to build. It asks what the video is for, who it’s for, what the tone should be, what must be preserved, what must be cut. Then it proposes a strategy in plain English, waits for your approval, and executes.

The execution path is where the design gets interesting. The model never watches the video. It transcribes every source file once using ElevenLabs Scribe (cached, never re-transcribed), packs those transcripts into a compressed phrase-level markdown view called takes_packed.md, and reasons about cuts from text. When it needs to confirm something visual (is the speaker smiling here, is the shot in focus, is there a flash artifact between takes), it calls a helper called timeline_view that produces a filmstrip-plus-waveform PNG for that specific moment. Text is primary. Visuals are on-demand.
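To make “phrase-level view” concrete, here is a rough sketch of how word timestamps could be packed into compact markdown lines. The word record shape, the 0.5-second pause threshold, and the output format are all my assumptions for illustration; this is not the actual pack_transcripts.py logic or the real takes_packed.md layout.

```python
from typing import Dict, List


def _format_phrase(phrase: List[Dict]) -> str:
    """Render one phrase as a markdown bullet with its time range."""
    text = " ".join(word["text"] for word in phrase)
    return f"- [{phrase[0]['start']:.2f}-{phrase[-1]['end']:.2f}] {text}"


def pack_phrases(words: List[Dict], max_gap: float = 0.5) -> List[str]:
    """Group time-sorted words into phrases, splitting on pauses longer than max_gap."""
    lines: List[str] = []
    current: List[Dict] = []
    for word in words:
        # A long silence since the previous word closes the current phrase.
        if current and word["start"] - current[-1]["end"] > max_gap:
            lines.append(_format_phrase(current))
            current = []
        current.append(word)
    if current:
        lines.append(_format_phrase(current))
    return lines


if __name__ == "__main__":
    sample = [
        {"text": "so", "start": 0.00, "end": 0.20},
        {"text": "today", "start": 0.25, "end": 0.60},
        {"text": "we", "start": 1.50, "end": 1.60},
        {"text": "ship", "start": 1.65, "end": 2.00},
    ]
    print("\n".join(pack_phrases(sample)))
```

The point of a view like this is that a few hundred short lines carry both the words and the timestamps a model needs to propose cuts.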

The README puts it more tersely than I can. “The LLM never watches the video. It reads it.” Compare that to the alternative. Feed 30,000 frames from a 20-minute video into a vision-capable model at 1,500 tokens per frame and you consume 45 million tokens of context to describe content that a 12-kilobyte transcript encodes more precisely. video-use’s architecture is faster and cheaper, yes, but the deeper argument is correctness. Word boundaries from a word-timestamped transcript are more reliable than pixel-pattern inference for the same boundaries.
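The arithmetic behind that comparison is worth writing down. The sketch below assumes 25 frames per second (which is what 30,000 frames over 20 minutes implies) and a rough rule of thumb of four bytes per token for the transcript; the second figure is my assumption, not a measured number.

```python
def frame_tokens(minutes: float, fps: float, tokens_per_frame: int) -> int:
    """Tokens needed to feed every frame of a clip to a vision model."""
    frames = round(minutes * 60 * fps)
    return frames * tokens_per_frame


def transcript_tokens(size_bytes: int, bytes_per_token: int = 4) -> int:
    """Very rough token estimate for a plain-text transcript (assumed ratio)."""
    return size_bytes // bytes_per_token


if __name__ == "__main__":
    video = frame_tokens(minutes=20, fps=25, tokens_per_frame=1_500)
    text = transcript_tokens(12_000)
    print(f"frames path:     {video:,} tokens")
    print(f"transcript path: {text:,} tokens (rough estimate)")
    print(f"ratio:           about {video // text:,}x")
```

Even if the per-token estimate for text is off by a wide margin, the gap stays at several orders of magnitude.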

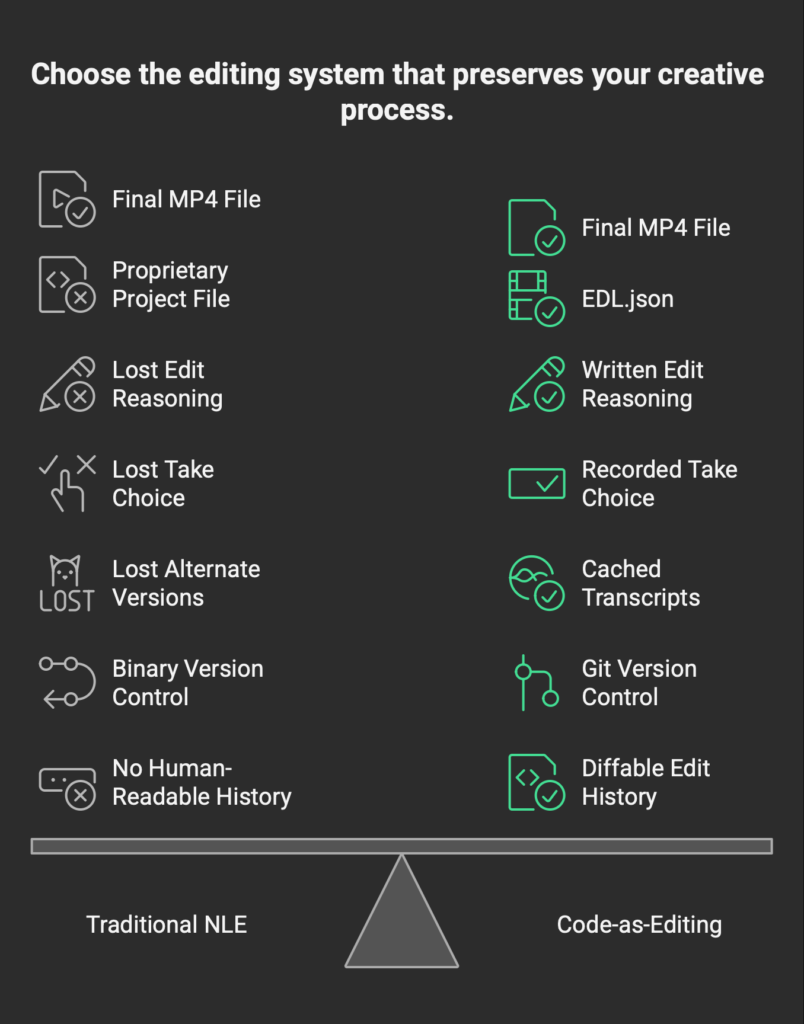

The output structure matters almost as much as the input structure. Every session produces an edl.json (the edit decision list), a master.srt (subtitles), a project.md (session memory), a clips_graded folder (per-segment extracts with color grading and audio fades applied), and a final.mp4. You can replay any session, audit any cut, and resume work next week with full context because the entire reasoning trail persists in plain text. Name one commercial video editor that does this. I will wait.

Side note: the README is co-authored. Check the GitHub commit log and you will see “gregpr07 and claude” on the commits. video-use was built with Claude Code, using Claude Code, for Claude Code. Dogfooding at this density is unusual. It also explains why the SKILL.md reads so specifically like a document written for an LLM audience first and a human audience second.

THE REAL COST OF FREE OSS

Here is where the $0 headline falls apart.

video-use is MIT-licensed and the skill itself costs nothing. That part is true and will stay true. What costs real money is the infrastructure video-use depends on, and the first confusion most buyers fall into is assuming “OSS” means “free to operate.” It doesn’t. It means “the code is free, you pay for the runtime.”

The runtime has three parts.

Claude Code. You need an active Claude subscription. Pro at $20/month is the entry point and runs fine for light use (maybe five or six short editing sessions a week). Daily users will hit usage limits on Pro and need Max at $100/month for the 5x multiplier, or Max at $200 for 20x. Video editing sessions are token-heavy because the transcripts are large and the model iterates. Budget for Max if you plan to edit more than an hour of footage a week.

ElevenLabs Scribe API. Audio transcription runs $0.22 per hour of audio, flat. This is the only purely variable cost and it scales linearly with raw footage. Edit a 60-minute podcast, pay 22 cents. Edit a 10-hour documentary, pay $2.20. If this number looks too small, that is because it is. Scribe is priced aggressively to feed the surrounding ElevenLabs ecosystem, and video-use quietly benefits. Worth noting: several competing reviews incorrectly report Scribe as $0.40 or more per hour. Those numbers are stale or pulled from different ElevenLabs product tiers. The live number on elevenlabs.io/pricing/api as of April 23, 2026 is $0.22 flat for Scribe v1/v2. If you need a broader picture of the ElevenLabs ecosystem, our ElevenLabs review walks through where the subscription plans start to actually matter.

Everything else. ffmpeg, yt-dlp, Python, Manim, Remotion, PIL, storage, compute. All free, all standard, all already on your machine if you do any video work at all.

So the honest monthly cost for three realistic user shapes.

The weekend experimenter. Claude Pro at $20. Maybe two hours of footage a month, so 44 cents of Scribe. Round up to $21/month.

The daily creator. Claude Max at $100 (the 5x tier). Maybe 10 hours of footage a month, so $2.20 of Scribe. Round up to $103/month. Compare that to Descript Creator at $24 per month. video-use is roughly four times more expensive in dollar terms.

But here is the trick. Claude Max is a developer tool subscription that happens to run your editing workflow. If you are already a Max subscriber for coding work (and most of the people considering video-use are), the marginal cost of editing is the Scribe bill. Two dollars and twenty cents. That is not a typo.

The power user. Claude Max at $200 (20x). Maybe 50 hours of footage a month, $11 in Scribe. $211/month total. Against Descript Business at $65 per month, that math looks ugly until you apply the same “already paying for Max anyway” argument. For a developer running parallel edit sessions, the marginal cost of adding video-use to an existing workflow is essentially the Scribe line item.

The takeaway is unintuitive. video-use is the cheapest video editor of 2026 for people who already pay for Claude Code, and one of the most expensive for people who don’t. There is no middle ground. Pick your side of that bimodal distribution and the decision writes itself.
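The three user shapes above reduce to one small formula. A sketch using only the prices quoted in this review; the `already_subscribed` flag is my way of modeling the “already paying for Claude anyway” argument.

```python
SCRIBE_USD_PER_HOUR = 0.22  # Scribe rate quoted in this review


def monthly_cost(plan_usd: float, footage_hours: float,
                 already_subscribed: bool = False) -> float:
    """Monthly cost in USD: Claude plan plus Scribe transcription.

    If the Claude plan is already paid for other work, only the
    Scribe line item counts as the marginal cost of editing.
    """
    scribe = footage_hours * SCRIBE_USD_PER_HOUR
    base = 0.0 if already_subscribed else plan_usd
    return round(base + scribe, 2)


if __name__ == "__main__":
    shapes = {
        "weekend experimenter": (20, 2),
        "daily creator": (100, 10),
        "power user": (200, 50),
    }
    for name, (plan, hours) in shapes.items():
        full = monthly_cost(plan, hours)
        marginal = monthly_cost(plan, hours, already_subscribed=True)
        print(f"{name}: ${full:.2f} total, ${marginal:.2f} marginal")
```

The bimodal shape falls straight out of the two columns: the total is dominated by the plan, the marginal cost by footage hours.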

THE 12 HARD RULES THAT MAKE IT WORK

Any video editor who has been burned by automated tools should find the SKILL.md reassuring. The first section after the design principles is a list of twelve non-negotiable production rules. Not “best practices.” Not “recommendations.” The document calls them “hard rules, production correctness, non-negotiable” and says deviation produces silent failures or broken output.

Some are craft. Never cut inside a word. Snap every edit to a word boundary from the transcript. Pad cut edges by 30 to 200 milliseconds because Scribe timestamps drift by 50 to 100ms. Apply 30-millisecond audio fades at every segment boundary or you will hear audible pops.
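Here is roughly what the snap-and-pad rules look like in code. This is a sketch under my own assumptions: words arrive as time-sorted `(text, start, end)` tuples, the requested range is expanded outward so no cut lands inside a word, and the pad is constrained to the 30 to 200 millisecond window. It is not the helper video-use ships.

```python
from typing import List, Optional, Tuple

Word = Tuple[str, float, float]  # (text, start_seconds, end_seconds)


def snap_and_pad(words: List[Word], start: float, end: float,
                 pad: float = 0.05,
                 duration: Optional[float] = None) -> Tuple[float, float]:
    """Expand [start, end] to whole words, then pad both edges."""
    if not 0.03 <= pad <= 0.2:
        raise ValueError("pad should stay within the 30-200 ms window")
    # Any word that overlaps the requested range is kept whole.
    overlapping = [w for w in words if w[2] > start and w[1] < end]
    if not overlapping:
        raise ValueError("no words inside the requested range")
    snapped_start = overlapping[0][1]
    snapped_end = overlapping[-1][2]
    padded_start = max(0.0, snapped_start - pad)
    padded_end = snapped_end + pad
    if duration is not None:
        padded_end = min(duration, padded_end)
    return padded_start, padded_end


if __name__ == "__main__":
    words = [("hello", 0.10, 0.45), ("there", 0.50, 0.90), ("friend", 1.00, 1.40)]
    print(snap_and_pad(words, 0.30, 0.70))
```

One caveat with this simple version: when the silence between words is shorter than the pad, the padding can bleed into a neighboring word, so a real implementation would probably cap the pad at half the gap.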

Some are technical. Subtitles apply last in the filter chain, after every overlay, or overlays hide captions. Use per-segment extraction followed by lossless concat, not a single-pass filtergraph, or you double-encode. Overlays require setpts=PTS-STARTPTS+T/TB to shift the overlay’s frame zero to its window start, or you see the middle of the animation during the overlay window.
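For readers who have not fought ffmpeg before, the sketch below builds (but does not run) the kind of commands these rules imply: one encode per segment with short audio fades, a stream-copy concat, and the overlay time shift. The codec choices, file names, and function names are my assumptions; render.py may well do this differently.

```python
from typing import List


def segment_cmd(src: str, start: float, end: float, out: str,
                fade: float = 0.03) -> List[str]:
    """Extract one segment, encoding once, with fades at both audio edges."""
    duration = end - start
    # With -ss before -i, segment timestamps restart at zero, so the
    # fade-in starts at 0 and the fade-out starts at duration - fade.
    fades = (f"afade=t=in:st=0:d={fade},"
             f"afade=t=out:st={duration - fade:.3f}:d={fade}")
    return ["ffmpeg", "-y", "-ss", f"{start:.3f}", "-t", f"{duration:.3f}",
            "-i", src, "-af", fades, "-c:v", "libx264", "-c:a", "aac", out]


def concat_list(segments: List[str]) -> str:
    """Contents of the list file read by ffmpeg's concat demuxer."""
    return "".join(f"file '{path}'\n" for path in segments)


def concat_cmd(list_path: str, out: str) -> List[str]:
    """Join already-encoded segments with stream copy (no second encode)."""
    return ["ffmpeg", "-y", "-f", "concat", "-safe", "0",
            "-i", list_path, "-c", "copy", out]


def overlay_filter(window_start: float, window_end: float) -> str:
    """Shift the overlay so its first frame lands at window_start."""
    return (f"[1:v]setpts=PTS-STARTPTS+{window_start}/TB[ov];"
            f"[0:v][ov]overlay=enable='between(t,{window_start},{window_end})'")


if __name__ == "__main__":
    print(" ".join(segment_cmd("raw.mp4", 1.0, 3.5, "seg0.mp4")))
    print(concat_list(["seg0.mp4", "seg1.mp4"]), end="")
    print(" ".join(concat_cmd("segments.txt", "joined.mp4")))
    print(overlay_filter(5.0, 8.0))
```

Stream-copy concat only works cleanly when every segment shares the same codec parameters, which is one reason to encode all segments with identical settings.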

Some are procedural. Cache transcripts per source. Never re-transcribe unless the source file changed. Spawn animation sub-agents in parallel, never sequentially. Confirm strategy in plain English before touching the cut.
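The caching rule is the easiest of these to picture. A minimal sketch, assuming the cache is keyed on a hash of the source file so a changed file is the only thing that triggers a new transcription; the `transcribe` callable stands in for whatever actually calls Scribe, and none of these names come from the repository.

```python
import hashlib
import json
from pathlib import Path
from typing import Callable


def file_digest(path: Path) -> str:
    """SHA-256 of the file contents, read in 1 MiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def cached_transcript(source: Path, cache_dir: Path,
                      transcribe: Callable[[Path], dict]) -> dict:
    """Return the cached transcript, calling transcribe() only on a cache miss."""
    cache_dir.mkdir(parents=True, exist_ok=True)
    cache_file = cache_dir / f"{file_digest(source)}.json"
    if cache_file.exists():
        return json.loads(cache_file.read_text(encoding="utf-8"))
    result = transcribe(source)  # the paid API call would happen here
    cache_file.write_text(json.dumps(result, ensure_ascii=False), encoding="utf-8")
    return result
```

Hashing the content rather than trusting the file name or modification time means a renamed file still hits the cache and an edited file never does.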

Why this matters: if you have ever tried to automate video editing with a general-purpose LLM and a few ffmpeg calls, you already know the failure modes. Audio pops at every cut. Subtitles hidden by animated overlays. Timestamp drift. Double-encoding. video-use’s twelve rules are each a scar, encoded. They read like production notes from someone who has shipped enough automated edits to have been humiliated by each of them. That is the document you want writing the playbook.

The flip side: if Claude Code is updated in a way that breaks the skill’s ability to follow these rules, the output breaks silently. This tool is as durable as the underlying model’s instruction-following. File that under long-term risk.

HOW video-use STACKS UP

The table below reflects verified prices and features as of April 23, 2026. Where an entry says “not applicable,” the tool operates in a paradigm that does not admit the comparison. Where an entry picks a winner, I have tried to justify it.

| Feature | video-use | Descript Creator | OpusClip Pro | CapCut Pro | Omniclip OSS |
|---|---|---|---|---|---|
| Base pricing | $0 (MIT) | $24/mo annual | $29/mo | $9.99/mo | $0 (AGPL) |
| Required infrastructure | Claude Code + Scribe | None | None | None | Self-hosted |
| Realistic monthly cost | $21 to $211 | $24 | $29 | $9.99 | Hosting only |
| Transcription included | Via Scribe, $0.22/hr | 30 hrs/mo | 300 min/mo source | Limited | Self-hosted |
| Export format | MP4, customizable | MP4, three quality tiers | MP4 | MP4 | MP4 |
| Audit trail of edits | Full (edl.json + project.md) | None | None | None | None |
| Works offline | Partially | No | No | Partial | Yes |
| Talking-head strength | Strong | Strong | Strong | Weak | Weak |
| Music / cinematic strength | Weak | Weak | Very weak | Strong | Moderate |
| Non-English out of box | Weak (English defaults) | Moderate | Moderate | Strong | Weak |
| Data residency | US (Scribe default) | US | US | US/China | User choice |
| Technical skill required | High (CLI + Python) | Low | Very low | Very low | High |

video-use wins on auditability, extensibility, and per-hour transcription cost for anyone already inside the Claude Code ecosystem. It loses or ties on ease of onboarding, mobile-native workflows, and non-English default quality. The competitors are not bad tools. They solve different problems for different buyers.

Our Descript review covers the timeline editor case in depth. Our OpusClip review covers the repurposing case. Between those two and video-use, most creators will find their right tool.

DEEP DIVE: THE JAPANESE AUDIO PROBLEM

On April 18, three days after video-use shipped, a Japanese developer posted what I think is the most honest review of the tool in any language. His test: feed a talking-head video in natural Japanese into video-use and let it generate subtitles. The default subtitle chunker in render.py splits captions into two-word uppercase chunks. That rule is built around English orthography, not language-agnostic word boundaries. When the target language is Japanese, the splitter has no reliable concept of a “word” because Japanese orthography doesn’t use spaces. Scribe’s word-level segmentation splits the audio into word-like units based on its training distribution, which is heavily English. The result, per the reviewer: natural Japanese sentences get chunked mid-morpheme, splitting compound verbs into fragments that don’t exist as words in actual Japanese.

Calling this a bug in video-use misses where the failure actually lives. It sits in the interaction between English-trained tokenizers, Japanese morphology, and a subtitle chunker that assumes space-delimited words. The SKILL.md explicitly says the two-word uppercase style is “a worked example from one proven video, not a mandate” and invites users to invent their own styles. In practice, what “invent your own style” means for a Japanese creator is: rewrite the subtitle filter chain and possibly the tokenization step. That is a meaningful amount of engineering work.

What to do if you work in Japanese, Chinese, or Korean: do not use the default subtitle style. Either feed your own pre-chunked SRT into the render path or write a custom subtitle generator that respects language-specific word boundaries. Mandarin and Korean speakers will hit similar issues because the defaults assume English-style word separation.
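The failure is easy to reproduce in miniature. The sketch below is not the chunker from render.py; it is a stand-in that pairs tokens the same way, followed by one possible alternative that groups tokens at caller-supplied phrase ends (which you would get from a morphological analyzer or by hand). The Japanese token split is illustrative, not real Scribe output.

```python
from typing import List, Set


def two_word_chunks(tokens: List[str]) -> List[str]:
    """English-style default: pair tokens, join with a space, uppercase."""
    return [" ".join(tokens[i:i + 2]).upper() for i in range(0, len(tokens), 2)]


def chunk_at_boundaries(tokens: List[str], phrase_ends: Set[int],
                        joiner: str = "") -> List[str]:
    """Close a caption after each token index listed in phrase_ends."""
    chunks: List[str] = []
    current: List[str] = []
    for index, token in enumerate(tokens):
        current.append(token)
        if index in phrase_ends:
            chunks.append(joiner.join(current))
            current = []
    if current:
        chunks.append(joiner.join(current))
    return chunks


if __name__ == "__main__":
    english = ["never", "cut", "inside", "a", "word"]
    print(two_word_chunks(english))

    # Illustrative segmentation of one Japanese sentence into word-like units.
    japanese = ["動画", "を", "編集", "し", "て", "しまい", "まし", "た"]
    print(two_word_chunks(japanese))                      # splits the verb apart
    print(chunk_at_boundaries(japanese, phrase_ends={1}))  # keeps the verb whole
```

The pairing rule works for English because almost any two adjacent words make a readable caption. With the Japanese tokens it inserts spaces that do not belong and scatters one verb phrase across three captions.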

None of this makes video-use wrong for non-English content. It makes the out-of-the-box experience worse than English-native users will report. A global review that doesn’t mention this is giving you half the picture.

DEEP DIVE: THE RESEARCH GAP

I ran a search on Consensus Pro for peer-reviewed studies on “transcript-first video editing” or “LLM-driven cut decisions.” The top result is a 2019 paper by Fried et al. on text-based editing of talking-head video. 277 citations. Nothing in the last three years addresses the specific architectural claim video-use makes, which is that LLMs reasoning from diarized transcripts can make editorial decisions comparable to human editors on talking-head content.

Worth flagging because early evangelists are citing the Fried paper as if it validates the approach. It doesn’t. Fried et al. demonstrated that editing video by editing text was possible in 2019. It said nothing about whether the resulting cuts are good.

A 2024 meta-analysis in Nature Human Behaviour on human-AI decision making found that the average outcome of human-AI teams on complex decision tasks is slightly worse than humans alone. The result does not invalidate video-use. It does suggest that anyone claiming video-use makes better cuts than a skilled editor is making a claim that the research literature does not support.

What would support the claim: a controlled study comparing video-use output to human-edited output on matched content, blind-rated by viewers on engagement, comprehension, and enjoyment. No such study exists as of April 2026. Anyone telling you this tool produces “professional cuts” or “better than human” output is selling, not measuring.

The honest framing: for talking-head content, video-use produces cuts that are probably 80-90% of the way to a skilled editor’s work, much faster, at much lower cost. That is a useful range. It is not “replaces your editor.” It is “replaces a significant slice of your editor’s most tedious work.”

DEEP DIVE: THE AUDITABILITY ANGLE

Now for the thing about video-use that almost nobody is talking about, and that I think matters more than the editing capability itself.

Every edit decision is written to disk. The edl.json records every cut, every source timestamp, every reason the model gave for picking that take over another take. The project.md records the strategy for the session. Transcripts are cached. Graded segments sit in a folder you can inspect. Six months from now, you can open the project folder and see exactly why a given cut was made.

Now consider what happens in a traditional NLE. Premiere, Resolve, Descript. You have a project file in a proprietary format, a timeline of clips and effects, and zero record of why any of it was chosen. The edit is the artifact. The reasoning is lost.

For a YouTuber editing their own content, this doesn’t matter. For a content team at a media company, it starts to matter. For a corporate communications team producing regulated video (pharmaceutical marketing, financial product explainers, healthcare patient education), it becomes a compliance question. Can you prove what was cut from the original footage? Can you reproduce the edit? Can an auditor trace the chain of decisions from raw material to published output?

video-use is the first video editing tool I have seen that makes those questions trivially answerable. Every artifact is plain text or structured JSON. Everything is version-controllable. Everything is diffable. This is not a feature the README emphasizes, but it is maybe the single largest reason enterprise teams should pay attention. The audit trail is free. The editorial process becomes reviewable.
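To show what “diffable” buys a reviewer, here is a sketch that compares two versions of an edit decision list and reports what changed. The schema (a `cuts` list with `id`, `source`, `start`, `end`, `reason`) is hypothetical and chosen for illustration; check the real edl.json before relying on any field name.

```python
from typing import Dict, List


def diff_edl(old: Dict, new: Dict) -> List[str]:
    """Summarize added, removed, and changed cuts between two EDL versions."""
    old_cuts = {cut["id"]: cut for cut in old["cuts"]}
    new_cuts = {cut["id"]: cut for cut in new["cuts"]}
    report: List[str] = []
    for cut_id in sorted(old_cuts.keys() | new_cuts.keys()):
        before, after = old_cuts.get(cut_id), new_cuts.get(cut_id)
        if before is None:
            report.append(f"+ {cut_id}: added ({after['reason']})")
        elif after is None:
            report.append(f"- {cut_id}: removed")
        elif before != after:
            fields = sorted(key for key in before.keys() | after.keys()
                            if before.get(key) != after.get(key))
            report.append(f"~ {cut_id}: changed {', '.join(fields)}")
    return report


if __name__ == "__main__":
    v1 = {"cuts": [
        {"id": "c1", "source": "a.mp4", "start": 1.0, "end": 4.0, "reason": "clean take"},
        {"id": "c2", "source": "a.mp4", "start": 9.0, "end": 12.0, "reason": "intro"},
    ]}
    v2 = {"cuts": [
        {"id": "c1", "source": "a.mp4", "start": 1.0, "end": 4.2, "reason": "clean take"},
        {"id": "c3", "source": "b.mp4", "start": 0.5, "end": 3.0, "reason": "stronger close"},
    ]}
    print("\n".join(diff_edl(v1, v2)))
```

A report like this is the kind of artifact an auditor can read without opening an editing application, and a plain `git diff` on the JSON gets you most of the way there with no code at all.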

I don’t know if browser-use will lean into this angle in their positioning. They probably should.

IF YOU’RE EUROPEAN: GDPR AND THE EU AI ACT

This section is for European creators, media teams, and compliance-conscious freelancers. Most reviews of US-built AI tools skip this part because most of them were written by US authors for US readers. That is a gap worth filling.

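The default video-use configuration sends audio to ElevenLabs servers in the US for transcription. Under GDPR, a recorded voice is personal data, and it becomes biometric data (Article 4(14)) when it is processed to uniquely identify a person. In that case it falls under the special categories of Article 9, where a legitimate-interest basis is not enough and you typically need explicit consent or another Article 9(2) exemption.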

ElevenLabs offers Zero Retention Mode and EU Data Residency but both require enterprise contracts. The default retail Scribe API does not. If you are processing audio that contains EU residents’ voices (interviews, podcasts with European guests, internal corporate video with EU employees), the default video-use setup probably has a compliance gap you need to address before you ship.

The EU AI Act’s transparency obligations start to apply on August 2, 2026. Article 50 covers AI-modified content: creators must disclose AI generation or manipulation to end users. Fines can reach €15M or 3% of global turnover. video-use does not automatically watermark or label output. That is your responsibility, and the window to figure out disclosure policies is narrowing.

The escape path that actually works: swap ElevenLabs Scribe for Mistral’s Voxtral models. Apache 2.0 licensed, self-hostable, multilingual. Keeps audio inside your infrastructure. Also requires real engineering work to integrate into the video-use helper scripts. Not a one-command change. More like a weekend of work for a competent Python engineer.

If you work in healthcare, legal, or journalism in the EU: the default configuration is not for you. Run Voxtral locally or pick a different tool entirely. WhisperX and whisper.cpp are other self-hostable options. The transcript-first architecture of video-use is actually well suited to air-gapped operation. It just doesn’t ship that way by default, and nobody in the launch copy is telling European users this clearly enough.

THE THREE MYTHS EVERY EARLY video-use POST SPREADS

Reading the first wave of hot takes on X and Zenn about this tool, three claims come up over and over. None of them survive scrutiny.

Myth one: “It’s free.”

False in any useful sense. The code is free. The runtime is not. Realistic minimum cost is around $21 a month for the weekend user and $100+ for anyone doing serious work. If you are not already a Claude Code subscriber, “free OSS” is burying about 95% of the actual cost.

Myth two: “It replaces your video editor.”

Not really. It replaces a specific slice of your editor’s work, the part that is transcription-driven and follows production rules. The visual-taste work (pacing, emotion, mood, visual composition of non-talking shots) is still yours. Anyone publishing before/after demos of video-use without showing what they cut is quietly telling you what they think “editing” means. For most content creators, 80-90% of the work is transcription-adjacent. For gaming creators and filmmakers, it is far less.

Myth three: “It’s ready for production.”

Also not quite. It is ready for production for the specific user profile it was designed for: English-speaking developers editing talking-head content using Claude Code. For Japanese creators, EU compliance-regulated teams, or anyone outside the default configuration, “production-ready” is doing a lot of heavy lifting.

None of this is a knock on the tool. video-use is a novel piece of software and the first thing in its category worth using. The bar for a review to be worth reading is whether it tells you where the tool actually breaks. These three are where it breaks most reliably in April 2026. A review that skips all three is telling you less about the tool than about its own priorities.

WHO SHOULD NOT USE video-use

The hardest section in any review is the one where you tell a reader the product is wrong for them. Here it is for video-use.

If you edit video for a living and don’t write code, this is not your tool. The install flow filters you out immediately and the iteration loop assumes comfort with terminals, Python tracebacks, and structured JSON output. Descript, CapCut, and Premiere exist for a reason.

If your content is predominantly visual (gaming, music videos, cinematic work, physical-performance comedy), video-use’s transcript-first architecture reads you wrong by design. It will make cuts that make sense if you are listening but look broken if you are watching. Visual pacing is not in the transcript. The tool can do visual work, but it requires you to narrate what you want with precision, and that friction is higher than just using a timeline editor.

If you work in a language other than English without willingness to customize, the subtitle chunker and probably the cut-boundary heuristics will produce output that feels wrong. Japanese, Chinese, Korean, Arabic, Hebrew users should all test carefully before committing.

If your compliance posture requires EU data residency, the default Scribe dependency is a problem. You can solve it with Voxtral, but “solving it” is engineering work, not configuration.

If you need mobile-first or tablet-first workflows, video-use does not exist for you. It is a terminal-plus-IDE tool. No iOS app is coming. No iPad optimization is planned (that I can find). If your bottleneck is “I only have my phone right now,” use CapCut.

If you want predictable monthly costs with zero variance, video-use’s two-layer cost model will frustrate you. Scribe is variable. Claude Code usage can spike. Neither is expensive in absolute terms, but both require comfort with usage-based billing.

FSR VERDICT

video-use in April 2026 is the most architecturally interesting video editing tool I have used, and also the most narrowly-useful one. It is not a Descript replacement, an OpusClip replacement, or a CapCut replacement. It is a new shape of video tool that only makes sense for a specific user profile: developers who already live inside Claude Code, work primarily in English, edit talking-head content, and care about auditability.

For that user, the phrase “productivity shift” is not marketing. The cost of editing an hour of podcast audio drops to about 22 cents of Scribe API (assuming you already pay for Claude Code for other work). Editorial decisions are documented in plain text. The pipeline extends in any direction the underlying helpers allow, which is most directions. No commercial tool currently matches this on cost or auditability for that workflow shape.

For everyone else, this is a fascinating demo and the wrong tool. If you do not write code, stay with Descript or CapCut. If you edit visually-driven content, video-use’s assumptions will fail you. If you work outside English, verify heavily before committing. If you are in EU regulated industries, either budget engineering time for a Voxtral swap or pick something else entirely.

The real test will be whether the category video-use defines (Video-as-Code, editing workflows as versionable skills that operate through conversation rather than GUI) gets adopted by others. Descript could ship a text-agent interface tomorrow. Adobe could release a Premiere skill for Claude Code next year. Both have more distribution than browser-use. What browser-use has is first-mover advantage and a design philosophy already proven in one adjacent category. That is not nothing.

I would bet video-use ends up being the reference implementation for a category that grows faster than its creators expect. Or maybe it stays niche and just makes a few thousand developers’ lives much better. Either way, the tool is worth paying attention to in April 2026, especially if you are already inside the Claude Code ecosystem.

Pricing verified on github.com/browser-use/video-use and elevenlabs.io/pricing/api on April 23, 2026. Feature set verified against the SKILL.md at commit 1ffa36d. This review reflects video-use as shipped April 15, 2026, with the “add what-it-does section to readme” update from April 22. If video-use ships a v2 or if browser-use pivots the positioning, check the GitHub README directly. Anything written today about this tool’s trajectory will be partially obsolete by the time you need it.