Chinese AI lab MiniMax released the model weights for M2.7 today. The benchmarks look close to Claude Opus 4.6 and GPT-5.3-Codex. The API costs roughly 17 to 21 times less than Opus. Developer Twitter is calling it the death of expensive AI coding. But almost nobody is reading the license file, and line one says NON-COMMERCIAL LICENSE.

We checked each claim against independent data where it exists, cross-referenced five research tools, and read the actual HuggingFace LICENSE file ourselves. This is what we found.

MiniMax M2.7 is a 230-billion-parameter coding-focused LLM that activates only 10 billion parameters per token. On select coding benchmarks, it approaches Claude Opus 4.6 and GPT-5.3-Codex performance. The API costs $0.30 per million input tokens versus Opus at $5.00. In independent third-party testing on three codebases, Kilo Code found that M2.7 caught the same bugs and security vulnerabilities as Opus, delivering roughly 90% of the quality at approximately 7% of the cost.

The catches are real. The open-weight release uses a non-commercial license that requires written permission from MiniMax for any commercial use. Most benchmark scores are vendor-evaluated, not independently verified. The model is text-only in a multimodal world. And the parent company is headquartered in Shanghai.

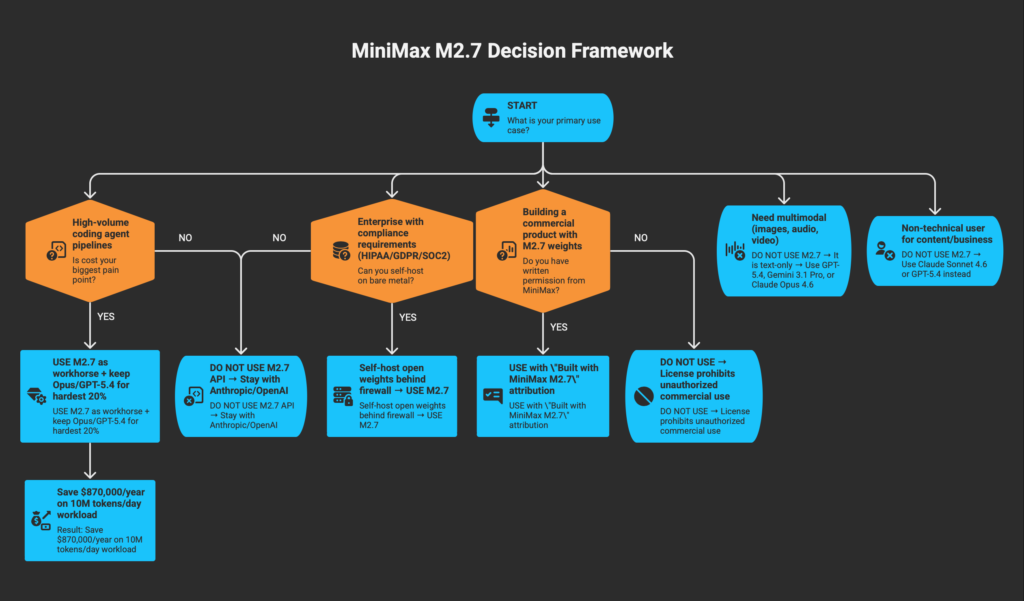

If you run high-volume AI agent pipelines and cost is killing you, M2.7 is worth testing as a workhorse model for routine coding tasks. If you need enterprise compliance, multimodal input, or truly open-source weights, look elsewhere.

What Is MiniMax?

MiniMax is a Shanghai-based AI company founded in early 2022 by Yan Junjie, a former VP at SenseTime. The company listed on the Hong Kong Stock Exchange on January 9, 2026, raising approximately $619 million at a valuation of around $6.5 billion. The stock doubled on its first day of trading.

Western developers mostly know MiniMax through Hailuo AI, a text-to-video generation platform. But the M2 model series is where the company competes against frontier labs. MiniMax reported $79 million in revenue for fiscal year 2025, a 159% year-over-year increase. More than 70% of that revenue comes from outside China, with the United States and Singapore as the top markets. The company had 27.6 million monthly active users during the first nine months of 2025 and serves over 200 countries.

The M2 model series has evolved rapidly. M2 launched in October 2025 under an MIT license. M2.1 followed in December 2025. M2.5 came in February 2026 and quickly became the most popular model on Kilo Code, accounting for 37% of all Code mode usage ahead of Claude Opus 4.6 and GPT-5.4. M2.7 launched on March 18, 2026 as a proprietary model. Today, April 12, MiniMax released the model weights on HuggingFace.

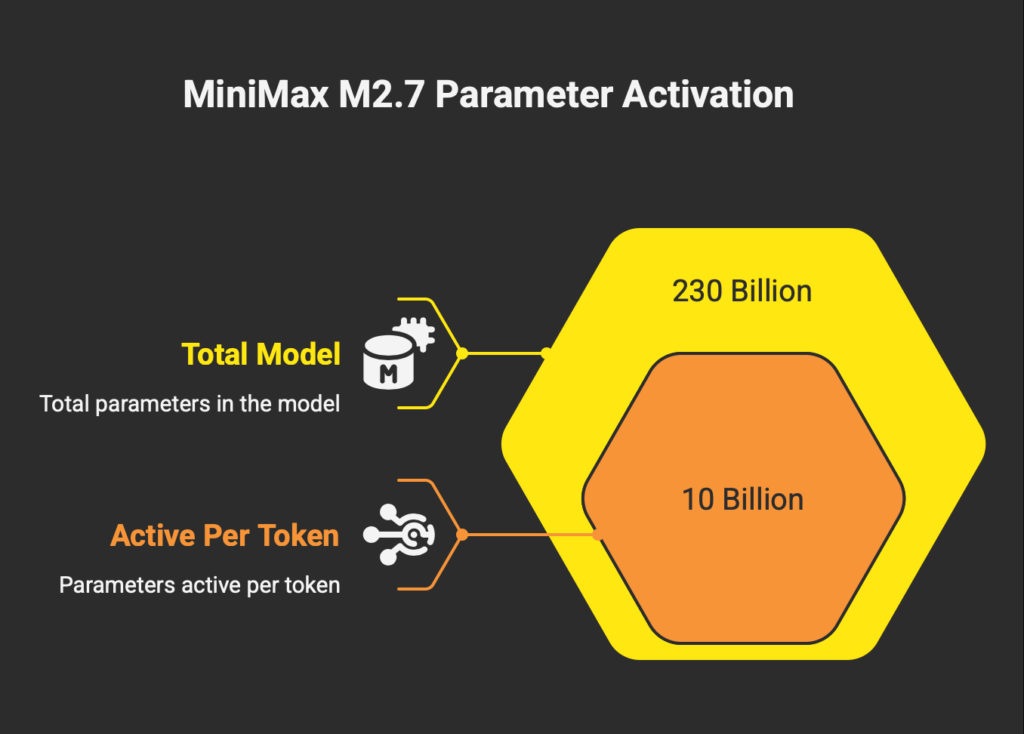

The Architecture: 230 Billion Parameters, 10 Billion Active

M2.7 uses a sparse Mixture-of-Experts (MoE) transformer architecture. The model contains 230 billion total parameters but activates only about 10 billion per token through a top-k expert routing mechanism across 256 local experts. That activation rate of 4.3% is what makes the model so cheap to run.
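To make "activates only 10 billion per token" concrete, here is a toy sketch of top-k expert routing. It assumes softmax gating over the selected experts' logits, which is one common variant; MiniMax's actual gating function is not described here, and `TOP_K = 8` is a hypothetical value, not a published M2.7 setting. Each "expert" is just a Python callable standing in for a feed-forward block.

```python
import math

# 256 experts matches the article; TOP_K is a hypothetical choice for illustration.
NUM_EXPERTS = 256
TOP_K = 8

# Share of parameters active per token, using the article's round numbers.
ACTIVE_FRACTION = 10e9 / 230e9  # about 0.043, i.e. 4.3%


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k=TOP_K):
    """Return (expert_index, gate_weight) for the k highest-scoring experts.

    Gate weights are a softmax over the selected logits only, so they sum to 1.
    """
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    gates = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, gates))


def moe_forward(x, router_logits, experts, k=TOP_K):
    """Combine the outputs of only the routed experts; the others are never called."""
    return sum(gate * experts[i](x) for i, gate in top_k_route(router_logits, k))
```

The cost saving comes from the last function: only `k` of the 256 expert callables run for a given token, so compute per token scales with the active parameters rather than the total.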

The attention mechanism uses multi-head causal self-attention with Rotary Position Embeddings (RoPE) and Query-Key Root Mean Square Normalization (QK RMSNorm). The context window is 204,800 tokens across all M2.7 variants.
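A minimal pure-Python sketch of the two attention ingredients named above, applied to one query/key pair. Assumptions: RMSNorm is applied to queries and keys before the rotary rotation (the order several open models use), the RMSNorm weight defaults to ones, and `base=10000` is the original RoPE default rather than a value confirmed for M2.7.

```python
import math


def rms_norm(v, weight=None, eps=1e-6):
    """Scale a vector to unit root-mean-square, optionally times a learned weight."""
    rms = math.sqrt(sum(x * x for x in v) / len(v) + eps)
    w = weight or [1.0] * len(v)
    return [x / rms * wi for x, wi in zip(v, w)]


def rope(v, pos, base=10000.0):
    """Rotate each (even, odd) pair of v by an angle proportional to the position."""
    d = len(v)  # must be even
    out = list(v)
    for i in range(0, d, 2):
        theta = pos * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        out[i] = v[i] * c - v[i + 1] * s
        out[i + 1] = v[i] * s + v[i + 1] * c
    return out


def attention_score(q, k, q_pos, k_pos):
    """QK RMSNorm first, then RoPE, then a scaled dot product."""
    q = rope(rms_norm(q), q_pos)
    k = rope(rms_norm(k), k_pos)
    return sum(a * b for a, b in zip(q, k)) / math.sqrt(len(q))
```

Two properties explain the design: RoPE makes the score depend only on the offset between positions (so positions 5 and 3 score the same as 12 and 10), and normalizing q and k bounds the dot product, which helps keep attention logits from growing unstably during training.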

Two API variants exist. The standard M2.7 runs at approximately 60 tokens per second according to MiniMax. The M2.7-highspeed variant claims around 100 tokens per second with identical output quality at double the price.

One thing to be clear about: M2.7 is text-only. No image input, no audio processing, no video understanding. MiniMax offers multimodal capabilities through separate products like Hailuo AI for video and Speech 2.8 for audio, but M2.7 itself processes nothing but text. In April 2026, with GPT-5.4, Gemini 3.1 Pro, and Claude Opus 4.6 all handling images and documents natively, this is a meaningful limitation.

The Numbers: What M2.7 Gets Right

The cost story is the headline, and it holds up under scrutiny.

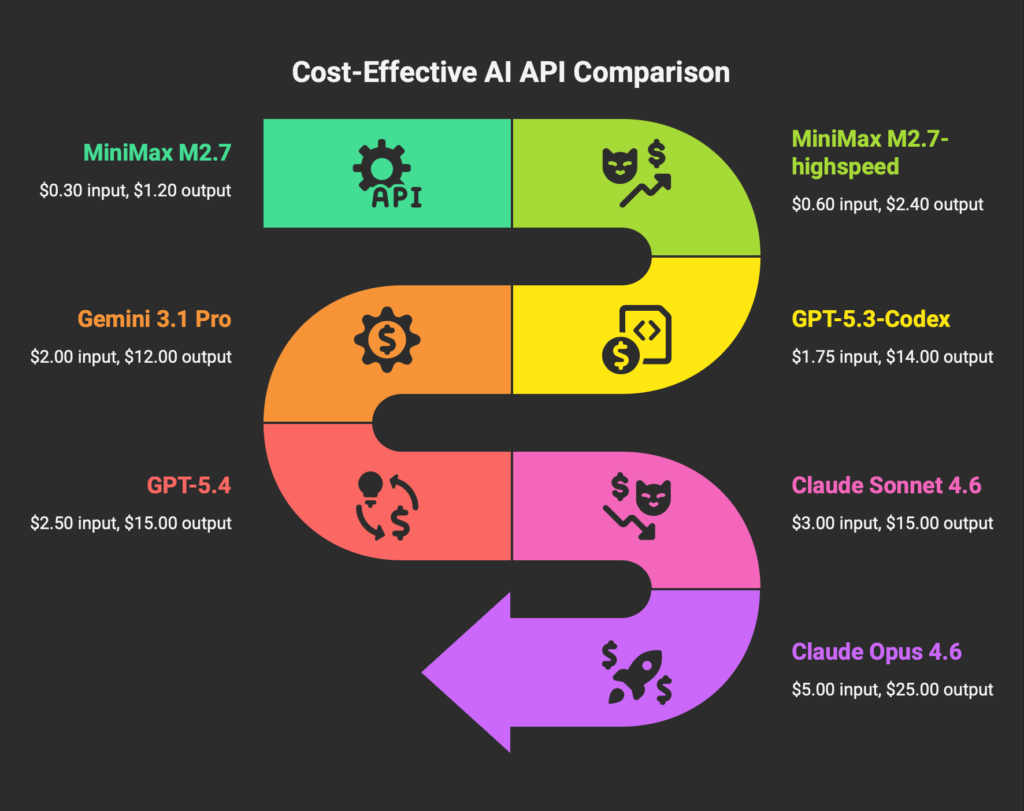

API Pricing (Pay-as-You-Go, verified on official pricing page and confirmed by Artificial Analysis):

| Model | Input / 1M Tokens | Output / 1M Tokens |
|---|---|---|
| MiniMax M2.7 | $0.30 | $1.20 |
| MiniMax M2.7-highspeed | $0.60 | $2.40 |
| GPT-5.3-Codex | $1.75 | $14.00 |
| Gemini 3.1 Pro | $2.00 | $12.00 |
| GPT-5.4 | $2.50 | $15.00 |
| Claude Sonnet 4.6 | $3.00 | $15.00 |
| Claude Opus 4.6 | $5.00 | $25.00 |

The pricing gap is not marginal. M2.7 costs roughly 17 times less than Claude Opus 4.6 on input and 21 times less on output. M2.7 also includes automatic context caching that drops the cost of reused prompts and codebases to $0.06 per million tokens, with no configuration required. For agent pipelines that feed the same system prompt and file state into the model repeatedly, this sharply limits the runaway cost growth that plagues long-running sessions with proprietary models.
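A minimal sketch of what caching does to a long agent session, using the M2.7 prices from the table and the $0.06 cached rate. The billing rules here are assumptions, not MiniMax's documented behavior: the first turn pays the normal input rate for the shared prefix, and every later turn is a full cache hit on it.

```python
# M2.7 list prices in $ per 1M tokens (pricing table and caching rate above).
PRICE_IN = 0.30
PRICE_CACHED = 0.06
PRICE_OUT = 1.20


def session_cost(turns, prefix_tokens, new_tokens, output_tokens, cached=True):
    """Dollar cost of an agent session that resends the same prefix every turn.

    Hypothetical billing model: turn 0 pays full input price for the prefix,
    later turns pay the cached rate for it when `cached` is True.
    """
    total = 0.0
    for turn in range(turns):
        prefix_rate = PRICE_CACHED if (cached and turn > 0) else PRICE_IN
        total += prefix_tokens * prefix_rate
        total += new_tokens * PRICE_IN + output_tokens * PRICE_OUT
    return total / 1_000_000
```

For a 50-turn session with a 100,000-token prefix, 2,000 new input tokens and 1,000 output tokens per turn, this gives about $1.59 without caching and about $0.41 with it. The prefix dominates the bill, which is why caching matters more than the headline input price for agent workloads.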

At list prices, a team generating 10 million output tokens per day would spend about $91,000 a year on Claude Opus 4.6 and about $4,400 on M2.7. Input-heavy workloads cost less on both, but the ratio stays at roughly 17 to 21 times. Scale that to a busy agent fleet and it is the difference between AI inference being a major budget line item and being a rounding error.
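A small calculator for annualized spend at the list prices in the pricing table, for any daily mix of input and output tokens. It ignores caching, batch discounts, and negotiated rates, so treat the results as a list-price ceiling.

```python
# $ per 1M tokens (input, output), from the pricing table above.
PRICES = {
    "MiniMax M2.7": (0.30, 1.20),
    "Claude Opus 4.6": (5.00, 25.00),
}


def annual_cost(model, input_per_day, output_per_day, days=365):
    """Yearly list-price cost in dollars for a fixed daily token volume."""
    p_in, p_out = PRICES[model]
    daily = (input_per_day * p_in + output_per_day * p_out) / 1_000_000
    return daily * days
```

For 10 million output tokens a day, `annual_cost` returns 91,250 for Opus and 4,380 for M2.7; for 10 million input tokens a day, 18,250 and 1,095.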

Benchmark Performance (verification level noted for each):

| Benchmark | M2.7 Score | Verification |
|---|---|---|
| AA Intelligence Index v4.0 | 50 | Independently measured by Artificial Analysis |
| SWE-Pro | 56.22% | Independent benchmark, but score is MiniMax-evaluated |
| SWE-bench Verified | 78% | MiniMax-evaluated |
| Terminal Bench 2 | 57.0% | MiniMax-evaluated |
| VIBE-Pro | 55.6% | MiniMax internal benchmark |
| GDPval-AA (Elo) | 1495 | Independently verified by Artificial Analysis |
| MLE-Bench Lite (medal rate) | 66.6% | MiniMax-evaluated |
| LMSYS Chatbot Arena | 1447 | Independent |

The Artificial Analysis Intelligence Index score of 50 is the single most reliable number in this table. It was independently measured by a third party using a composite of 10 evaluations. That score represents an 8-point jump from M2.5’s score of 42, which is significant progress for a one-month iteration. For reference, Gemini 3.1 Pro and GPT-5.4 lead the index at 57, and Claude Opus 4.6 scores 53.

The most compelling independent evidence comes from Kilo Code’s testing lab. Kilo created three TypeScript codebases and ran both M2.7 and Claude Opus 4.6 through identical prompts in Code mode. M2.7 found all 6 bugs and all 10 security vulnerabilities, matching Opus on detection. Opus produced more thorough fixes and roughly twice as many tests. The total cost for M2.7 was $0.27 versus $3.67 for Opus. Kilo described the result as 90% of the quality at 7% of the cost.

Kilo Code reports that M2.5 is currently the most-used model across every mode on its platform, not only Code mode, ahead of Claude Opus 4.6, GLM-5, and GPT-5.4. M2.7 is the first version where Kilo felt the right comparison was a frontier model rather than another open-weight one.

The Catches (Plural)

The pricing and coding performance are real. What follows is where the story gets complicated.

Catch 1: The License Is Not Open Source

MiniMax describes the M2.7 weight release as “open source.” The HuggingFace page tags the license as “modified-mit.” Neither description is accurate.

We opened the LICENSE file on HuggingFace (uploaded approximately 12 hours before this article was published). Line 1 reads: NON-COMMERCIAL LICENSE. Line 2 clarifies: non-commercial use is permitted based on MIT-style terms, but commercial use requires prior written authorization from MiniMax.

If your commercial use is approved, you are required to prominently display “Built with MiniMax M2.7” in your product. Any commercial use without prior written authorization is prohibited.

The license also includes an appendix of prohibited uses: generating illegal content, military applications, exploiting minors, generating disinformation, and promoting discrimination.

The M2.7 open-weight release is not open source by any standard definition (OSI, FSF, or common developer understanding). It is a non-commercial license with a commercial gate. If you plan to use M2.7 weights in any product or service that generates revenue, you need written permission from MiniMax first. Previous models in the M2 series (M2 and M2.5) shipped under MIT or modified-MIT terms that were less restrictive than M2.7's. M2.7 represents a clear tightening of licensing conditions.

This matters for the broader narrative. The M2 series went from MIT (M2, October 2025) to progressively more restrictive terms with each release. Developer reaction on X has been strong. Multiple posts with significant engagement criticized MiniMax for calling the release “open source” while shipping non-commercial terms. One developer noted that the license file was edited approximately 6 hours before the weight release, suggesting the commercial restriction was a late addition.

The pattern suggests that MiniMax treats openness as a strategic lever, not a philosophical commitment. They tighten or loosen terms based on competitive position. This is their right, but developers building on M2.7 weights should plan accordingly. Meta’s Llama models, by comparison, ship with a community license that broadly permits commercial use.

Catch 2: The Benchmark Trust Problem

The SWE-Pro score of 56.22% is the number most frequently cited in coverage of M2.7. That number needs context.

SWE-bench Pro is an independent benchmark maintained by external researchers. But MiniMax ran M2.7 on SWE-Pro using their own infrastructure and published the result themselves. No independent lab has replicated the exact 56.22% figure. The M2.5 model card disclosed that MiniMax runs SWE-bench evaluations using Claude Code as scaffolding, with the default system prompt overridden, averaging results across multiple runs. If M2.7 was evaluated the same way, the score reflects a setup MiniMax tuned for its own model rather than a standardized harness.

VIBE-Pro, which MiniMax cites as evidence of end-to-end project delivery capability (55.6%), is explicitly described as an internal benchmark in MiniMax’s own documentation.

Two confirmed regressions complicate the “steady improvement” narrative. Artificial Analysis independently measured an 11 percentage point drop on τ²-Bench (Telecom) compared to M2.5. VentureBeat reported that M2.7 scored worse than M2.5 on BridgeBench vibe-coding tasks, though specific numbers were not published.

There is also a verbosity concern. Artificial Analysis noted that M2.7 generated approximately 87 million output tokens during Intelligence Index evaluation, 55% more than M2.5. A model that produces significantly more output can score higher on benchmarks that reward completeness, and that verbosity translates directly into higher costs and slower response times in production.
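The arithmetic below shows how a verbosity multiplier eats into the per-task price gap. The 1.55 multiplier in the usage note is hypothetical when applied against Opus: the article's 55% figure compares M2.7 with M2.5, not with Opus, so measure your own ratio on your own tasks.

```python
def output_cost_advantage(price_cheap, price_expensive, verbosity):
    """How many times cheaper the cheap model's output is per task.

    verbosity = cheap model's output tokens / expensive model's output tokens
    on the same task (a ratio you would measure yourself).
    """
    return price_expensive / (price_cheap * verbosity)


# Output prices in $ per 1M tokens, from the pricing table.
M27_OUT, OPUS_OUT = 1.20, 25.00

# Implied M2.5 output on the same Intelligence Index run, in millions of tokens.
M25_EVAL_TOKENS_M = 87 / 1.55   # about 56
# M2.7's 87M output tokens at list output price, in dollars (output only).
M27_EVAL_COST = 87 * M27_OUT    # about $104
```

At equal verbosity M2.7's output is about 20.8 times cheaper than Opus; if M2.7 wrote 1.55 times as many tokens, the gap would shrink to about 13.4 times. Verbosity narrows the advantage without erasing it, but it still costs latency.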

The problem is not that the benchmarks are fabricated. The problem is that the strongest numbers come from evaluations that MiniMax controls, while the independently verified numbers tell a more moderate story. The AA Intelligence Index places M2.7 at 50, behind Opus (53), GPT-5.4 (57), and Gemini 3.1 Pro (57). That is a strong score for a model at this price point. It is not frontier.

Catch 3: What M2.7 Cannot Do

M2.7 processes text and outputs text. Nothing else.

In a development workflow that now routinely involves screenshots of error messages, UI mockups, PDF documents with embedded diagrams, video walkthroughs, and voice notes, a text-only model covers only a portion of the work. GPT-5.4 handles text, images, and audio with a 1.1 million token context. Gemini 3.1 Pro processes text, images, audio, video, and code repositories with a 1 million token context. Claude Opus 4.6 supports images and documents with up to 1 million tokens in beta.

M2.7’s 204,800-token context window is adequate for most single-repository tasks but is not a differentiator in 2026. It is roughly one-fifth the size of what competitors offer.

Speed also needs calibration. MiniMax’s official documentation claims approximately 60 tokens per second for the standard model. Artificial Analysis independently measured 43 to 45 tokens per second. The highspeed variant claims approximately 100 tokens per second, but independent benchmarks for that variant are not yet available. By comparison, Gemini 3.1 Pro runs at 127 tokens per second and GPT-5.4 at 81.
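A quick way to translate these throughput figures into wall-clock time for a typical response. This counts decode time only; queueing and time to first token are ignored, so real latency will be higher.

```python
# Decode throughput in tokens per second, as quoted in the text above.
THROUGHPUT = {
    "M2.7, MiniMax claim": 60,
    "M2.7, Artificial Analysis (low end)": 43,
    "M2.7-highspeed, MiniMax claim": 100,
    "GPT-5.4": 81,
    "Gemini 3.1 Pro": 127,
}


def decode_seconds(output_tokens, tokens_per_second):
    """Streaming time for the output alone."""
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    for name, tps in THROUGHPUT.items():
        print(f"{name:40s} {decode_seconds(2_000, tps):6.1f} s for 2,000 tokens")
```

For a 2,000-token answer, the measured 43 tokens per second works out to roughly 47 seconds versus about 16 seconds on Gemini 3.1 Pro, a gap that compounds across multi-step agent loops.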

The Kilo Code testing also revealed a qualitative gap worth noting. While M2.7 detected bugs and vulnerabilities at the same rate as Opus, the fixes were less thorough. Opus produced 41 integration tests with a dedicated test database and proper cleanup. M2.7 produced 20 unit tests that validated schemas and handler functions directly, without testing the API endpoints through HTTP. In a production environment where regression prevention matters, that gap is not trivial.

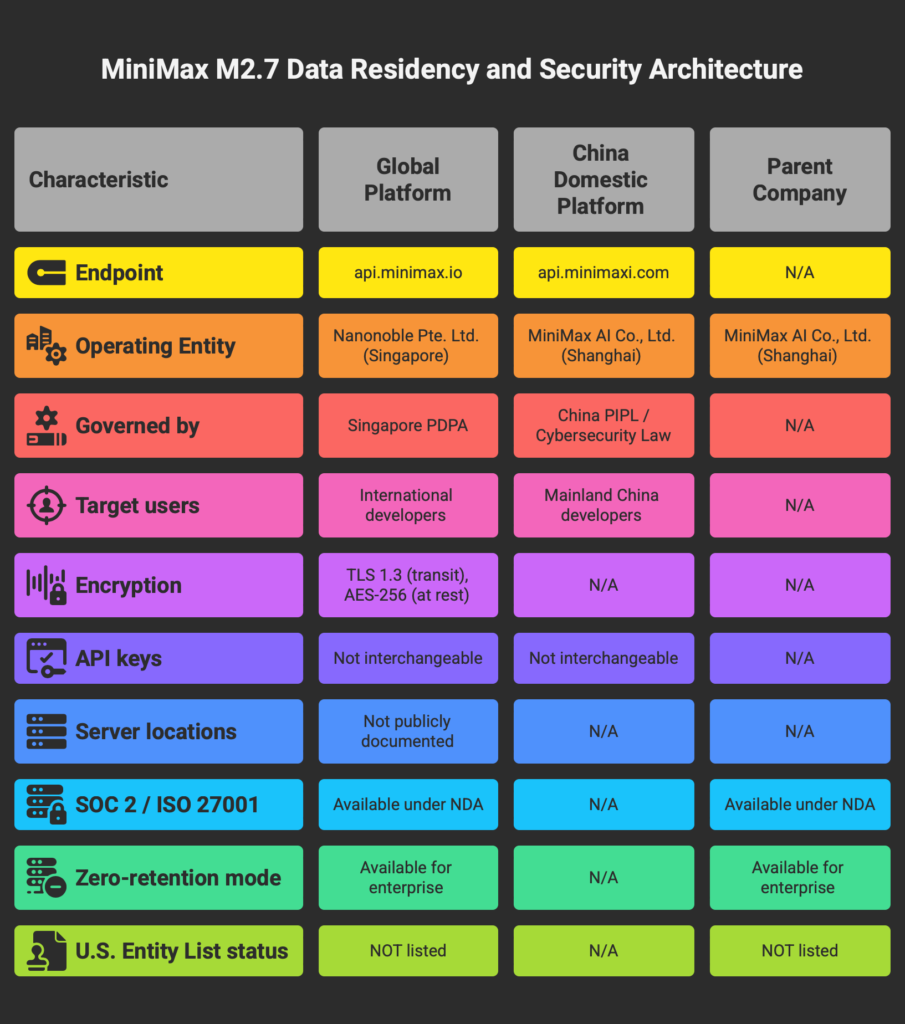

Catch 4: Data Residency, Security, and China Risk

MiniMax operates two separate API endpoints. The global platform at api.minimax.io is run by Nanonoble Pte. Ltd., a Singapore-registered entity. The Chinese domestic platform at api.minimaxi.com serves the mainland market. API keys are not interchangeable between the two.

This separation is structurally different from DeepSeek, where all API traffic routes through Chinese servers and falls directly under Chinese data protection law. MiniMax’s global platform is governed by Singapore’s Personal Data Protection Act (PDPA), which provides a degree of jurisdictional separation.

The parent company is still MiniMax AI Co., Ltd., headquartered at No. 65 Guiqing Road, Xuhui District, Shanghai. The physical server locations for the global API endpoint are not publicly documented. MiniMax offers SOC 2 and ISO 27001 audit reports, but only under NDA. Zero-retention modes are available for enterprise customers.

MiniMax is not currently on the U.S. Entity List. Zhipu AI (z.ai), another major Chinese AI lab, was added to the Entity List in 2025. If U.S.-China AI restrictions expand further and MiniMax is individually targeted, enterprises using the MiniMax API would face immediate supply chain disruption. This is not a prediction; it is a procurement risk that legal and compliance teams will flag during vendor evaluation.

China’s National Intelligence Law (Article 7) requires organizations and citizens to support and cooperate with national intelligence work. The practical scope and extraterritorial applicability of this provision are debated, but the ambiguity itself creates friction in enterprise procurement reviews. For development teams handling PII, PHI, or any data subject to GDPR or HIPAA, using the MiniMax cloud API introduces compliance questions that do not exist with Anthropic or OpenAI.

The cleanest path for data-sensitive teams is to self-host the open weights behind their own firewall. No data leaves the organization. But self-hosting a 230-billion-parameter model requires serious hardware: approximately 461 GB of VRAM for unquantized FP16 (7 H100 80GB GPUs), or 115 to 130 GB for an aggressively quantized 4-bit version. NVIDIA has released optimized kernels for vLLM and SGLang that deliver up to 2.7x throughput improvement on Blackwell Ultra GPUs, but the capital investment remains substantial.
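A back-of-the-envelope sketch of the memory math behind these hardware figures. It counts weights only; the `overhead` fraction is an arbitrary stand-in for KV cache, activations, and runtime buffers, and tensor-parallel layout constraints are not modeled.

```python
import math

# Bytes per parameter for the weights alone (FP16 and BF16 are both 2 bytes).
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}

TOTAL_PARAMS = 230e9  # the article's figure; HuggingFace lists 229B


def weight_gb(params, dtype):
    """Memory for the weights in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[dtype] / 1e9


def min_gpus(params, dtype, gpu_gb=80, overhead=0.0):
    """Smallest GPU count whose combined memory holds the weights plus `overhead`.

    overhead=0.0 gives a hard lower bound, not a deployable configuration.
    """
    need_gb = weight_gb(params, dtype) * (1.0 + overhead)
    return math.ceil(need_gb / gpu_gb)
```

With no overhead, BF16 weights alone (460 GB) need six 80 GB cards; a 20% allowance pushes that to seven, in line with the figure above. A 4-bit version needs 115 GB before overhead, and the F8_E4M3 weights on HuggingFace need about 230 GB, or three cards as a floor.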

Who Should Use M2.7 (And Who Should Not)

Solo developer using Claude Code daily: Use M2.7 for the roughly 80% of work that is routine (building endpoints, generating tests, known-pattern refactoring) and keep Opus or GPT-5.4 for the hardest 20%. The Anthropic-compatible API means switching is mostly a matter of changing environment variables (the base URL and API key). We covered this setup process in Cursor vs Claude Code. Heavy users can save hundreds of dollars per month.
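A minimal sketch of that switch, building an environment for a Claude Code session without touching your shell profile. `ANTHROPIC_BASE_URL`, `ANTHROPIC_AUTH_TOKEN`, and `ANTHROPIC_MODEL` are Claude Code settings; the MiniMax URL path and model id below are assumptions to check against MiniMax's current documentation before use.

```python
import os

# Hypothetical values: confirm both against MiniMax's docs.
MINIMAX_ANTHROPIC_URL = "https://api.minimax.io/anthropic"
MINIMAX_MODEL = "MiniMax-M2.7"


def minimax_env(api_key, base_env=None):
    """Return a copy of the environment with Claude Code pointed at MiniMax."""
    env = dict(os.environ if base_env is None else base_env)
    env["ANTHROPIC_BASE_URL"] = MINIMAX_ANTHROPIC_URL
    env["ANTHROPIC_AUTH_TOKEN"] = api_key
    env["ANTHROPIC_MODEL"] = MINIMAX_MODEL
    return env
```

Usage: `subprocess.run(["claude"], env=minimax_env(key))` starts one session on M2.7 while every other terminal keeps its default Anthropic configuration, which makes side-by-side comparison easy.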

Startup CTO running agent pipelines at scale: This is M2.7’s primary use case. Multi-agent pipelines that run thousands of continuous tool calls will bankrupt you on Opus pricing. M2.7 provides near-frontier agentic stability at a fraction of the cost. Build a hybrid routing layer: M2.7 for core reasoning and code generation, Gemini or GPT-5.4 for any vision or multimodal tasks.
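A minimal sketch of such a routing layer. The model labels are hypothetical placeholders, and `call` and `passes` stand in for your provider client and your acceptance check (for example, running the test suite against a proposed patch). The escalation step reflects one reasonable policy: try the cheap model first, and pay frontier prices only when its answer fails.

```python
from dataclasses import dataclass

# Hypothetical model labels; substitute whatever identifiers your providers expose.
CHEAP_TEXT = "minimax-m2.7"
FRONTIER_TEXT = "claude-opus-4.6"
MULTIMODAL = "gemini-3.1-pro"


@dataclass
class Task:
    prompt: str
    has_images: bool = False  # screenshots, mockups, scanned PDFs
    hard: bool = False        # flagged by a human or by an earlier failed attempt


def route(task: Task) -> str:
    """Vision goes to a multimodal model, hard text to a frontier model, the rest to M2.7."""
    if task.has_images:
        return MULTIMODAL  # M2.7 is text-only
    if task.hard:
        return FRONTIER_TEXT
    return CHEAP_TEXT


def run_with_escalation(task, call, passes):
    """Run the routed model; if a cheap-model answer fails `passes`, retry once on the frontier model."""
    model = route(task)
    answer = call(model, task.prompt)
    if model == CHEAP_TEXT and not passes(answer):
        model = FRONTIER_TEXT
        answer = call(model, task.prompt)
    return model, answer
```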

Enterprise team with compliance requirements: Do not use the cloud API for regulated workloads. If you have the infrastructure to self-host the open weights on bare metal, and MiniMax grants the written commercial authorization the license requires, you gain data sovereignty and a near-Opus coding model inside your firewall. If self-hosting is not feasible, the regulatory risk of routing sensitive data through a Chinese-parented API provider is unlikely to clear most security audits.

Open-source enthusiast who self-hosts: Adopt with caution. The coding performance is impressive and the weights are available. But the non-commercial license means you cannot build a commercial product on these weights without MiniMax’s written approval. And the hardware floor is steep: you need multiple data-center GPUs or a very high-end Mac with 256GB+ unified memory.

Non-technical user for content and business tasks: Skip M2.7. It is a coding and agent tool optimized for structured logic, not prose generation or creative work. Claude Sonnet 4.6 and GPT-5.4 provide vastly better user interfaces, broader capabilities, and seamless integration with Google Workspace and Microsoft Office.

The Self-Evolution Story: Compelling, Not Yet Proven

MiniMax’s most ambitious claim is that M2.7 “participated in its own evolution” through an internal framework called OpenClaw.

The described process works like this: M2.7 was deployed within a research agent harness that manages data pipelines, training environments, and evaluation infrastructure. The model ran autonomous optimization loops for over 100 rounds, analyzing failure trajectories, modifying scaffold code, running evaluations, and deciding whether to keep or revert changes. MiniMax reports that this process delivered a 30% performance improvement on internal evaluation sets and that M2.7 handles 30% to 50% of the reinforcement learning research workflow.
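The "keep or revert" step described above is, at its core, a greedy optimization loop. The sketch below is a generic hill-climbing loop, not MiniMax's harness: `propose` and `evaluate` are placeholders for what would, in MiniMax's description, be a model-written edit to scaffold code and an evaluation run.

```python
import random


def keep_or_revert(config, propose, evaluate, rounds=100, seed=0):
    """Propose a change, score it, and keep it only if it beats the best score so far."""
    rng = random.Random(seed)
    best, best_score = config, evaluate(config)
    log = []
    for r in range(rounds):
        candidate = propose(best, rng)
        score = evaluate(candidate)
        kept = score > best_score
        if kept:
            best, best_score = candidate, score  # keep the change
        # otherwise revert: `best` is left untouched
        log.append((r, score, kept))
    return best, best_score, log
```

The structure also shows where the caveats below come from: the loop can only ever climb the score that `evaluate` reports, so the result is only as meaningful as the internal evaluation sets behind it.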

On MLE-Bench Lite (22 machine learning competitions from OpenAI), M2.7 achieved a 66.6% average medal rate across three 24-hour trials, earning 9 gold medals autonomously. That places it behind Opus 4.6 (75.7%) and GPT-5.4 (71.2%), and tied with Gemini 3.1 Pro (66.6%).

The narrative is compelling, but important caveats apply. The 30% improvement figure comes from internal evaluation sets that have not been published or independently replicated. The self-evolution process operates within a rigid programmatic harness, not as unconstrained self-modification. And OpenAI has made similar claims about GPT-5.3-Codex contributing to its own training and deployment pipeline.

The honest assessment: what MiniMax describes is a sophisticated automated evaluation and optimization loop, not autonomous self-improvement in the way the marketing implies. The engineering is impressive. The results are measurable. But calling it “self-evolution” leans into anthropomorphism that the underlying mechanics do not fully support.

Access Methods

M2.7 is available through multiple channels:

API (Pay-as-You-Go): Direct access at api.minimax.io with Anthropic SDK compatibility (recommended) and OpenAI SDK compatibility. Token Plans range from $100/year (Starter) to $1,500/year (Ultra High-Speed).

OpenRouter: Available as minimax/minimax-m2.7 at the same pricing as the first-party API. Ranked 4th on OpenRouter by usage volume as of March 2026.
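A minimal standard-library sketch of calling M2.7 through OpenRouter's OpenAI-style chat completions endpoint. The model slug comes from the listing above; building and sending are split so the request can be inspected without network access.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(api_key, prompt, model="minimax/minimax-m2.7"):
    """Build (but do not send) a chat completion request for OpenRouter."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def send(request):
    """Send the request and return the first choice's text."""
    with urllib.request.urlopen(request) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

Because the request shape is the same for every model on OpenRouter, swapping M2.7 for a frontier model is a one-argument change, which suits the hybrid routing approach described earlier.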

Ollama: Cloud-hosted model via ollama launch openclaw --model minimax-m2.7:cloud. Integrates with Claude Code, OpenCode, and OpenClaw.

HuggingFace (Open Weights): Available at huggingface.co/MiniMaxAI/MiniMax-M2.7. Model size: 229B parameters as listed on HuggingFace (the 230B figure used elsewhere is rounded), available in F32, BF16, and F8_E4M3 tensor types. 18 community quantizations already available.

Self-Hosting: Officially supported via SGLang, vLLM, HuggingFace Transformers, ModelScope, and NVIDIA NIM. NVIDIA has released optimized FP8 MoE kernels that deliver up to 2.7x throughput improvement on Blackwell Ultra GPUs.

MiniMax Agent: Free tier available at agent.minimax.io with trial credits. No API key or development experience required.

Compatible coding tools include Claude Code, Cursor, RooCode, Kilo Code, Cline, Codex CLI, OpenCode, Droid, TRAE, and Grok CLI. For a full breakdown of how these tools compare, see our Best AI Coding Assistant 2026 guide.

FSR Verdict

MiniMax M2.7 is not the open-source Claude killer that today’s social media posts suggest. It is a capable, extremely cheap, text-only coding model with non-commercial licensing restrictions, vendor-controlled benchmark scores, and the geopolitical complexity that comes with any Chinese AI provider.

The pricing advantage is real and independently verified. The coding ability is real and independently tested by Kilo Code. The self-evolution narrative is interesting but not independently validated.

What makes M2.7 worth paying attention to is not any single benchmark number. It is the trajectory. MiniMax went from M2 to M2.7 in six months, and each version has closed measurable ground against frontier models that cost an order of magnitude more. If that pace continues, the next version could force a pricing response from Anthropic and OpenAI that benefits everyone.

For now, M2.7 earns a conditional recommendation. Use it where it excels: high-volume coding agent pipelines where cost matters more than absolute quality. Pair it with a frontier model for the hardest problems and any task that requires vision. Read the license before you ship anything commercial. And verify MiniMax’s benchmark claims against your own workloads before committing.

The strongest case for M2.7 is also the simplest one. In Kilo Code's test, it did for $0.27 what cost $3.67 on Claude Opus. For a lot of teams, that math ends the conversation.

All pricing verified on official vendor pages as of April 12, 2026. Benchmark data sourced from Artificial Analysis, Kilo Code, MiniMax official documentation, and NVIDIA technical blog. License terms verified directly from the HuggingFace LICENSE file. Company financials sourced from HKEX filings and Reuters.