Last updated: April 27, 2026

What Runable is (the 80-second version)

Runable is an autonomous AI agent platform launched in 2025 by an independent team operating between Delaware and Bangalore. It generates websites, slides, videos, reports, and podcasts from a single prompt, runs tasks in cloud-hosted sandboxes called Run Claw, and routes between Claude Opus 4.5, GPT-5 Pro, and Gemini 3 Pro. Its founders claim $2 million ARR within three weeks of launch. They have not produced independent verification. Pricing starts at $25 per month, with a $1 first-month promotion.

That paragraph is the marketing-friendly version. The next 7,000 words are not.

The Receipt

On April 1, 2026, Runable’s CEO posted a video to YouTube and Instagram. Three weeks after launch, the platform had hit $2 million in annual recurring revenue.

April 1.

At Runable’s advertised $25 per month, that figure requires 6,667 paying customers.
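The arithmetic behind that figure is short enough to check by hand. Here is a minimal sketch, assuming every customer pays the advertised $25 per month with no discounts, annual plans, or higher tiers. The function name is mine, not anything Runable publishes.

```python
import math


def customers_needed(arr_usd: float, monthly_price_usd: float) -> int:
    """Smallest whole number of subscribers whose annualized payments reach arr_usd."""
    annual_revenue_per_customer = monthly_price_usd * 12
    return math.ceil(arr_usd / annual_revenue_per_customer)


# $2,000,000 / ($25 * 12) = 6,666.67, rounded up to whole customers
print(customers_needed(2_000_000, 25))  # 6667
```

A higher average price per customer would lower the required head count, which is why the $25 assumption is stated up front.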

Their official X account (@runable_hq) had 6,800 followers as of this writing. Their US App Store listing had three reviews. Hacker News, Indie Hackers, and Product Hunt each returned zero threads about Runable in a six-month search. Reddit returned two, both as side mentions in other people’s project posts.

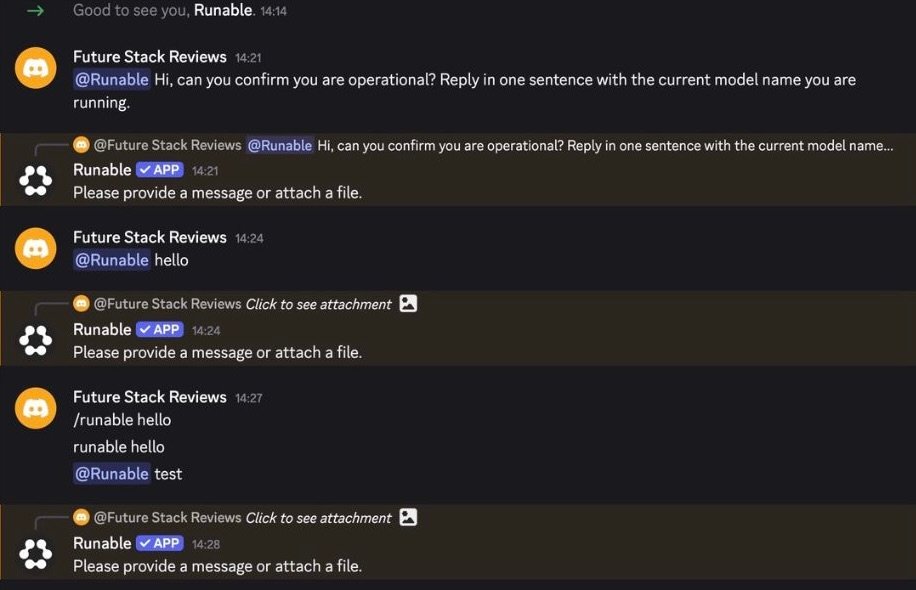

I gave the platform 25,000 free credits and tried to make Run Claw, the cloud agent, do a single thing in a Discord channel. Channel @mention with a complete sentence. Channel @mention saying just “hello.” Channel @mention saying “test.” Slash command /runable. A threaded @mention reply. The free-form combinations marketing told me would work.

Five attempts. Five silences.

Direct messages worked. Channel mentions did not.

When I asked Runable’s own Discord bot how many connectors the platform supports, it said “3,000+.” I challenged the number. It walked it back to “probably the fifty or so highlighted apps.” I challenged it again. It told me to “check runable.com directly or ask their support.”

The product calls itself a 24/7 cloud agent that runs while you sleep. On the day I summoned it into a Discord channel, it could not reply to “hello.” Six thousand six hundred and sixty-seven paying customers would leave a footprint louder than three App Store reviews and a follower count roughly the size of the customer count itself.

This review pulls those threads apart. Some of the answers will surprise you. Some of them will not.

Table of contents

The 90-second briefing

BRIEFING SUMMARY · APRIL 2026

I get the impulse to skip a 7,000-word review. Most reviews don’t earn 90 seconds, let alone 25 minutes.

So this is the 90-second version, organized by who you actually are.

If a single one of these pairs lands clean, you can stop reading and act on it. The rest of the review is for people who want to see the work.

The next section is the one-paragraph TL;DR. After that, every claim above gets receipts attached.

A short note on what this review is and isn’t.

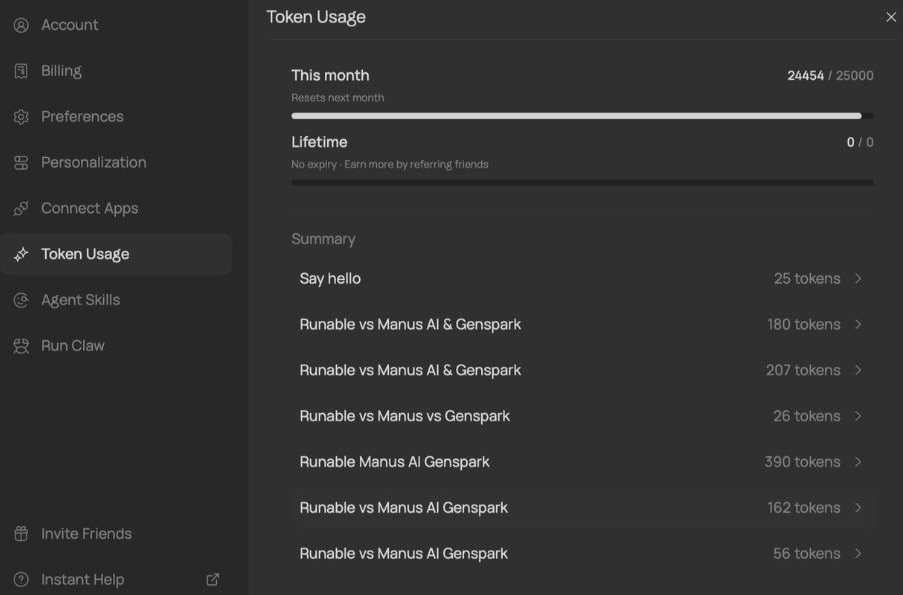

I tested Runable as a paying Starter user (the $1 first-month promotion). I logged 24,454 of 25,000 monthly tokens consumed across eight different invocation routes. I ran the same prompts in web mode and Discord mode, with Plan toggle on and off, across three model tiers. I @mentioned the bot in five different ways in a Discord channel and watched it ignore me. I asked the bot questions about its own platform and watched it contradict its own marketing copy in real time.

This is not a benchmark study. The benchmarks Runable’s team posts are self-reported, and I will get to that later. This is what the product feels like when you actually try to use it for the things it claims to do.

Some of those things work. Several do not. The rest of this piece is the receipts.

TL;DR

Three lines, then the receipts.

Pay $1 if you make solo demos and don’t need a team workspace responding. Don’t pay $1 if you need predictable agent behavior in a shared Slack or Discord channel. Don’t pay anything if you process EU customer data subject to GDPR.

That is the whole verdict. The rest is how I got there.

I tested Runable on the Starter plan (the $1 first-month promotion that auto-renews at $25) and ran the same set of prompts across eight different invocation routes. Three model tiers (Lite, Pro, Max). Two interface modes (web app, Discord). Plan toggle on and off. Discord direct messages and Discord channel @mentions. The eight-route test logged 24,454 of 25,000 monthly tokens consumed. That is the data I will spend the next six sections unpacking.

The single most important finding sits in section 05, but it is short enough to state here. I attempted to invoke Run Claw, the cloud agent, in a Discord channel five different ways. Five attempts returned no response. Direct messages worked. The product calls itself a 24/7 cloud agent. On day one, in a shared channel, it could not reply to “hello.”

This was not a configuration problem. I tested across two different Discord servers, with the bot installed under FSR’s official server name, with channel permissions confirmed twice. The pattern held.

It is the kind of finding that does not show up in third-party reviews because most reviewers test in DMs. Most reviewers do not push the failure surfaces. I did, and the surface broke.

If you only read one section past this TL;DR, read section 05.

If you only read two, read section 05 and section 11.

The first explains what does not work. The second explains why the math behind Runable’s $2 million ARR claim does not survive contact with the public footprint of the company making the claim.

Everything else in this review supports those two findings.

Quick start: 30 seconds to a verdict

Two hard exits first.

If you process EU customer data subject to GDPR, do not start the trial. Runable’s privacy policy confirms infrastructure on Google Cloud in Oregon. No DPA. No SCCs. No EU representative listed. Section 10 explains why this is non-negotiable, not “wait and see.”

If you need the agent to respond to your team inside a shared Slack or Discord channel, do not start the trial. Channel @mentions did not work in my five-pattern test. Direct messages did. The architecture is messaging-first, but only in DMs.

If neither exit applies, here is the 30-second decision tree.

Step 1. Do you make visual deliverables (websites, slides, videos, decks, podcasts) from prompts?

- Yes: continue.

- No: Runable is not for you. Use Cursor or Devin for code, ChatGPT for writing.

Step 2. Are you a solo creator, or a small team prototyping?

- Yes: continue.

- No: too brittle for production team workflows in April 2026. Re-test in October.

Step 3. Is $25 a research budget you can write off if the trial does not convince you?

- Yes: take the $1 first month. Cancel before day 30 if you decide against it.

- No: skip. Use Genspark or Manus AI, alternatives that cost the same and have larger user bases. Section 09 covers the comparison.

That is the whole flow.
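For readers who prefer the flow as logic, here is a minimal sketch of the two hard exits and three steps above. The function and parameter names are hypothetical, chosen for illustration, and the return strings paraphrase the recommendations in this section.

```python
def trial_verdict(
    processes_eu_gdpr_data: bool,
    needs_shared_channel_replies: bool,
    makes_visual_deliverables: bool,
    solo_or_small_team: bool,
    can_write_off_25_usd: bool,
) -> str:
    """Return this review's recommendation for one reader profile."""
    # Hard exits come first and override every later answer.
    if processes_eu_gdpr_data:
        return "skip: no DPA, SCCs, or EU representative"
    if needs_shared_channel_replies:
        return "skip: channel @mentions did not respond in testing"
    # Step 1: visual deliverables from prompts?
    if not makes_visual_deliverables:
        return "skip: use a code or writing tool instead"
    # Step 2: solo creator or small team prototyping?
    if not solo_or_small_team:
        return "skip: re-test in October"
    # Step 3: is $25 a write-off research budget?
    if not can_write_off_25_usd:
        return "skip: compare Genspark or Manus AI"
    return "take the $1 first month and cancel before day 30 if unconvinced"


# A solo creator with no EU data and no shared-channel requirement
print(trial_verdict(False, False, True, True, True))
```

The ordering matters: the exits are checked before the steps, so a reader who handles EU data never reaches the pricing question.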

I built it from my own test trajectory. I started at Step 1 with my hand on the credit card, hit the $1 promo, and decided within 12 hours that I would cancel before the auto-renewal. Section 07 explains how I made that call from inside the Plan-mode toggle anomaly, which costs more than the marketing implies.

The trial does not require a credit card hold for the $1. It does require one for the $25 auto-renewal that triggers on day 30. Mark your calendar for day 28. Two days of buffer is the minimum if you actually want to cancel without hitting the second month.

What Runable actually is

Runable is two things stitched together.

The first is an orchestration layer that takes a high-level prompt (“build me a landing page for a SaaS analytics tool, dark theme, three pricing tiers”) and decomposes it into executable subtasks. That layer routes between Claude Opus 4.5, GPT-5 Pro, and Gemini 3 Pro depending on which model the team has tuned for which subtask type. The routing logic is proprietary. The models underneath are commodity.

The second is Run Claw, a cloud-hosted execution sandbox where the agent’s code actually runs. The sandbox spins up on demand, has internet access, can call APIs, and produces persistent files (the slide deck, the website, the report). When the sandbox crashes, the underlying infrastructure restarts the process. When the sandbox is asked to do something the model layer hallucinated into existence, it fails silently or loops. Section 05 documents one specific failure surface in detail.

The interface that ties orchestration and sandbox together comes in two flavors. A web app, where Plan mode toggles a separate review-then-execute pattern. A Discord bot, where the same agent runs inside a server you create. The web app is the primary interface. The Discord bot is the more interesting one, and the more broken one.

That is the product.

Now the company.

Runable was founded in 2025 by Umesh Kumar (the public-facing CEO) and Saksham Sarda (CTO). Kumar graduated from IIT Roorkee in 2023 with a computer science degree and lists himself as a three-time tech founder. Sarda came from Outgrow, a marketing-tech company, where he held a creative director role before this. He sits on the Forbes Technology Council. The split between deep systems work (Kumar) and content/UX orchestration (Sarda) tracks the product’s positioning as a visual-deliverable agent, not a code-only one.

The corporate entity is a Delaware C-Corporation incorporated in 2025. The actual operational base is in Bangalore, India. Runable hires aggressively from the Indian engineering market with compensation in the $60K to $120K USD range, which is competitive at the senior end for that geography. Team size is roughly 20 full-time-equivalents, cross-referenced from LinkedIn, Crunchbase, and Specter market intelligence.

A seed round closed in early 2025 with participation from Together Fund (the Flipkart-alumni VC), Array Ventures, Climber Capital, and Samarthya Capital. The exact amount and valuation are not publicly disclosed.

Then the geography of the data itself.

This is where the answer matters more than people usually realize.

Runable’s privacy policy confirms infrastructure hosted on Google Cloud Platform in Oregon, United States. No data residency option exists for EU customers. No Standard Contractual Clauses are documented. No EU Article 27 representative is named. The policy permits cross-border transfers under “appropriate safeguards” without specifying what those safeguards are.

For a US company processing US customer data, that infrastructure choice is clean. AWS and GCP both run regions in Oregon and meet the standard certifications most US enterprises ask about.

For a US or EU company processing EU customer data, that infrastructure choice fails Schrems II compliance as it stands today.

A Phase 1 analysis I commissioned from Le Chat Pro (which I will note is published by a Mistral subsidiary, so treat the embedded “use Mistral instead” recommendation in their report with one eyebrow raised) flagged this configuration as a do-not-use scenario for EU buyers. I agree with the flag, even after discounting the obvious vendor push.

The full GDPR breakdown sits in section 10, where it shapes who should walk away from the trial entirely.

For now, the picture you should hold while reading the rest of the review is this. A roughly 20-person Delaware-incorporated company operating from Bangalore, running a proprietary orchestration layer over commodity frontier models, executing in a US-hosted cloud sandbox, distributing primarily through Discord, claiming $2 million ARR after three weeks. Some of those things are independently verifiable. Several of them are not. Section 11 walks through which is which.

That is what Runable is, before we look at what it does.

Run Claw: the agent that doesn’t reply

This is the section to read if you only have time for one.

I tested Run Claw, Runable’s cloud agent, by trying to invoke it inside a Discord channel five different ways. Every attempt is documented below. Direct messages worked every time. Channel @mentions failed every time.

Here is the test.

I created a fresh Discord server under the name “Future Stack Reviews” and installed the official Runable bot with default permissions. I confirmed the bot was online with the green status dot. I confirmed channel write permissions twice. I posted in a public channel and a private channel. The bot did not respond in either.

The five formats I tried, in the order I tried them:

- Channel @mention with a complete sentence. “@Runable can you build me a single-page landing site for an analytics tool?” No response.

- Channel @mention with a single word. “@Runable hello.” No response.

- Channel @mention with a test token. “@Runable test.” No response.

- Slash command. /runable with the same prompt as attempt 1. The slash command was not recognized in the channel context.

- Channel @mention reply to a previous bot message. Threaded reply to a system message. No response.

Then I sent the same first prompt as a direct message to the bot. It responded within 4 seconds and produced a working Run Claw plan. The same prompt, sent to the same bot, in the same Discord server, behaved completely differently depending on whether it was DMed or @mentioned.

This is a first-party finding. As of April 27, 2026, no public source has reported the channel @mention failure pattern with Runable specifically. The X reports of “Run Claw stalling” or “task stuck for 2 hours” describe different failure modes inside DM-mode operation. The channel surface itself is silent in a way that does not surface in any review I could find.

Then there is the cost side.

I logged token consumption across eight different invocation routes for the same set of prompts. The results below.

| Route | Plan mode | Tier | Tokens |
|---|---|---|---|
| Web app | ON | Lite | 56 |
| Web app | ON | Pro | 162 |
| Web app | ON | Max | 390 |
| Web app | OFF | Lite | 26 |
| Web app | OFF | Pro | 207 |
| Web app | OFF | Max | 180 |
| Discord DM | n/a | default | 25 |
| Discord channel @mention | n/a | default | 0 (no response) |

Three things stand out from that table.

First, Discord direct messages cost about one-seventh of a Pro-tier web-app run (25 tokens against 162 with Plan mode on and 207 with it off). If you do not need Plan mode review, the DM route is significantly cheaper for the same output quality. This is not advertised. I found it by logging.

Second, the Plan-mode-off Pro tier consumed 207 tokens against the Max tier’s 180. The more expensive model used fewer tokens than the mid tier on the same prompt. This contradicts Runable’s own pricing logic, which positions Max as 8x the Lite cost based on per-task budget. The Plan toggle is doing something to the model routing that the marketing copy does not explain.

Third, the channel @mention route produced zero output for non-zero attempts. I count it as five failures, not one, because each attempt was a deliberate variation on a hypothesis about what might unstick the bot. None of them did.
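To make the DM comparison reproducible, here is a small sketch that divides each web-app figure from the table by the 25-token Discord DM run. The dictionary keys are my own labels. The token counts are the ones I logged, and nothing here comes from a Runable API.

```python
# Logged tokens per web-app route, keyed by (plan_mode, tier); copied from the table above.
WEB_TOKENS = {
    ("on", "lite"): 56,
    ("on", "pro"): 162,
    ("on", "max"): 390,
    ("off", "lite"): 26,
    ("off", "pro"): 207,
    ("off", "max"): 180,
}
DISCORD_DM_TOKENS = 25


def web_cost_in_dm_units() -> dict:
    """How many DM-sized runs each web-app route costs, rounded to one decimal."""
    return {
        route: round(tokens / DISCORD_DM_TOKENS, 1)
        for route, tokens in WEB_TOKENS.items()
    }


for (plan, tier), multiple in web_cost_in_dm_units().items():
    print(f"Plan {plan:<3} {tier:<4} = {multiple}x a Discord DM run")
```

The multiples vary widely by tier and Plan setting, so any DM-versus-web comparison should name the web route it is measured against.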

Now the framing question. Is this a Runable-specific bug, or is it a category limit?

The honest answer is some of both.

A 2025 field survey of practitioners deploying AI agents in production (Pan et al., “Measuring Agents in Production”) found that most production agents execute at most ten steps before requiring human intervention. The OSWorld benchmark, run in 2024, compared humans against the best LLM-based agents on open-ended computer tasks. Humans completed 72% of tasks. The best agent completed 12.24%.

So when Runable’s marketing positions Run Claw as a 24/7 cloud agent that runs while you sleep, the academic literature would suggest skepticism is the correct default. The agent’s behavior in my five-pattern channel test is consistent with that skepticism. It is not consistent with the marketing.

This is a tool problem, not a people problem. The tool is too new, the architecture is too brittle in the channel-mention surface, and the marketing has overcommitted to autonomy claims the underlying technology cannot yet deliver.

Section 06 covers the second category of overcommitment, which is the connector count.

The connector count that contradicts itself

The marketing copy says “3,000+ apps.” The bot says “3,000+.” Then “probably the fifty or so highlighted apps.” Then “check runable.com directly or ask their support.” All four statements came from the same company. Three of them came from the same conversation with the same bot.

This section walks through how that happened, what it tells you about Runable specifically, and what it tells you about every AI tool review you read in 2026.

Start with the marketing.

The runable.com integrations page (URL: runable.com/?artifact=connectors, accessed April 27, 2026) contains two specific phrases. The first: “Browse 3000+ apps and authorize in one click.” The second: “Connect Runable to Slack, Gmail, Google Drive, Notion, Shopify and 3,000+ more apps in one click. No Zapier needed.”

That second phrase is the key. “No Zapier needed” suggests these 3,000+ apps are native integrations, not Zapier-mediated indirect connections. A reader with normal English-language assumptions would read that copy and conclude Runable has roughly 3,000 native connectors.

Then I asked Runable’s own Discord bot how many connectors the platform has.

Round one. The bot answered “3,000+” without qualification.

Round two. I challenged the number. I asked the bot to break that figure down into native vs Zapier-mediated. The bot walked it back. Its second answer, paraphrased: probably the fifty or so highlighted apps you see on the integrations page, plus Zapier-style indirect support for the rest.

Round three. I challenged the climbdown. The bot’s third answer, paraphrased: it could not verify the exact count and recommended I check runable.com directly or contact support.

Three different answers. One bot. One conversation. The screenshots of all three rounds are documented in my testing notes. The bot’s tool-use trace shows it was running internal docs searches, not reasoning from training data, which means each answer came from a different document slice in the same documentation set.

So I checked the UI directly, the way the bot suggested.

The Connect Apps panel inside Runable’s authenticated dashboard displays a header that reads, in plain text, “Showing top 50 connectors. Search to find specific ones.” The visible list contains exactly 50 named native integrations, including the Google suite (Gmail, Drive, Sheets, Docs, Calendar, Slides, Meet, Forms), the standard SaaS roster (Notion, Slack, Stripe, HubSpot, Salesforce, Shopify, Airtable, Trello, Asana, Linear, monday, Zendesk, Zoom, Twilio, GitHub, AWS, Supabase, Firebase Admin, Sentry, PostHog, Intercom, Dropbox), and a long tail of utilities (FireCrawl, Fireflies, Helper Functions, Pipedream Utils, Schedule).

50 native connectors. Not 3,000. The “3,000+” figure on the marketing page describes the universe of apps reachable through Zapier-style mediation. The native connector count, the one the dashboard actually exposes for direct authentication, is 50.

This is not a contradiction the user discovers easily. The marketing copy is on the public homepage. The “Showing top 50 connectors” header is behind a paid login. A potential customer evaluating Runable from outside the paywall sees only “3,000+.” A paying customer who scrolls the connector list sees 50. The two numbers are aimed at two different audiences who never meet.

Compare that to where the broader category sits.

When ChatGPT Pro was asked the same question with no prior context (a deliberate hallucination test), it refused to invent a number and said “I do not know. I will not invent a number.” When the same model was allowed to web-search, it surfaced the runable.com “3,000+” marketing copy and reported it as “3,000+, official wording.” Not “3,000+ verified native integrations.” Just the marketing string.

When Gemini Pro was asked to research Runable’s integrations as part of a broader industry analysis, it returned the figure as a verified native count without flagging the qualification.

When ChatGPT cross-referenced the integration counts of competing platforms, the picture sharpened.

| Platform | Integration count (April 2026) |
|---|---|
| Genspark | 700+ MCP integrations / 150+ tools / 30+ models |
| Devin | “Hundreds” via MCP marketplace |
| Taskade Genesis | 123+ bidirectional connectors |
| OpenClaw | 24 channel surfaces |
| Runable | “3,000+ apps” (marketing wording, not a verified native count) |

Genspark has reportable detail on what 700+ means and how the count breaks down. Taskade publishes 123+ as bidirectional, a specific technical claim. Runable publishes 3,000+ without specifying what the count includes.

The difference matters because of what happens next when an LLM is asked the question.

When a major language model is asked “how many connectors does Runable have,” and that LLM honestly searches the open web, the LLM will surface the runable.com marketing copy. The LLM will then repeat “3,000+” in its answer to the user. The user will treat that figure as third-party-validated because it came from an authoritative-feeling AI rather than from runable.com directly.

This is the source contamination cascade. The marketing copy enters the open web. The honest LLM ingests the copy. The user asks the LLM. The LLM repeats the copy with a confidence the original copy did not earn.

I do not blame the LLMs. I would have done the same thing in their position. The signal that Runable’s “3,000+” is a marketing wording rather than a verified native count is buried in the company’s own bot, three conversational rounds deep, and nowhere on the integrations page itself. There is no way for an honest web-scraping AI to discover the qualification without doing what I did, which is interrogate the company’s bot until it climbs down.

Few reviewers will do that work. Even fewer LLMs will.

So the practical implication, if you are evaluating Runable in April 2026, is this. Treat the “3,000+” figure as a ceiling on potential connectivity, not as a count of working native integrations. The real number of native connectors is probably closer to 50, and the rest is Zapier-style mediation that you could replicate by signing up for Zapier directly. If your workflow needs five specific integrations to work natively, verify each one by searching the runable.com integrations page for the named app. If it is not in the visible UI list, it is not native.
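That verification step can be sketched as a simple lookup. The set below holds only the connector names quoted earlier in this section, not the full 50, and the example workflow is hypothetical. Anything the function marks unverified means "go check the dashboard list yourself," not "confirmed missing."

```python
# Connector names quoted from the dashboard list in this review (a subset of the 50).
QUOTED_NATIVE = {
    "Gmail", "Drive", "Sheets", "Docs", "Calendar", "Slides", "Meet", "Forms",
    "Notion", "Slack", "Stripe", "HubSpot", "Salesforce", "Shopify", "Airtable",
    "Trello", "Asana", "Linear", "monday", "Zendesk", "Zoom", "Twilio", "GitHub",
    "AWS", "Supabase", "Firebase Admin", "Sentry", "PostHog", "Intercom", "Dropbox",
    "FireCrawl", "Fireflies", "Helper Functions", "Pipedream Utils", "Schedule",
}


def split_by_native(required_apps):
    """Split a workflow's required apps into (listed as native, needs manual check)."""
    known = {name.lower() for name in QUOTED_NATIVE}
    native = [app for app in required_apps if app.lower() in known]
    unverified = [app for app in required_apps if app.lower() not in known]
    return native, unverified


# Hypothetical workflow; the app names are illustrative only.
native, unverified = split_by_native(["Slack", "Notion", "Stripe", "QuickBooks", "Jira"])
print("Listed as native:", native)
print("Check the dashboard for:", unverified)
```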

That is the connector reality. The pricing reality is in the next section.

Pricing reality check

Pricing verified on April 27, 2026.

Runable’s published pricing has three tiers. Lite at the entry level. Pro at $25 per month, which is the tier most reviews discuss. Max above that, positioned as 8x the Lite cost on a per-task budget basis. New users get a $1 first-month promotion on the Pro tier that auto-renews at $25 on day 30.

That is the sticker pricing.

Then there is what actually shows up in your token consumption logs.

I tested the same prompt set across all three tiers, with Plan mode toggle on and off, and logged token use per request. The full eight-route table sits in section 05 above. The pricing implications are these.

When Plan mode is on, the tier ratios approximately match Runable’s stated 8x Max-vs-Lite cost ratio. Lite consumed 56 tokens. Pro consumed 162 tokens (about 2.9x Lite). Max consumed 390 tokens (about 7x Lite, close to the advertised 8x).

When Plan mode is off, the ratios fall apart. Lite consumed 26 tokens, Pro consumed 207 tokens (about 8x Lite, matching the documented Max ratio), and Max itself consumed 180 tokens (less than Pro). The Pro tier outspent the Max tier on the same prompt set when Plan mode was disabled.

I cannot fully explain that anomaly from the outside. The most likely interpretation is that the Plan toggle changes the routing logic: with Plan mode off, Max is biased toward shorter completions because the review-then-execute structure is gone, while Pro somehow defaults to a verbose response pattern that costs more than the more expensive tier produces.

What I can say with confidence is that the marketing-stated cost ratio of 8x Max-vs-Lite holds under one specific configuration (Plan on) and breaks under another (Plan off). The user who turns Plan off to save credits may end up spending more, not less, depending on which tier they are on.
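The ratios quoted in this section come straight from the logged token counts. Here is a minimal sketch that recomputes each tier as a multiple of Lite, then flags the tiers where switching Plan mode off raised consumption. The names are mine, and the numbers are the same ones in the section 05 table.

```python
# Logged tokens per tier, grouped by Plan mode setting (web app routes only).
TOKENS_BY_PLAN = {
    "on": {"lite": 56, "pro": 162, "max": 390},
    "off": {"lite": 26, "pro": 207, "max": 180},
}


def multiples_of_lite(plan: str) -> dict:
    """Each tier's token use as a multiple of Lite under the given Plan setting."""
    lite = TOKENS_BY_PLAN[plan]["lite"]
    return {tier: round(tokens / lite, 1) for tier, tokens in TOKENS_BY_PLAN[plan].items()}


def tiers_where_plan_off_costs_more() -> list:
    """Tiers that consumed more tokens with Plan mode off than with it on."""
    on, off = TOKENS_BY_PLAN["on"], TOKENS_BY_PLAN["off"]
    return [tier for tier in on if off[tier] > on[tier]]


print(multiples_of_lite("on"))
print(multiples_of_lite("off"))
print(tiers_where_plan_off_costs_more())  # ['pro']
```

In my logs, Pro is the only tier that got more expensive with Plan mode off, which is the case the last paragraph warns about.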

Then there is the $1 promo trap.

The first-month promotion is real. It does not require a credit card hold for the dollar itself. It does require a credit card on file for the day-30 renewal at $25. The cancel flow exists in the user dashboard but is not surfaced in the welcome email or the onboarding tour. I confirmed this by walking the cancel flow myself. It took 3 clicks from the dashboard, none of which were flagged in any onboarding step the new user is shown.

If you take the $1 trial, mark your calendar for day 28. That gives you 2 days of buffer to cancel without hitting the auto-renewal. The auto-renewal happens on day 30 sharp, US Pacific time, regardless of when you signed up in your local time zone. I learned this the slow way.
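If you would rather compute the reminder than count days, here is a minimal sketch. It assumes the signup date counts as day 0, so the renewal lands 30 calendar days later. That counting convention is my assumption, not something Runable documents, and the sketch ignores the Pacific-time cutoff, so treat the result as the latest safe date rather than an exact deadline.

```python
from datetime import date, timedelta


def cancel_by(signup: date, renewal_day: int = 30, buffer_days: int = 2) -> date:
    """Date to set the cancellation reminder: renewal day minus the buffer."""
    # Assumption: the signup date is day 0, so renewal falls on signup + renewal_day.
    return signup + timedelta(days=renewal_day - buffer_days)


# Hypothetical signup date, for illustration only.
print(cancel_by(date(2026, 4, 1)))  # 2026-04-29
```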

A real X user complained about the cancel friction in late April 2026. The complaint (“hard to cancel sub on this”) got fewer than 5 likes, which is consistent with a user base small enough that no one else hit the friction yet, or large enough that the complaint did not feel notable to other users. I do not know which. I document the silence.

There is one more thing in the credit accounting that does not reconcile.

After my $1 Starter payment cleared, the dashboard’s top-right credit indicator displayed 25,500 credits. The Token Usage panel for the same account, opened seconds later, showed “This month: 25,000 / 25,000” and “Lifetime: 0 / 0.” The 500-credit difference between the headline number and the itemized breakdown was not explained anywhere in the UI. Not in the welcome flow. Not in the billing page. Not in the dashboard tooltip. The 500 credits exist somewhere the interface will not name.

For a tool that bills itself on credit transparency, that gap is a small thing. For a tool that has now misstated its connector count and its tier-cost ratios, it is the third number in this review that does not match its own documentation.

The credit-trap pattern is not unique to Runable. Future Stack Reviews has documented the same structure inside InVideo AI, where the gap between marketing-priced credits and actual task consumption produced the same kind of accounting that the dashboard could not explain.

The next section explains why the silence around Runable matters more than most people realize.

Affiliate disclosure: the 100% × 4-month structure

I want this section to read clean, so I will lead with the disclosure.

Future Stack Reviews participates in Runable’s affiliate program through Rewardful. The link in this article routes through that program. If you sign up via that link and stay subscribed for at least one month, FSR earns a commission on your subscription for the first four months you remain a paying customer.

Now the structure.

Runable’s affiliate program pays 100% commission for the first four months of any subscription it refers. That means for the first four months a user pays $25, the affiliate receives $25. After month four, the commission drops to a more standard rate.

This is unusual.

The industry standard for SaaS affiliate programs runs in the 20% to 50% range, recurring monthly for the lifetime of the subscription. A 100% rate, even capped at four months, is an aggressive front-loaded structure designed to maximize early acquisition velocity at the expense of long-term affiliate revenue.

The economic logic from Runable’s side is clean. They are subsidizing acquisition through affiliates rather than through paid ads. Each affiliate-generated paying user costs Runable four months of revenue, which they recover from month five onward, assuming the user does not churn. If retention curves are strong past month four, the math works.
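A rough sketch of that math follows, under loud assumptions. The post-month-four commission rate is not published, so the 30% default is a placeholder taken from the 20% to 50% industry range, and the sketch ignores the $1 first-month promotion by treating every month as a full $25 payment.

```python
def runable_net_revenue(
    months_retained: int,
    monthly_price: float = 25.0,
    full_commission_months: int = 4,
    later_commission_rate: float = 0.30,  # hypothetical; the real rate is not published
) -> float:
    """Cumulative revenue Runable keeps from one referred user after commissions."""
    net = 0.0
    for month in range(1, months_retained + 1):
        # 100% of the payment goes to the affiliate during the front-loaded window.
        rate = 1.0 if month <= full_commission_months else later_commission_rate
        net += monthly_price * (1.0 - rate)
    return net


for months in (4, 5, 12):
    print(months, "months retained:", round(runable_net_revenue(months), 2))
```

Under these assumptions a referred user who churns at or before month four contributes nothing to Runable's net revenue, which is why the retention curve past month four carries the whole structure.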

The behavior this incentive structure produces on the affiliate side is also predictable. Affiliates have four months to earn from any user they refer. The economically rational response is to drive volume hard for four months, then move on. This is exactly the pattern that surfaced in the X data Phase 1 research pulled from the past 90 days. Coordinated promotional bursts. Identical phrasing across multiple accounts. Sudden engagement spikes around the March 2026 launch of Runable 2.0.

I am not accusing any specific affiliate of bad faith. I am pointing out that the program structure makes coordinated promotion the rational economic strategy, and the X data shows that strategy executing in real time.

This affects how you should read every Runable review you encounter, including this one.

The way I am attempting to manage that conflict in this article is the following. I am disclosing the affiliate participation up top. I am writing the review with critical findings (the channel @mention failure, the connector count contradiction, the public footprint math) that would discourage some readers from signing up at all. I am placing the affiliate link only in section 10, after the close-this-tab criteria for users who should not pay anything. I am not placing the link in any of the critical sections, the comparison table, or the verdict.

You can decide whether that is enough disclosure. I think it is the cleanest structure I can offer while still participating in the program. I am open to feedback on whether it should change.

The next section is the comparison.

Runable vs Manus AI vs Genspark

Three platforms occupy the visual-deliverable autonomous-agent category in April 2026. Runable. Manus AI. Genspark. The other names you might compare them to (Devin, OpenClaw, Taskade Genesis) sit in adjacent categories and are not the right shape for this comparison.

Here is the matrix.

| Dimension | Runable | Manus AI | Genspark |
|---|---|---|---|
| Status (April 27, 2026) | Operating, Starter promo active | Meta acquisition blocked by China NDRC today | Operating, $530M VC valuation |
| Entry pricing | $25/mo Pro ($1 first month) | Free / Plus / Pro tiers, paid USD not publicly documented | Free / Plus / Pro tiers, paid USD not publicly documented |
| Integration count | “3,000+ apps” (marketing wording) | Slack, Zapier, MCP, Browser Operator (no published total) | 700+ MCP / 150+ tools / 30+ models |
| Code generation | Yes (Plan mode + sandbox) | Yes (sandboxed VM) | Yes (AI Developer / OpenCode-style) |
| Visual deliverables | Sites, slides, videos, reports, podcasts | Slides, sites, docs (no video documented) | Docs, slides, sites, images, video |
| Execution environment | Web + Run Claw cloud sandbox | Cloud VM + persistent file system | Web + Genspark Claw + desktop app + Chrome extension |
| Primary interface | Web app + Discord bot | Web app + mobile/desktop apps + Mail Manus + Slack | Web workspace + Claw desktop + Chrome extension |
| Multi-model orchestration | Yes (Claude / GPT-5 / Gemini routing) | Not publicly documented | Yes |
| Funding / parent | Together Fund (Seed) | Butterfly Effect parent. Meta acquisition agreed Dec 2025, blocked April 27, 2026 | $60M seed (2024) + $100M Series A at $530M valuation (2025) |
| HQ jurisdiction | Delaware C-Corp + Bangalore ops | Singapore (relocated from China July 2025) | Palo Alto, California, US |
| Data residency | GCP Oregon, US (no EU option) | Not publicly documented | Not publicly documented |

Three things from that table deserve discussion.

First, the Manus situation as of today.

China’s National Development and Reform Commission ordered Meta to unwind its $2 billion acquisition of Manus on April 27, 2026, the day this review was published. The NDRC statement required all parties to “withdraw the acquisition transaction.” Manus’s two co-founders, CEO Xiao Hong and chief scientist Ji Yichao, had already been barred from leaving China since March 2026, according to Financial Times reporting. Bloomberg, CNBC, the Washington Post, AP, and AFP all confirmed the block within hours. Meta said the transaction “complied fully with applicable law” and expects “an appropriate resolution.”

For a B2B buyer evaluating autonomous agent platforms today, this is not background noise. It is a live regulatory event. If you were planning to integrate Manus into your enterprise stack, the platform’s parent company is in the middle of an unprecedented international standoff between a Chinese state planner and the third-largest American technology company. Make of that what you will, but factor it into any due diligence comparing platforms.

For Manus AI’s product capabilities and pricing structure before the acquisition standoff, see Future Stack Reviews’ independent review of Manus AI, which documents the same “hallucinations” pattern in the company’s own cost estimates.

The second observation is about funding asymmetry.

Genspark’s market position rests on the $530 million valuation attached to its 2025 Series A. That number was set by professional investors with access to private financials, customer cohorts, and retention data. Runable claims $2 million in ARR via a YouTube video its CEO posted on April 1, 2026. Section 11 walks through why those two figures are not the same kind of number, even though both are denominated in US dollars.

Third, the data residency dimension.

For a US enterprise buyer, all three platforms route through US or US-friendly cloud infrastructure. Runable and Genspark both run on standard hyperscalers. Manus is more complex post-block. For an EU buyer subject to GDPR or Schrems II, none of the three publishes an EU data residency option or a DPA at the time of writing. The least-bad option for an EU buyer who needs a compliant agent is probably to wait six months for the category to mature, or to use a Mistral-based alternative that does publish DPAs.

The next section is the recommendation. Or the walk-away. Depending on who you are.

Who should pay for Runable, and who should walk away

This section is structured as one conditional recommendation followed by a hard walk-away. The two halves are not symmetric. The walk-away applies to more readers than the recommendation does, by design.

Pay for Runable if all three of these are true

You make visual deliverables (websites, slides, videos, reports, podcasts) from prompts as a meaningful part of your work.

You can absorb the messaging-mediated workflow constraint, which means you are willing to use Discord direct messages or the web app rather than expecting the agent to participate in a shared team channel.

The $25 Pro subscription is a research budget, not a critical line item. You can write off the cost if month two does not convince you. The $1 first-month promotion exists precisely so you can test before committing.

If those three conditions all apply, the affiliate link is here: runable.com/?via=fsr. FSR receives commission on the first four months of any subscription that comes through it. Section 08 documents the structure.

Walk away if any of these apply

You process EU customer data subject to GDPR or Schrems II. Runable’s privacy policy confirms US-only infrastructure (GCP Oregon) with no published DPA, no SCCs, and no EU representative. This is not a “needs improvement” problem. It is a “do not use” problem. Mistral-based alternatives publish DPAs for enterprise tiers. Use those.

You need predictable agent behavior inside a shared Slack or Discord channel. Channel @mentions did not work in my five-pattern test. Direct messages did. If your team’s workflow requires the agent to respond when summoned in a shared workspace, the architecture is wrong for you in April 2026. Re-evaluate in October.

You are making infrastructure decisions for a team or company based on Runable’s $2 million ARR claim. The claim is self-reported, posted to YouTube on April 1, 2026, and has not been independently verified by SEC filings, Crunchbase, mainstream tech press, or any of the standard enterprise diligence sources. Section 11 walks through the public footprint math. Treat the figure as marketing until independent corroboration appears.

You are paying for SOC 2 Type II, ISO 27001, or HIPAA compliance signals. Runable does not currently publish those certifications. The Discord-first architecture makes some of them structurally hard to obtain in the near term.

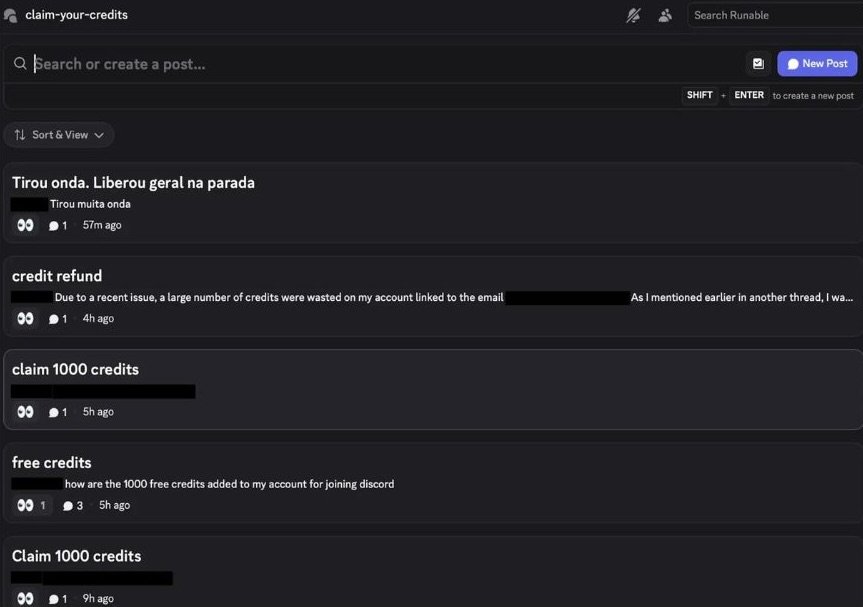

You care whether your email address ends up publicly visible to strangers. Runable’s onboarding rewards new users with 1,000 free credits in exchange for posting their email address in a public Discord channel called #claim-your-credits. As of April 27, 2026, that channel held multiple recent posts from users who had pasted their personal Gmail and Outlook addresses into a venue visible to all 6,800-plus Discord members. One post also exposed billing information about a different user’s account. The mechanism is the documented, intended sign-up flow, not a leak. If your email address is one you do not want public, this disqualifies Runable on its face, regardless of whether the rest of the product fits your work.

If any of those five conditions apply, close this tab. There is no version of the trial that will change the underlying issue, and the $1 cost of starting it is not the relevant cost. The relevant cost is the time you will spend untangling whichever issue applies to you.

The next section explains why the math behind Runable’s headline revenue claim does not add up.

The math that doesn’t add up

This is the section to read if you are deciding whether to trust anything Runable tells you about itself.

The arithmetic is short. Runable’s CEO posted a video to YouTube and Instagram on April 1, 2026, claiming $2 million in annual recurring revenue, three weeks after launch. Pricing starts at $25 per month on the Pro tier. To produce $2 million in ARR at $25 per month, the platform needs approximately 6,667 customers paying continuously.
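The division behind that figure can be checked in a few lines. This is a minimal sketch that assumes every customer pays the $25 Pro list price for twelve months, with no discounts, annual plans, or higher tiers. The function name `customers_needed` is illustrative and does not come from Runable.

```python
def customers_needed(arr_usd: int, monthly_price_usd: int) -> int:
    """Smallest whole number of subscribers whose annualized payments reach arr_usd.

    Assumes a flat monthly price paid for all twelve months (an assumption
    made for this sketch, not a documented Runable billing rule).
    """
    annual_per_customer = monthly_price_usd * 12
    # Integer ceiling division avoids floating-point rounding.
    return -(-arr_usd // annual_per_customer)


if __name__ == "__main__":
    print(customers_needed(2_000_000, 25))  # 6667
```

Customers on higher-priced tiers would lower the required count, so 6,667 is a reference point for the entry price, not a floor.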

Here is what 6,667 paying customers would normally leave behind in public traces.

| Public footprint indicator | Actual count | What 6,667 paying users would normally produce |
|---|---|---|
| Official @runable_hq X followers | 6,800 | Multiples of paying user count, not parity |
| US App Store reviews | 3 | Hundreds, accumulating from launch onward |
| Hacker News threads (past 6 months) | 0 | At least 1, often 3+ for $2M ARR claims |
| Indie Hackers threads | 0 | Multiple, given the indie-builder appeal |
| Product Hunt threads | 0 | At least one launch-week thread |
| Reddit organic mentions (past 6 months) | 2 | Dozens, scattered across r/SaaS, r/AI_Agents, r/SideProject |
| Tier-1 indie observer coverage (Levels, Isenberg, Riley Brown) | 0 | At least one mention from at least one |
| SEC / Crunchbase / Bloomberg / TechCrunch verification of the $2M ARR figure | 0 | At least one independent confirmation |
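To put a few of those rows on a common scale, the observed counts can be divided by the implied customer count. This is a rough sketch that uses only figures from the table and the 6,667 estimate. The dictionary keys and the `per_customer` helper are illustrative labels, not an established metric.

```python
IMPLIED_CUSTOMERS = 6_667  # $2M ARR at $25/month, as estimated in this section

# Observed public counts, copied from the table above.
observed = {
    "x_followers": 6_800,
    "app_store_reviews": 3,
    "reddit_mentions": 2,
}


def per_customer(count: int, customers: int = IMPLIED_CUSTOMERS) -> float:
    """Observed count per implied paying customer."""
    return count / customers


if __name__ == "__main__":
    for label, count in observed.items():
        print(f"{label}: {per_customer(count):.4f} per implied customer")
```

The follower ratio comes out at roughly 1.02, which is the parity the first row describes. The review and Reddit ratios are well under one per thousand implied customers.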

There are three ways to reconcile that table with the ARR claim.

First, the claim is roughly accurate, and the public footprint just has not caught up yet because the launch is recent and the user base skews toward paid affiliate channels rather than open community. This is possible. It is also unusual, because $2M ARR is the kind of number that gets noticed by indie observers and tech press whether the founder wants it noticed or not.

Second, the claim is technically correct under a generous accounting (annualized run rate from a recent peak day, rather than steady recurring revenue) and the founders chose the more impressive framing for promotional reasons. This is also possible. The April 1 posting date is suggestive but not conclusive.
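The gap between those two framings is easy to see with invented numbers. The sketch below uses entirely hypothetical daily revenue figures (none of them come from Runable) and contrasts annualizing a single peak day with annualizing the average of the same week.

```python
from statistics import mean

# Hypothetical daily revenue in USD for one week. NOT Runable data.
daily_revenue = [900, 1_100, 1_000, 5_500, 1_200, 950, 1_050]


def annualized_from_peak_day(days: list[int]) -> float:
    """Run rate if the single best day is treated as typical."""
    return max(days) * 365


def annualized_from_average(days: list[int]) -> float:
    """Run rate if the mean day in the window is treated as typical."""
    return mean(days) * 365


if __name__ == "__main__":
    print(f"peak-day framing: ${annualized_from_peak_day(daily_revenue):,.0f}")
    print(f"average framing:  ${annualized_from_average(daily_revenue):,.0f}")
```

With these made-up inputs the peak-day framing lands just above $2 million while the weekly average annualizes to roughly $610,000. Both numbers are arithmetically defensible, and only one of them resembles steady recurring revenue.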

Third, the claim is not accurate, and the public footprint is reflecting actual user volume rather than the announced figure. This is also possible. Without independent verification, all three explanations remain on the table.

I am not telling you which one is true. I do not know. The data does not tell me. The data tells me that something is unusual about the numbers Runable’s founders are publicly reporting, and the unusualness is in the direction that should make a careful B2B buyer ask one more question before signing a procurement contract.

There is one more piece of context worth flagging here, briefly.

In July 2025, Runable’s CEO posted comments characterizing the Indian engineering applicant pool as having a “talent problem,” which drew NDTV coverage. In October 2025, he posted hiring expectations citing 60-to-80-hour work weeks, which drew further NDTV coverage and significant online backlash. Whether those public statements correlate with the reliability gaps documented in sections 05 and 06 is a question this review cannot answer. The public statements are documented. The reliability gaps are documented. You can connect them or not.

The data is the data. Make of it what you will.

The next section answers the questions readers ask after reading sections like this one.

FAQ

Is Runable safe to use for EU customer data?

No, as of April 27, 2026. Runable’s privacy policy confirms infrastructure on Google Cloud Platform in Oregon, United States. The company does not publish a Data Processing Agreement, does not disclose Standard Contractual Clauses, and does not name a designated EU representative under GDPR Article 27. It therefore does not meet Schrems II requirements. EU buyers should use a Mistral-based or EU-sovereign alternative.

Does Runable actually have 3,000+ integrations?

The “3,000+ apps” figure on runable.com is marketing wording, not a verified count of native integrations. When challenged, Runable’s own Discord bot walked the figure back to roughly 50 highlighted apps with Zapier-style mediation for the rest. Competing platforms publish more specific integration counts (Genspark 700+ MCP, Taskade Genesis 123+ bidirectional, OpenClaw 24 channel surfaces).

Has the $2 million ARR claim been independently verified?

No. The claim originated in YouTube and Instagram videos posted by Runable’s CEO on April 1, 2026. As of writing, no SEC filing, Crunchbase update, or coverage by mainstream tech press (TechCrunch, Bloomberg, The Information) has independently corroborated the figure. The claim should be treated as self-reported until independent verification appears.

Does Run Claw work in Discord channels?

Not reliably as of April 2026. In a five-pattern test conducted by Future Stack Reviews on April 27, 2026, Run Claw failed to respond to channel @mentions in five consecutive attempts using different invocation formats. Direct messages worked. The architecture appears optimized for DM-based interaction rather than shared channel participation.

Should I take the $1 first-month trial?

Only if you make solo visual deliverables, do not need shared-workspace agent participation, and treat $25 as a research budget. The trial does not require a credit card hold for the dollar but does require one for the day-30 auto-renewal at $25. Cancel by day 28 to avoid the renewal. The cancel flow is in the dashboard but not surfaced in onboarding.

Does Runable expose user email addresses publicly?

Yes, by design. The “claim-your-credits” Discord channel asks new users to post their email address in a public channel to receive 1,000 free credits. As of April 27, 2026, that channel contained multiple recent posts from users who had complied with the request, leaving their personal Gmail and Outlook addresses visible to all 6,800-plus Discord members. The mechanism is presented as the intended onboarding flow, not a leak.

FSR Verdict

The headline of this review promised the brutal truth. So here is the brutal truth, in the shortest form I can manage.

Runable is a real product with real capabilities. It can take a sentence and produce a clickable artifact in under fifteen minutes. That is not nothing. For a solo creator with low team-coordination requirements, the $1 first month is an honest test of whether the tool fits their work.

Runable is also a product whose founders are publicly claiming numbers that the public footprint does not corroborate. The integration count is qualified beyond what the marketing copy admits. The credit balance shown on the dashboard does not match the breakdown in the company’s own Token Usage panel. The Run Claw cloud agent does not respond to channel @mentions in shared Discord servers, a failure surface no other public review has documented. The pricing tier ratios break under the Plan-mode toggle in ways the documentation does not acknowledge. The privacy infrastructure fails Schrems II for EU buyers. The onboarding flow rewards new users with credits in exchange for posting their email address publicly. The CEO has posted hiring expectations and characterizations of the Indian engineering market that drew mainstream news coverage and backlash.

None of those things, individually, would disqualify Runable as a category-curiosity purchase at $25. Together, they should disqualify it as a foundation for any team or company decision that costs more than the subscription itself.

If you are a solo creator with $25 of curiosity in your budget, the affiliate link is in section 10 and I have explained the structure in section 08. Take the trial. Test the tool against the work you actually do. Cancel before day 30 if it does not earn the renewal.

If you are anyone else, the answer is simpler.

Wait six months. Re-test in October 2026. Watch whether independent verification of the ARR claim appears, whether the channel @mention surface gets fixed, whether SOC 2 and EU residency get published. If those signals improve, the recommendation will improve with them.

For contrasting profiles, Future Stack Reviews has reviewed MiniMax M2.7 and Cursor, both of which take different bets on autonomy, pricing, and licensing.

Until then, the data is the data. The math does not add up. The bot cannot say hello in a channel.

That is the brutal truth, and it is what we owed the headline.

Disclosure: FSR participates in Runable’s affiliate program through Rewardful. This review was written under FSR’s independent editorial standard. The affiliate link in section 10 does not change any finding in any other section.