Last updated: April 25, 2026

Most “Midjourney alternatives” articles compare seven tools on image quality, slap a verdict on the winner, and send you on your way. That approach worked in 2023 when the only real question was whether a contender could match Midjourney’s aesthetic ceiling. In April 2026, the axes that actually matter for professional work have shifted, and image quality has slid from first consideration to third or fourth.

The professionals producing commercial work with AI images stopped choosing between tools some time ago and started combining them. What determines whether a tool belongs in the stack is usually API access, legal exposure around training data, or text rendering fidelity, none of which shows up on most comparison tables.

This briefing covers what the other reviews leave out.

BRIEFING SUMMARY

The question “which Midjourney alternative should I use” is the wrong question in 2026. No single tool wins across the evaluation axes that matter for professional work. The tools that earn a place in a serious stack, by use case:

- Style exploration and concept art: Midjourney V8.1 (still unmatched for cinematic aesthetics; still no public API)

- Text-in-image work (posters, thumbnails, mockups): Ideogram 3.0

- API integration and automation: Flux (Black Forest Labs) or Stable Diffusion self-hosted

- Commercial safety and EU compliance: Adobe Firefly

- Character and asset consistency for production: Leonardo AI

- Conversational image generation: OpenAI GPT Image (DALL-E 3 retires May 12, 2026)

- Photorealism with web grounding: Google Nano Banana

The Single-Tool Era Ended Quietly

Between early 2023 and April 2026, the AI image generation market fragmented. Midjourney was the default answer for two years because nothing else matched its aesthetic ceiling. That gap has closed on some dimensions and widened on others, and the professionals shipping commercial work figured this out before the reviewers did. For a broader look at what we consider the best AI image generator for each workflow type, our companion analysis goes into depth; this article zooms in on the Midjourney-specific question.

The specialization pattern is uneven. Flux handles photorealism and API workflows that Midjourney will not touch, while Ideogram’s text rendering advantage over everything else has grown rather than shrunk. Firefly, on the legal-safety axis, pulled away from the pack once Adobe formalized indemnification for paid customers. Leonardo built out character consistency features that marketing pipelines and game studios actually use in production. What used to be “Midjourney plus some hobbyist tools” is now a set of serious products that win their specific categories.

A viral thread on X in March 2026 put the pattern bluntly: “MJ for art direction, Flux for refinement, Ideogram for type, Firefly for client work.” That configuration is closer to the norm than the exception among working creators.

INTEL

The Gemini-powered image model marketed by Google as Nano Banana was absent from most comparison articles published before March 2026. It sits alongside Imagen in Google DeepMind’s official model lineup and has become a recurring reference in professional workflow threads on X. Any comparison guide that omits it is already out of date.

Midjourney V8.1: What You Are Actually Getting in April 2026

Midjourney released V8.1 in alpha on April 14, 2026. The changes matter for anyone evaluating alternatives, because the pressure points that drove users elsewhere in 2025 have shifted.

V8.1 restores much of the V7-era aesthetic stability that V8.0 users complained had flattened. Style references (srefs) and moodboards generate more consistent results across prompts. HD mode now runs three times faster and costs less than V8.0, with HD set as the default. Standard resolution outputs are 50% faster and 25% cheaper. Image prompts and image weights, which power users missed in the V8.0 rollout, are back. A Prompt Shortener and an upgraded Describe function round out the release.

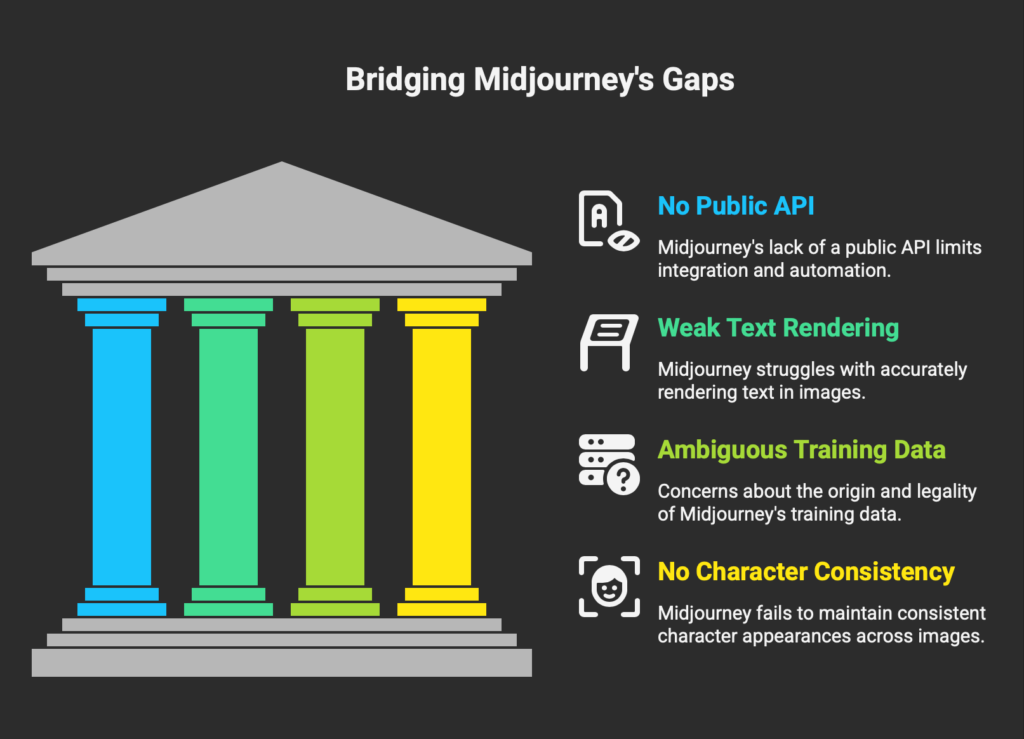

What did not change: no public API, no free tier, no stealth mode below the $60 tier, and no resolution to the copyright infringement lawsuit filed in June 2025 by Disney, Universal, Marvel, Lucasfilm, Fox, and DreamWorks in the US District Court for the Central District of California. That lawsuit remains in active litigation as of April 2026.

If you landed on this article because V8.1 feels like a downgrade or because the Disney lawsuit made you nervous, the alternatives below are worth reading carefully. If you landed here looking to replace Midjourney because of its pricing model, you will likely leave the article using Midjourney alongside one or two other tools rather than instead of them.

35.6%

Reduction in divergent ideation time reported for junior designers using AI image tools with prompt optimization, compared to traditional workflows. Senior designers saw a 21.6% reduction in the same study.

Source: Chen et al. (2024), Journal of Engineering Design

The Evaluation Framework Most Reviews Skip

Image quality is the easiest axis to evaluate and the least useful for professional stack decisions. A tool that produces stunning images but cannot be called from a production pipeline fails a marketing team. A tool with perfect photorealism but ambiguous training data fails an enterprise legal review. The three criteria that actually separate tools for serious work are less glamorous than the comparison images that dominate most reviews.

API and automation readiness is the starting question. Can this tool be called from code? Is the API stable? Are there rate limits that break at scale? Midjourney has no public API, which eliminates it for product integration regardless of how good the images are. Flux was designed API-first. Firefly exposes enterprise APIs through Firefly Services. This single question closes the door on Midjourney for roughly half the use cases that bring people to comparison articles in the first place.

Text rendering and layout control is the next axis, which covers thumbnails, posters, advertising creative, product mockups, and anything that needs legible type inside the image. Ideogram is measurably better on this axis than every other tool in the comparison, and the gap is not close.

Legal and commercial safety rounds out the list. The questions here are whose training data was used, who holds the copyright to the output, and what happens when an enterprise customer asks for indemnification. EU-based teams, agencies serving Fortune 500 clients, and anyone producing work that will be scrutinized by legal review separate sharply from everyone else on this axis.

The comparison table below evaluates each tool on all three criteria alongside the more conventional dimensions. Pricing reflects the lowest paid tier in USD as of April 2026.

| Tool | Lowest paid tier | Free tier | Public API | Text rendering | Commercial use |
|---|---|---|---|---|---|
| Midjourney | $10/mo Basic | No | No | Weak | Yes (Pro+ required if company revenue exceeds $1M/yr) |
| OpenAI GPT Image | $20/mo ChatGPT Plus | Limited via ChatGPT Free | Yes (gpt-image-1) | Strong | Yes on paid tiers |
| Adobe Firefly | $9.99/mo Standard | Yes (25 credits/mo) | Yes (Firefly Services) | Moderate | Yes with indemnification on paid tiers |
| Leonardo AI | $12/mo Apprentice | Yes (150 tokens/day) | Yes | Moderate | Yes on paid tiers (free tier: Leonardo retains rights) |
| Ideogram | ~$8/mo Plus | Yes (100 priority prompts/day) | Yes | Best in class | Yes on paid tiers |
| Flux (BFL) | From $0.014/image PAYG | Playground trial | Yes | Strong | Yes via API; dev open weights non-commercial only |
| Stable Diffusion | $9/mo Stable Assistant | Self-host, free | Yes | Moderate | Conditional (Community License up to $1M revenue) |
| Google Nano Banana | Vertex AI PAYG | ImageFX experimental | Yes (Vertex AI) | Strong | Yes via API |

The table tells you which tool dies first for your use case. It does not tell you which tool to use. For that, the per-tool analysis below matters more than the spec sheet.
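One way to read the table as an elimination filter is to encode it and drop every tool that fails a hard requirement. The following is a minimal sketch, not a recommendation engine: the `Tool` class, the `survivors` function, and the ordering of the text-rendering labels are illustrative assumptions, and the free-tier column is simplified to yes/no (trials and experimental access count as yes).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    free_tier: bool
    public_api: bool
    text_rendering: str  # "Weak", "Moderate", "Strong", or "Best in class"


# Values transcribed from the comparison table above (April 2026).
TOOLS = [
    Tool("Midjourney", False, False, "Weak"),
    Tool("OpenAI GPT Image", True, True, "Strong"),
    Tool("Adobe Firefly", True, True, "Moderate"),
    Tool("Leonardo AI", True, True, "Moderate"),
    Tool("Ideogram", True, True, "Best in class"),
    Tool("Flux (BFL)", True, True, "Strong"),
    Tool("Stable Diffusion", True, True, "Moderate"),
    Tool("Google Nano Banana", True, True, "Strong"),
]

# Assumed ordering of the table's text-rendering labels, weakest to strongest.
TEXT_RANK = {"Weak": 0, "Moderate": 1, "Strong": 2, "Best in class": 3}


def survivors(tools, need_api=False, need_free_tier=False, min_text="Weak"):
    """Return the names of tools that pass every hard requirement."""
    floor = TEXT_RANK[min_text]
    return [
        t.name
        for t in tools
        if (t.public_api or not need_api)
        and (t.free_tier or not need_free_tier)
        and TEXT_RANK[t.text_rendering] >= floor
    ]


# A product team that needs an API and at least strong text rendering:
print(survivors(TOOLS, need_api=True, min_text="Strong"))
```

The filter only removes candidates. Choosing among the survivors still depends on the per-tool trade-offs, which a yes/no column cannot capture.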

The Eight Tools: What Each One Actually Does

1. Midjourney V8.1

Midjourney remains the tool that produces the most visually striking images with the least prompt engineering effort. A messy prompt in Midjourney still returns a coherent, aesthetically rich image at a rate other tools do not match. For concept artists, filmmakers exploring visual direction, and anyone whose job is “generate a mood,” it has not been dethroned.

The architecture of the company explains much of this. Midjourney describes itself as a community-funded research lab of 60 people. No VC pressure, no API customers to serve, no enterprise compliance team making product decisions. The product is tuned for image quality above all else, and the team has the freedom to refuse features that would compromise that focus.

The same architecture creates the problems. No public API means Midjourney cannot be integrated into production pipelines. No free tier means evaluation requires payment. Stealth mode (private image generation) is locked behind the $60/month Pro tier, which means images created on Basic and Standard plans are visible to other users by default. For any work under NDA, this alone rules Midjourney out.

This makes the fit clear enough. Concept artists, illustrators building reference libraries, filmmakers in preproduction, and solo creators whose work is primarily aesthetic exploration get everything they need here. The tool works against everyone else: developers building products, marketing teams needing text in images, anyone working under enterprise NDA, and EU-based teams with strict data residency requirements all run into dealbreakers within the first evaluation day.

2. OpenAI GPT Image (the artist formerly known as DALL-E 3)

OpenAI announced the deprecation of the DALL-E 3 API on November 14, 2025. The final shutdown date is May 12, 2026. The replacement is gpt-image-1 (or the lower-cost gpt-image-1-mini), which is a natively multimodal model rather than a standalone image-only system.

This matters because many articles still reviewing “DALL-E 3” in April 2026 are reviewing a product with weeks left to live. The shift to GPT Image is not a cosmetic rename. The new model uses the language model’s broader world knowledge to generate images with stronger instruction following, better prompt adherence for complex scenes, and cleaner text rendering than DALL-E 3 offered.

The strengths of the OpenAI approach are conversational iteration and natural language handling. Someone who is not comfortable writing prompts in the Midjourney or Flux style can often get usable results by describing what they want in plain English. The weakness is that the image quality ceiling, especially for artistic or cinematic work, still sits below Midjourney and Flux.

OpenAI does not operate an affiliate program. Access requires a ChatGPT Plus subscription ($20/month) or API usage through the OpenAI developer platform.

Developers already embedded in the OpenAI stack get the most out of this model, along with non-specialists who prefer describing images in plain English rather than learning a tool-specific prompt syntax. For professional illustrators looking for aesthetic distinctiveness, it is the wrong choice. Teams planning to build long-term around a specific image model should also pause, because GPT Image itself will eventually be superseded in the way DALL-E 3 is being superseded now.

3. Adobe Firefly

Firefly is the tool enterprise legal departments approve without reading the user manual. That is not a dig. It is the specific commercial role Firefly was built to play, and it plays it well.

Adobe trained the Firefly family on licensed stock content and public domain material, which removes the training-data ambiguity that clouds Midjourney and Stability AI. For customers on paid plans, Adobe offers IP indemnification, shifting the legal risk from the creator to Adobe. For agencies producing work for Fortune 500 clients, or for EU-based teams navigating the AI Act’s training data transparency requirements, this is the feature that matters more than image quality.

Firefly also evolved beyond “Adobe’s in-house image model” over 2025 and into 2026. The current Firefly app integrates partner models from Google, OpenAI, Black Forest Labs, Runway, Ideogram, and others directly inside the Adobe interface. For context on how the video models in that lineup compare on pricing, our Runway vs Pika analysis covers the ugly economics most Firefly users never see. In practice, a Creative Cloud subscriber can switch between Firefly’s indemnified model and third-party models from a single dropdown. This is closer to a creative AI operating system than a single image generator.

The weakness: Firefly’s native outputs lean toward the safe, polished, professionally-approved end of the aesthetic spectrum. Images are commercially viable more often than they are memorable. For concept art or ambitious visual storytelling, Firefly is not the finisher.

Adobe operates a substantial affiliate program through Partnerize, paying up to 85% of first-month subscription value on Creative Cloud plans.

Firefly earns its place in agency stacks, enterprise marketing teams, and EU-based organizations where compliance and procurement ease matter more than aesthetic distinctiveness. Anyone working inside an existing Adobe workflow gets additional integration benefits for free. Individual artists prioritizing memorable visuals over safety, or budget-constrained creators who already have image generation covered through another subscription, will find Firefly redundant at best. For a deeper evaluation of Firefly’s trade-offs against its pricing, our full Adobe Firefly review breaks down where the safety premium does and does not pay off.

4. Leonardo AI

Leonardo occupies an unusual position in the market: it tries to be the full-workflow tool for production creators rather than the best-in-class engine for any single task. The feature list reflects this ambition (image generation, video generation, canvas editing, upscaling, custom model training, API access, team features), and the result is a product that serves working creatives and game studios better than it serves first-time users.

The strongest use case is character and asset consistency. Leonardo’s custom model training lets you generate the same character, environment, or product across hundreds of images without the drift that plagues ad-hoc prompting in other tools. Game studios, illustrated-book creators, and marketing teams producing campaign sequences all benefit from this specifically.

The tradeoff is complexity. Leonardo has more dials and menus than any other tool on this list. A first-time user can spend an hour figuring out the token system, the model selection, and the Canvas before producing a usable image. For someone building a production pipeline, that learning curve is the investment that pays off. For someone looking to quickly generate a single image, it is friction.

A note on commercial rights that Leonardo itself makes clear in the fine print: free-tier outputs are owned by Leonardo, not the user. Paid tiers transfer full commercial rights. This is a reasonable trade-off for a free tier, but anyone using the free tier for client work should be aware that they do not own those outputs.

Leonardo operates an affiliate program through Impact.com, paying 60% of first-month subscription value with a 30-day cookie window.

Game studios, illustrators producing series work, and marketing teams running campaign sequences all get real value from Leonardo because character and asset consistency is its core strength. Anyone who needs fast results from a single prompt, or solo creators who do not need the full workflow tooling, will likely find the learning curve is not worth the effort.

5. Ideogram

Ideogram does one thing better than every other tool on this list: it puts legible, stylistically coherent text inside images. Posters, YouTube thumbnails, advertising banners, product packaging mockups, typographic layouts. If your image needs words that look like a designer made them, Ideogram is where the work gets done.

This is not a subjective preference. Every major professional comparison of text rendering across AI image models ranks Ideogram at or near the top. Midjourney, Firefly, and even Flux trail on this specific axis. For anyone producing marketing or thumbnail work, this capability alone justifies the subscription.

Beyond text, Ideogram’s general image quality is competitive but not category-leading. It is a strong generalist with one exceptional specialty. The other notable strength is its generous free tier: 100 priority prompts per day with full feature access, which is the most permissive free tier among serious tools in this category.

Ideogram operates an affiliate program through its Creators Club, which is invitation-only and oriented toward creators with engaged audiences on YouTube, Instagram, TikTok, and X.

Content marketers, YouTube creators, designers producing posters and advertising work, and anyone whose output has to contain readable text find their tool here. Pure concept artists have less reason to subscribe, and video creators should note that Ideogram is image-only as of April 2026. For cinematic or painterly aesthetics, other tools on this list pull ahead.

6. Flux (Black Forest Labs)

Flux is the tool that answers the question: “What does an API-first, developer-friendly, professional-grade image generator look like?” Built by the team that previously created Stable Diffusion at Stability AI, Flux was designed from the start as a production model rather than a consumer app.

The model family has grown complex enough to require a map. FLUX.2 Pro is the flagship. FLUX.2 klein is the fastest and cheapest (from $0.014/image on PAYG). FLUX.2 dev is the open-weights version, licensed for non-commercial use only unless you obtain an enterprise license. FLUX Kontext handles multi-reference image editing. FLUX Fill handles inpainting. Each serves a specific workflow, and the naming convention gets criticized regularly for being confusing.

The quality matches the complexity. FLUX.2 produces photorealism that edges out Midjourney for photographic work, handles text rendering competently (not at Ideogram’s level, but competitive), supports multi-reference image prompting for production consistency, and exposes all of this through a clean API accessible either directly or through partners (Replicate, fal.ai, Cloudflare Workers AI).

The weakness is the consumer experience. There is no Flux-branded consumer web app with the polish of Midjourney’s interface. Most users access Flux through one of the partner platforms, which vary in quality. This is a tool for developers, for production teams with someone who writes code, and for anyone willing to live with API-first ergonomics in exchange for control.

Black Forest Labs does not operate a consumer affiliate program. Access is through API or through partner platforms.

For developers integrating image generation into products, production teams building pipelines, and anyone prioritizing photorealism with API access, Flux delivers what the other tools in this list cannot. Non-technical users looking for a polished consumer experience will have a harder time here, and anyone unwilling to read model documentation before making a choice will likely abandon Flux within the first session.

7. Stable Diffusion and Stability AI

The distinction between “Stable Diffusion” (the open model family) and “Stability AI” (the company) matters in April 2026 more than it did in earlier years. The company has pivoted toward enterprise customers. The stability.ai website as of this writing emphasizes “Brand Studio” and B2B creative production, with the public pricing page returning a 404 and the primary CTA directing visitors to “Contact us” for enterprise deployment.

This pivot followed a turbulent period for the company in 2024. As of April 2026, Stability AI is led by CEO Prem Akkaraju (formerly of Weta Digital), with Sean Parker as Executive Chairman. A recent funding round brought in $80M with debt forgiveness over $100M, stabilizing the company’s finances. The Stable Diffusion models remain actively developed, with the most recent flagship being Stable Diffusion 3.5 (October 2024) and ongoing model releases through 2025 and 2026.

For users, the practical consequence is that “using Stable Diffusion” in 2026 means one of three things. You are self-hosting the open model (free, maximum customization, maximum technical overhead). You are using a third-party service that wraps the model (DreamStudio alternatives, Replicate, various SaaS wrappers). Or you are an enterprise customer of Stability AI with a custom deployment.

The Stable Diffusion ecosystem remains the gold standard for customization. LoRAs, ControlNet, custom training, workflow tools like ComfyUI: nothing else offers the depth of community tooling. If your use case requires modifying the generation process itself, Stable Diffusion is the only serious answer.

Stability AI does not operate a consumer affiliate program.

Technically comfortable users who want maximum control find their platform here. Teams building custom workflows or enterprise customers needing on-premise deployment get options that are literally unavailable elsewhere. Anyone looking for a polished consumer product should skip this, and teams without the technical bandwidth to self-host will struggle to extract full value from the ecosystem.

8. Google Nano Banana

Nano Banana is the image generation model in Google’s Gemini family, marketed under that name in Google DeepMind’s official model lineup. The current version as of April 2026 builds on Gemini 3’s multimodal capabilities, with the distinguishing feature being real-time web grounding: the model pulls current information from the web during generation rather than relying solely on training data.

In practice, this means Nano Banana produces more accurate outputs for prompts that reference recent events, current products, specific locations, or anything that benefits from up-to-date context. For an Amazon product page mockup, Nano Banana can reference the current product’s actual appearance rather than generating a best-guess version from 18-month-old training data. None of the other tools in this comparison have live web access during generation, which makes this a genuine capability gap rather than a marginal feature difference.

The general image quality is strong, especially for photorealism. Multi-language prompt handling is better than Midjourney or DALL-E-based models, which matters for non-English creators. Integration with the broader Gemini ecosystem (video via Veo, audio via Lyria) makes Nano Banana useful for multi-modal production work.

The weakness is consumer access. Nano Banana is primarily available through the Gemini app, Google AI Studio, and Vertex AI for developers. There is no dedicated Nano Banana consumer product with a Midjourney-style community or gallery. For creators building identity around a specific platform, this matters.

Google does not operate a consumer affiliate program for Nano Banana or Gemini.

Developers who need up-to-date visual context in their outputs, multilingual creators working in non-English markets, and teams already building inside the Gemini ecosystem will find this tool fits where others do not. Artists looking for an established creative community and mature workflow tooling will find the experience thinner than Midjourney or Leonardo.

By Use Case: The Stack That Fits Your Work

The comparison table tells you what each tool can do. The harder question is which combination serves a specific kind of work. These are the stacks that have converged as patterns across professional threads, working creator reports, and the use-case breakdowns our research surfaced.

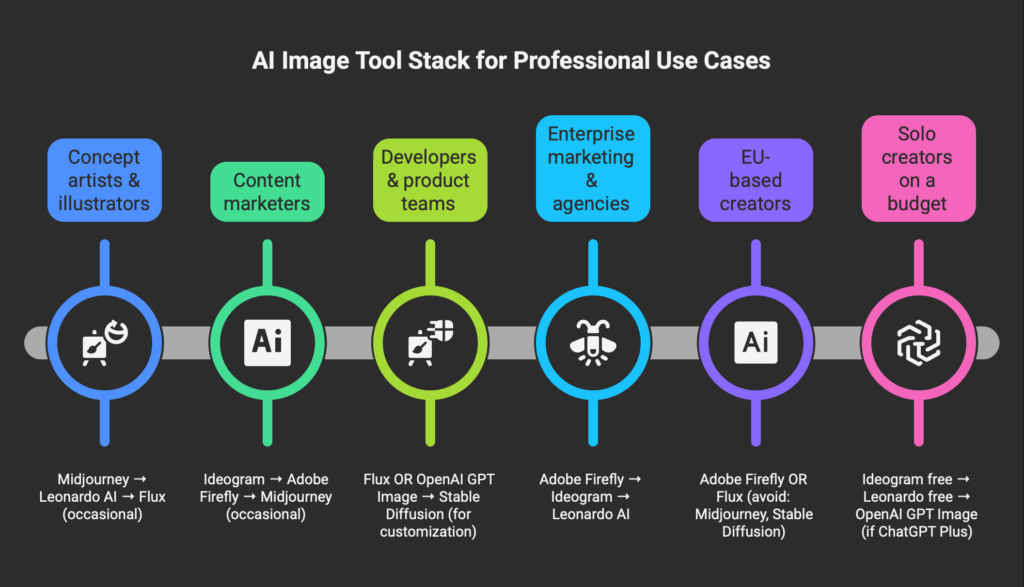

Concept artists and illustrators (individual creators)

The working setup for this group starts with Midjourney V8.1 as the exploratory engine, where style references and moodboards do their best work. Once a direction is chosen, Leonardo AI handles the production run because its custom-model training maintains character consistency across dozens or hundreds of images. Flux gets pulled in occasionally for photorealistic reference shots when Midjourney’s painterly bias gets in the way. The stack optimizes for aesthetic ceiling and iteration speed.

Content marketers and creators

Ideogram becomes the daily driver here because anything with text inside the image (thumbnails, posters, social graphics) lands immediately. Adobe Firefly slots in for commercial-safe client work where indemnification matters. Midjourney makes occasional appearances for hero imagery that does not need text and benefits from its aesthetic distinctiveness. The text-rendering advantage Ideogram holds over other tools is large enough to justify it as the default rather than a specialty pick.

Developers and product teams

The choice collapses to Flux or OpenAI GPT Image, depending on which API your stack already talks to. Stable Diffusion self-hosted enters the picture when customization requirements are high enough to justify the infrastructure overhead. Midjourney is functionally unavailable for this group regardless of its image quality, because there is no public API. Anyone building image generation into a product rather than using it as a standalone tool finds this out within the first hour of evaluation.
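Because the choice of API may change later, it can help to keep vendor calls behind a small interface from day one. The sketch below shows one way to do that in Python. Everything in it is hypothetical: `ImageBackend`, `StubBackend`, and `ImageService` are illustrative names rather than any vendor's SDK, and the stub returns placeholder bytes instead of calling a real API.

```python
from typing import Dict, Optional, Protocol


class ImageBackend(Protocol):
    """Hypothetical interface that each vendor adapter would implement."""

    def generate(self, prompt: str) -> bytes: ...


class StubBackend:
    """Placeholder standing in for a real API client (assumption, not an SDK)."""

    def __init__(self, label: str) -> None:
        self.label = label

    def generate(self, prompt: str) -> bytes:
        # A real adapter would call the vendor API here and return image bytes.
        return f"{self.label}:{prompt}".encode("utf-8")


class ImageService:
    """Application code depends on this class, never on a vendor directly."""

    def __init__(self, backends: Dict[str, ImageBackend], default: str) -> None:
        if default not in backends:
            raise KeyError(f"unknown default backend: {default}")
        self._backends = backends
        self._default = default

    def generate(self, prompt: str, backend: Optional[str] = None) -> bytes:
        # Fall back to the default backend when the caller does not pick one.
        chosen = self._backends[backend or self._default]
        return chosen.generate(prompt)


service = ImageService(
    {"flux": StubBackend("flux"), "gpt-image": StubBackend("gpt-image")},
    default="flux",
)
print(service.generate("a red kettle on a white table"))
```

With this shape, swapping the default provider or adding a self-hosted backend becomes a configuration change rather than a rewrite of every call site.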

Enterprise marketing and agencies

Adobe Firefly leads here on indemnification alone. Ideogram handles text-heavy creative work, Leonardo AI steps in for campaign sequences that need visual consistency across many outputs, and the aesthetic ceiling takes a back seat to procurement-readiness. The image quality may not impress a creative director used to Midjourney, but the output will clear the legal review that kills many AI-generated campaigns before they reach the client deck.

EU-based creators and teams

Firefly (trained on licensed content with GDPR-aligned data handling) and Flux (built by a European company with more transparent training practices) become the viable primary tools. Midjourney and Stable Diffusion are harder to justify as the main engine given active litigation and training data opacity. As the EU AI Act moves through its enforcement phases in 2026, tools with unclear training data provenance increasingly fail enterprise compliance reviews. This shift started in 2025 and is accelerating through 2026.

Solo creators on a budget

The path here starts with Ideogram’s free tier, which gives 100 priority prompts per day with full feature access. That is the most permissive free tier in the category by a wide margin. Leonardo AI’s 150-tokens-per-day free tier adds variety, and if you already pay for ChatGPT Plus for other reasons, OpenAI GPT Image comes as an included capability rather than a separate subscription. Midjourney remains the least accessible starting point for anyone not yet earning from their work, because it has no free tier at all.

WARNING: THE LEGAL RISK NOBODY TALKS ABOUT

Three active legal cases affect which tools belong in your stack for commercial work:

- Disney and Universal et al. v. Midjourney Inc. (filed June 11, 2025, US District Court for the Central District of California). Six studios (Disney, Universal, Marvel, Lucasfilm, Fox, and DreamWorks) allege copyright infringement through training data use and unauthorized output generation of characters including specific IP references. The 110-page complaint seeks injunction and damages. As of April 2026, the case is in active litigation.

- Getty Images v. Stability AI (UK, with EU implications). The case centers on whether Stability AI’s use of copyrighted images in training constitutes infringement. The outcome is expected to influence similar cases across EU jurisdictions.

- GEMA v. OpenAI (Germany). Music rights case, but the transparency-of-training-data principle extends to image models and shapes the EU regulatory climate for all generative AI.

The EU AI Act moves into a full enforcement phase in August 2026, requiring labeling of AI-generated images, transparency about training data, and respect for copyright opt-outs. Fines reach €15 million or 3% of global annual turnover. Casual use is unaffected by most of this, but anyone producing commercial work for clients with compliance requirements should assume that training data opacity has moved from a soft preference to a hard disqualifier in procurement reviews.

What the Research Actually Says About AI Image Tools in Professional Work

The academic literature on AI image generation in creative workflows is thin but growing. A handful of recent studies provide useful grounding for decisions that are otherwise driven by anecdote.

Chen et al. (2024), published in the Journal of Engineering Design, measured the impact of an AI drawing tool with prompt optimization on conceptual design work. The study reported a 35.6% reduction in divergent ideation time for junior designers and a 21.6% reduction for senior designers. This suggests AI image tools deliver larger measured productivity gains to newer designers than to experienced ones, which aligns with the informal reports from creative teams.

Chandrasekera et al. (2024), in the International Journal of Architectural Computing, ran a 40-student controlled experiment comparing AI-assisted and non-AI design work. The AI group scored higher on creativity measures and lower on cognitive load (measured by NASA-TLX). The exact numerical scores were not reported in the published summary, but the direction is consistent with the Chen study.

Vimpari et al. (2023), published in Proceedings of the ACM on Human-Computer Interaction, studied 14 game industry professionals through qualitative interviews. The paper’s title phrase (“An Adapt-or-Die Type of Situation”) captures the finding: game industry professionals view text-to-image AI as an unavoidable shift they are actively adjusting to, with primary concerns around job displacement, copyright of training data, and the quality ceiling for production work. The study has been cited 71 times in subsequent research.

Jiang et al. (2024), describing the HAIGEN system for fashion design, measured a more dramatic effect: reference exploration time dropped from approximately 4 hours to approximately 0.5 hours using AI assistance. On those figures, that is an 87.5% reduction in time, or roughly an eightfold speedup. The same study found 90.2% of fashion designers surveyed expressed concern about privacy of their work when using AI tools, and 88.24% expressed concern about idea leakage.
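A before-and-after time pair like the HAIGEN one can be expressed as a percentage in more than one way, and the choice changes the headline number. The sketch below works through the two most common measures using the approximate 4-hour and 0.5-hour values quoted above. The function names are illustrative and do not come from the study.

```python
def time_reduction_pct(before_hours: float, after_hours: float) -> float:
    """Share of the original time that was saved, as a percentage."""
    return (before_hours - after_hours) / before_hours * 100


def speedup_factor(before_hours: float, after_hours: float) -> float:
    """How many times faster the task is completed."""
    return before_hours / after_hours


print(time_reduction_pct(4.0, 0.5))  # 87.5
print(speedup_factor(4.0, 0.5))      # 8.0
```

When comparing studies, it is worth checking which of these measures a reported “efficiency improvement” refers to, since the same data produces very different percentages.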

None of these studies directly evaluates the specific tools in this comparison. What they do establish is the baseline effect: AI image tools measurably reduce time and cognitive load for professional design work, and the adoption barriers are primarily about trust, IP, and specific workflow integration rather than raw capability.

The Verdict: Stop Asking “Which One”

The honest answer to “what is the best Midjourney alternative in 2026” is that there is no single alternative. The professionals producing commercial work with AI images are using two to four tools in combination, chosen for their specific strengths on the axes that matter for the work at hand. This mirrors the pattern we documented across our broader tech stack audit, where twenty-two paid tools were stress-tested and only eleven stayed in active production.

The stack that serves most people who arrive at this question looks something like this: Midjourney for aesthetic exploration, Ideogram for anything with text, one of Flux or Leonardo for production refinement, and Adobe Firefly if client work or EU compliance is in the mix. Calling this a compromise misses the point. The configuration reflects how the tools have specialized over the past two years, and fighting that specialization by insisting on one tool for everything is the expensive path.

If the cost of running multiple subscriptions is the objection, the single-tool choice that covers the broadest range is Adobe Firefly. Not because it is the best at any one thing, but because it has the broadest commercial safety and now integrates several partner models (Google, OpenAI, Black Forest Labs, Ideogram) inside a single interface. For people whose work is defined by one of the specialized axes, the answer collapses to a single tool: Midjourney if aesthetic ceiling dominates, Flux if the work lives inside code, Ideogram if the image has to contain text.
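The selection logic in the last two paragraphs can be written down as a small lookup. This is a sketch of the article's recommendations, not a tool any vendor ships: the axis names, the `build_stack` function, and the `single_subscription` flag are all hypothetical labels chosen for this example.

```python
# Axis -> tool mapping, following the recommendations in this article.
# The axis names are hypothetical labels invented for this sketch.
PRIMARY_TOOL = {
    "aesthetics": "Midjourney",
    "text_in_image": "Ideogram",
    "api_automation": "Flux",
    "commercial_safety": "Adobe Firefly",
    "character_consistency": "Leonardo AI",
}

# The article's pick when only one subscription is affordable.
BROADEST_SINGLE_TOOL = "Adobe Firefly"


def build_stack(needs: list[str], single_subscription: bool = False) -> list[str]:
    """Return the tools suggested for the given evaluation axes."""
    unknown = [n for n in needs if n not in PRIMARY_TOOL]
    if unknown:
        raise ValueError(f"unknown axis: {unknown}")
    # dict.fromkeys removes duplicates while keeping the caller's order.
    tools = list(dict.fromkeys(PRIMARY_TOOL[n] for n in needs))
    # Several needs but one subscription: fall back to the broadest tool.
    if single_subscription and len(tools) > 1:
        return [BROADEST_SINGLE_TOOL]
    return tools


print(build_stack(["aesthetics", "text_in_image", "commercial_safety"]))
print(build_stack(["aesthetics", "text_in_image"], single_subscription=True))
print(build_stack(["api_automation"], single_subscription=True))
```

When a single axis dominates, the one-subscription case returns that axis's specialist rather than Firefly, which matches the collapse described above.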

The practical takeaway is that your tool choice should be driven by what you are actually producing, not by which model ranked first in the most recent benchmark roundup.

Frequently Asked Questions

Is Midjourney still the best AI image generator in 2026?

Midjourney V8.1 remains unmatched for cinematic aesthetic quality and for generating visually striking images with minimal prompt engineering. It is not the best tool for text in images (Ideogram wins), for API workflows (Flux or Stable Diffusion win), for commercial safety (Firefly wins), or for character consistency across many outputs (Leonardo wins). The answer depends on which axis matters for the work.

Can I use Midjourney images commercially?

Yes. Paid Midjourney subscribers have commercial use rights for their outputs. Companies with gross revenue over $1 million per year must use the Pro or Mega plan ($60 or $120 per month). The active Disney/Universal lawsuit does not change these rights as of April 2026, but it does introduce a risk factor for anyone producing work that derives from copyrighted characters.

Which AI image generator is best for YouTube thumbnails?

Ideogram. Its text rendering is meaningfully better than that of any other tool in this comparison, which matters because thumbnails almost always include text. Midjourney and Firefly are viable for thumbnail images without text.

What happens to DALL-E 3 after May 2026?

OpenAI retires the DALL-E 3 API on May 12, 2026. The replacement is gpt-image-1 (or gpt-image-1-mini), which is accessible through the ChatGPT interface, the Images API, and the Responses API. Existing workflows built on the DALL-E 3 API need to migrate before the shutdown date.
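For workflows that call the Images API directly, much of the migration is parameter translation. The sketch below assumes the differences described in OpenAI's Images API reference at the time of writing: gpt-image-1 does not accept `response_format` or `style`, uses `low`/`medium`/`high`/`auto` quality levels, and offers 1536-pixel rather than 1792-pixel wide and tall sizes. The `migrate_request` helper and both mapping tables are hypothetical, and the choice of nearest equivalent (for example `hd` to `high`) is an assumption to verify against the current documentation.

```python
from typing import Any

# Assumed nearest-equivalent sizes; unknown sizes fall back to "auto".
SIZE_MAP = {
    "1024x1024": "1024x1024",
    "1792x1024": "1536x1024",  # landscape
    "1024x1792": "1024x1536",  # portrait
}

# Assumed nearest-equivalent quality levels.
QUALITY_MAP = {"standard": "medium", "hd": "high"}


def migrate_request(dalle3_params: dict[str, Any]) -> dict[str, Any]:
    """Translate DALL-E 3 request parameters into gpt-image-1 parameters."""
    params = dict(dalle3_params)  # copy so the caller's dict is untouched
    params["model"] = "gpt-image-1"
    # Parameters the newer model is assumed not to accept.
    params.pop("response_format", None)
    params.pop("style", None)
    if "size" in params:
        params["size"] = SIZE_MAP.get(params["size"], "auto")
    if "quality" in params:
        params["quality"] = QUALITY_MAP.get(params["quality"], "auto")
    return params


old_request = {
    "model": "dall-e-3",
    "prompt": "A concert poster with the headline 'LIVE TONIGHT'",
    "size": "1792x1024",
    "quality": "hd",
    "style": "vivid",
    "response_format": "url",
}
new_request = migrate_request(old_request)
print(new_request)
```

With the official Python SDK, the translated dictionary would typically be passed as keyword arguments to `client.images.generate(...)`. Because gpt-image-1 is understood to return base64-encoded image data rather than a hosted URL, downstream code that downloads from a URL needs to decode and save the image itself.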

Is Stable Diffusion free to use commercially?

It depends. Open-weight Stable Diffusion models are generally free to use for individuals and for companies below certain revenue thresholds under Stability AI’s Community License. Above those thresholds (typically $1M revenue per year), an Enterprise License is required. Self-hosted use is technically free but operationally expensive due to compute requirements.

Which tool is safest for enterprise client work?

Adobe Firefly. Adobe offers IP indemnification for paid subscribers, trained the model on licensed and public domain content, and has the enterprise procurement relationships that make it the easiest AI image tool to get approved by legal review. If the work involves broader design platform decisions rather than image generation alone, our Canva and Adobe Express side-by-side comparison covers how Adobe’s licensing approach extends from Firefly into Express, and what that means versus Canva’s user-content-trained AI.

What is Nano Banana?

Nano Banana is the marketing name for Google’s Gemini-based image generation model, listed in Google DeepMind’s official model lineup. The distinguishing feature versus Midjourney and Flux is real-time web grounding, which lets the model reference current information during generation rather than relying only on training data. Access is primarily through the Gemini app, Google AI Studio, or Vertex AI.

Can I run these tools offline?

Only Stable Diffusion and Flux dev (open weights) can be run fully offline on your own hardware. Midjourney, DALL-E / GPT Image, Firefly, Leonardo, Ideogram, and Nano Banana are all cloud services and require an internet connection.